Sync SEO Data + Content + AI Insights: 3 Workflows

Tools to Synchronize SEO Data, Content, and AI Insights: 3 Real-World Workflows (and What Actually Changes)

Most teams searching for tools to synchronize SEO data, content, and AI insights aren’t really shopping for “one more platform.” They’re trying to stop the churn: exporting reports, rewriting briefs, rechecking metadata, and arguing about what moved the needle—because the work and the results live in different places.

This is an operations problem. When data, content production, publishing, and measurement don’t connect end-to-end, you get an Operations Gap: decisions are made with partial context, execution is delayed by handoffs, and outcomes are hard to attribute.

If you want the mechanism behind a unified approach, start with how the Connectivity Suite works to unify your SEO stack—then come back to the workflows below to see what “synchronization” looks like in practice.

The real problem isn’t “more tools”—it’s the Operations Gap

What “synchronizing SEO data, content, and AI insights” actually means (in plain terms)

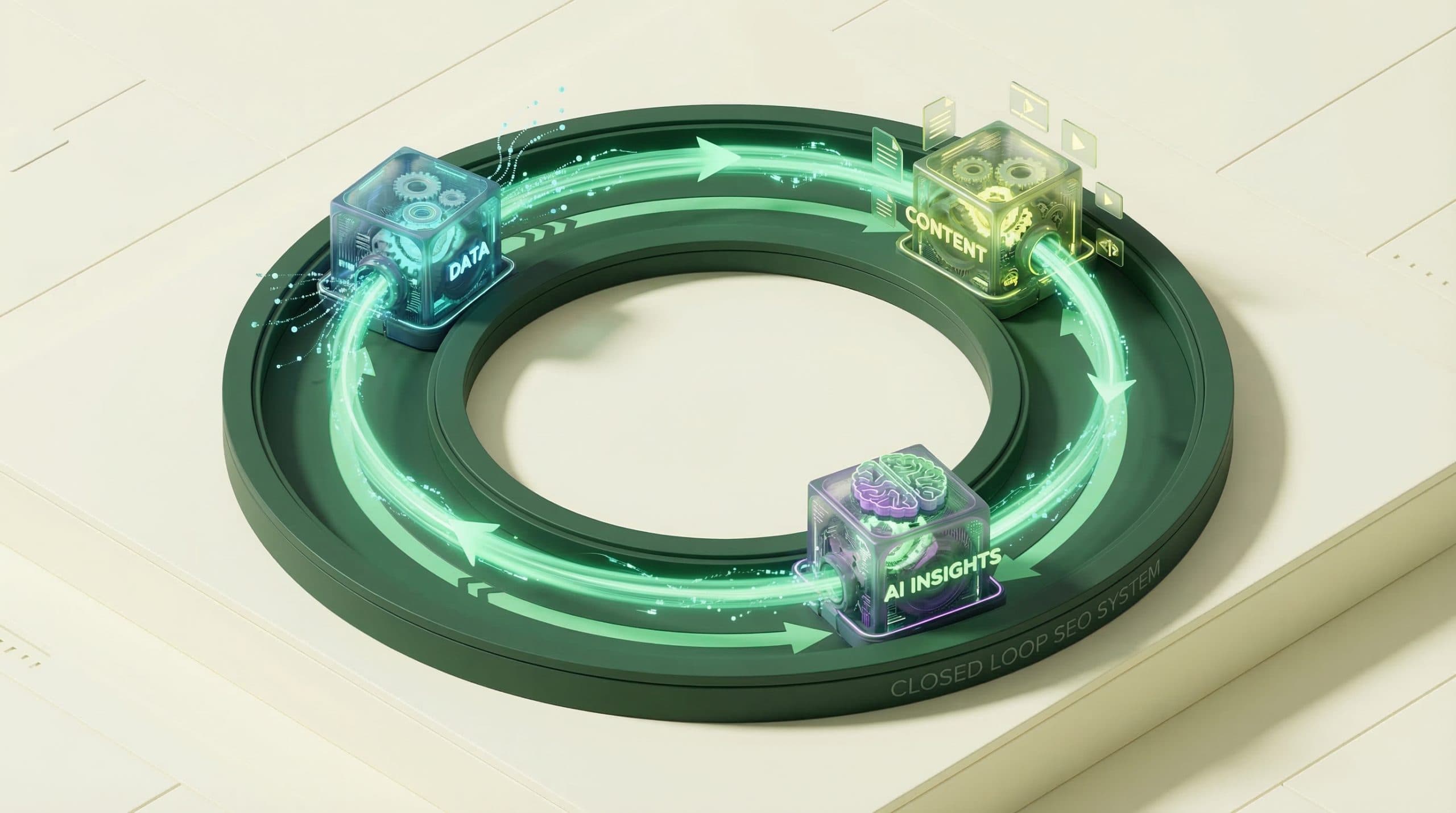

Synchronization is not a dashboard. It’s a closed loop:

- Signals (search performance, indexation health, site changes, commerce signals) flow into the same operational context where work is planned.
- Actions (briefs, drafts, on-page updates, visuals, metadata, internal links) move through a consistent workflow to publishing.
- Measurement connects back to the specific actions taken, so you can learn what worked and repeat it.

When that loop is reliable, AI becomes more than an output generator. It becomes a decision accelerator: it helps you choose what to do next, execute it quickly, and validate impact faster.

The 3 failure modes that make teams feel stuck (data silos, manual handoffs, unclear ROI)

- Data silos: SEO data sits in one place, content planning in another, publishing in a CMS, revenue or conversions somewhere else. The “truth” depends on who made the last export.
- Manual handoffs: SEO research → content brief → draft → visuals → publish becomes a game of tickets, Slack threads, doc versions, and rework.
- Unclear ROI: Even when rankings or clicks improve, teams can’t confidently connect “we did X” to “Y changed,” so prioritization becomes opinion-driven.

How a Connectivity Suite changes the workflow (not just the tech stack)

Two-way integrations vs one-way exports (why it matters for speed and trust)

Most “stacks” are held together by one-way exports: pull data, paste it into a sheet, manually interpret it, then ask someone else to execute changes elsewhere.

A Connectivity Suite mindset is different: it’s about reducing distance between insight and action. The best operational setups do two things well:

- Keep inputs current (so you’re not acting on stale reports).
- Make actions traceable (so you can audit what changed, when, and why).

Even if every system can’t be fully bi-directional, the workflow should still be designed so that teams don’t have to “recreate reality” in spreadsheets to get work shipped.
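To make "traceable" concrete, here is a minimal sketch of a change log that stores the reason next to the change. The `ChangeRecord` schema and the `changes_for` helper are hypothetical names chosen for illustration; they are not part of any specific product or CMS API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ChangeRecord:
    """One shipped change, stored with the reason it was made (hypothetical schema)."""
    url: str
    field_changed: str   # e.g. "title", "meta_description", "internal_links"
    old_value: str
    new_value: str
    reason: str          # the signal or hypothesis behind the change
    author: str
    changed_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def changes_for(log: list[ChangeRecord], url: str) -> list[ChangeRecord]:
    """Return every recorded change for one URL, oldest first."""
    return sorted((r for r in log if r.url == url), key=lambda r: r.changed_at)
```

Keeping `reason` as a required field is the design choice that matters here: a change cannot be logged without saying why it was made, which is what lets you audit it later.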

What to connect first: CMS, webmaster tools, and commerce signals

If you’re trying to close the Operations Gap quickly, start with the highest-friction handoff. For many teams, that’s:

- Your CMS publishing workflow (where changes actually go live, e.g., WordPress for many sites).
- A webmaster tool (for crawl, indexation, and search visibility signals, e.g., Bing Webmaster Tools where applicable).
- Commerce signals if you’re on an eCommerce stack (e.g., WooCommerce for many WordPress stores).

Note: Integration availability varies by stack. Don’t design your workflow around assumptions—design it around what your systems can reliably connect today, then expand.

Case Example 1 — From “spreadsheet SEO” to a single source of truth

Starting point (symptoms, tools, handoffs)

Symptoms: weekly reporting takes hours, different teams cite different numbers, and prioritization meetings become debates.

- SEO exports performance data into spreadsheets.
- Content team plans in docs/boards with no direct performance context.
- Publishing happens in the CMS, but the “why” behind changes isn’t stored alongside the work.

What breaks: definitions drift (what counts as “a win”), task context gets lost, and recurring work (like refreshes) becomes reactive.

What got synchronized (data → content decisions → publishing)

The workflow shifts from “reporting as an event” to “operations as a system”:

- Inputs: bring key SEO signals into the same operational environment where work is planned (not just reviewed).
- Decisions: translate signals into a prioritized list (pages to refresh, topics to expand, internal links to add), with clear owners.
- Publishing: connect the work to the CMS so changes are shipped consistently and can be audited later.

Go/Organic’s approach is to close this loop through the Connectivity Suite (connecting your stack) and an operating layer where workflows can be executed consistently—so “what we learned” becomes “what we shipped,” without fragile manual glue.

Example metrics to track (before/after): reporting lag, duplicate work, time-to-brief

Don’t start by chasing ranking promises. Start by proving the workflow is synchronized:

- Reporting lag: how many days between a performance change and the team seeing it in a usable form?
- Duplicate work rate: how often do multiple people analyze the same page/topic because information isn’t shared?
- Time-to-brief: how long does it take to go from “we should update this” to a usable brief?
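Reporting lag and time-to-brief are both "days between two dates," so one small helper can cover them. The sketch below assumes you can export a pair of dates per event; the function names and sample dates are illustrative only.

```python
from datetime import date
from statistics import median


def days_between(start: date, end: date) -> int:
    """Whole days from start to end."""
    return (end - start).days


def median_lag_days(events: list[tuple[date, date]]) -> float:
    """Median days across (start, end) pairs, e.g. (change happened, team saw it)."""
    return median(days_between(start, end) for start, end in events)


# Illustrative data: when a performance change happened vs. when the team saw it.
reporting_events = [
    (date(2024, 3, 1), date(2024, 3, 8)),    # 7 days
    (date(2024, 3, 4), date(2024, 3, 6)),    # 2 days
    (date(2024, 3, 10), date(2024, 3, 15)),  # 5 days
]
print(median_lag_days(reporting_events))  # 5
```

The median is used rather than the mean so that one unusually slow report does not dominate the number. The same function works for time-to-brief if each pair is (update requested, brief ready).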

Case Example 2 — Faster content velocity without losing SEO control

Starting point: content team moves fast, SEO team can’t verify impact

Symptoms: content ships, but SEO feels like a bottleneck because it can’t validate basics at scale (metadata completeness, internal linking, on-page consistency). Content feels blocked; SEO feels ignored.

- Ideas are generated quickly (often with AI), but briefs are inconsistent.
- Drafts bounce between stakeholders because requirements aren’t standardized.
- Publishing checklists are manual, so errors slip through (missing titles, thin intros, inconsistent headings).

Workflow change: idea → draft → visuals → publish with fewer handoffs

The goal isn’t “more output.” It’s fewer handoffs and repeatable QA:

- Standardize the brief so each piece starts with the same minimum viable requirements (intent, target page, on-page must-haves, internal links to include).
- Keep creation and operations connected so content, visuals, and publishing don’t happen in separate “worlds.”
- Make publishing a workflow instead of an ad-hoc checklist.

In Go/Organic terms, this is where a coordinated system matters: a Content Engine to structure content operations, a Visual Operations Suite to keep visual production aligned, and a Publishing Engine to reduce friction between “ready” and “live.” The point is not any single module—it’s that the workflow is designed to move from decision to publish without losing context.

Example metrics: time-to-publish, revision cycles, % of pages published with complete metadata

- Time-to-publish: median days from approved idea to live page.
- Revision cycles: how many back-and-forth rounds per piece (and why)?
- Metadata completeness rate: % of published pages that ship with agreed essentials (title, meta description, headings, internal links).
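The metadata completeness rate is simple enough to compute from a page export. This is a minimal sketch that assumes each page is a dictionary; the field names in `REQUIRED_FIELDS` are hypothetical and should be swapped for whatever essentials your team has agreed on.

```python
# Hypothetical field names; replace with your team's agreed essentials.
REQUIRED_FIELDS = ("title", "meta_description", "h1", "internal_links")


def is_complete(page: dict) -> bool:
    """A page is complete when every required field is present and non-empty."""
    return all(page.get(name) for name in REQUIRED_FIELDS)


def completeness_rate(pages: list[dict]) -> float:
    """Percentage of pages that shipped with all required fields."""
    if not pages:
        return 0.0
    return 100 * sum(is_complete(page) for page in pages) / len(pages)


# Illustrative data: the last page is missing its meta description.
pages = [
    {"title": "A", "meta_description": "a", "h1": "A", "internal_links": ["/b"]},
    {"title": "B", "meta_description": "b", "h1": "B", "internal_links": ["/a"]},
    {"title": "C", "meta_description": "c", "h1": "C", "internal_links": ["/a"]},
    {"title": "D", "meta_description": "", "h1": "D", "internal_links": ["/a"]},
]
print(completeness_rate(pages))  # 75.0
```

An empty string or an empty list of internal links counts as missing, which matches how a manual checklist would treat them.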

Case Example 3 — Turning AI insights into measurable outcomes (not just outputs)

Starting point: AI generates content, but performance feedback is disconnected

Symptoms: AI speeds up drafting, but the team can’t tell what to refresh, what to prune, or which formats work. AI becomes a content factory with no compass.

- AI suggestions are created without a consistent feedback loop from performance data.
- Pages get published, but measurement is delayed or too high-level to guide next actions.
- Teams over-index on output volume because it’s the easiest thing to count.

Workflow change: connect performance signals back to operations decisions

The fix is to treat AI as part of a learning system:

- Define a small set of performance signals you trust (visibility, clicks, crawl/indexation health, and where relevant, commerce proxies).
- Turn those signals into repeatable triggers (e.g., “refresh pages with declining clicks” or “expand pages with impressions but low CTR”).
- Operationalize the response so the trigger creates work that can be shipped and audited.

That’s what an SEO Operating System is meant to do: reduce the time between “we learned something” and “we shipped the fix.” In Go/Organic language, this is where a velocity layer (e.g., Velocity Engine) supports consistent throughput—but only when connected to publishing and measurement.
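The two example triggers above ("refresh pages with declining clicks" and "expand pages with impressions but low CTR") can be written as plain rules. The sketch below is one possible shape, not how any particular product implements it. The `PageStats` fields and every threshold (a 20% click decline, 1,000 impressions, a 1% CTR) are assumptions for illustration and should be tuned to your own data.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    """Per-page numbers for two comparable periods (hypothetical schema)."""
    url: str
    clicks_prev: int
    clicks_now: int
    impressions_now: int


def triggers(page: PageStats,
             decline_threshold: float = 0.2,   # assumed: 20% drop in clicks
             min_impressions: int = 1000,      # assumed: enough data to judge CTR
             low_ctr: float = 0.01) -> list[str]:  # assumed: CTR under 1% is low
    """Return the actions a page qualifies for under the assumed thresholds."""
    actions = []
    if page.clicks_prev > 0:
        drop = (page.clicks_prev - page.clicks_now) / page.clicks_prev
        if drop >= decline_threshold:
            actions.append("refresh")
    if page.impressions_now >= min_impressions:
        ctr = page.clicks_now / page.impressions_now
        if ctr < low_ctr:
            actions.append("expand")
    return actions


print(triggers(PageStats("/pricing", 100, 70, 500)))  # ['refresh']
print(triggers(PageStats("/guide", 10, 10, 5000)))    # ['expand']
```

Each returned action would then become a task with an owner, which is the "operationalize the response" step: the rule produces work, not just a flag on a dashboard.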

Example metrics: refresh cadence, pages improved per month, time-to-diagnosis

- Refresh cadence: how often key pages get meaningful updates (not just minor edits).
- Pages improved per month: count of pages that receive tracked, substantive upgrades tied to a measurable hypothesis.
- Time-to-diagnosis: time from performance drop to root-cause hypothesis and an assigned fix.

If you’re evaluating whether to keep a fragmented stack or consolidate into a system, use this page to pressure-test your options: Compare an SEO OS vs separate tools for syncing data + content.

What to compare when choosing tools vs an SEO Operating System

The decision isn’t “platform vs tools.” It’s whether your setup can reliably close the loop from insight to action to measurement. This SEO Operating System vs separate SEO tools comparison goes deeper; use the checklist below as your quick filter.

Comparison checklist: integration depth, workflow automation, measurement/ROI linkage

- Integration depth: Are connections robust enough to reduce manual exports, or do you still rebuild context by hand?
- Workflow automation: Can you standardize and repeat your core workflows (brief → draft → publish → measure), or is every project a custom process?
- Measurement linkage: Can you tie specific operational actions to outcomes (even imperfectly), or do you only have aggregate dashboards?
- Governance: Can you audit what changed, who changed it, and why?
- Adoption: Will content, SEO, and ops teams actually use the system daily, or will it become “a reporting tool” that no one trusts?

Red flags: “AI content” without publishing + measurement, dashboards without operational actions

- AI output without operational closure: generating drafts faster doesn’t help if publishing is still slow or QA is still manual.
- Dashboards without actions: if insights don’t create prioritized work, you haven’t reduced the Operations Gap.
- Exports as the default: if every weekly cycle starts with CSVs, the system is fragile and won’t scale with velocity.

Practical next step: pick one workflow and run a 2-week synchronization test

Minimal test plan: connect sources, define one KPI, ship 5–10 pages, review outcomes

- Pick one workflow (refreshes, new content, or technical fixes). Keep it narrow.
- Connect the minimum sources needed to run end-to-end: publishing system + one trusted search signal source (and commerce signals if relevant).
- Define one operational KPI (e.g., time-to-brief or time-to-publish) and one outcome KPI (e.g., clicks to the updated pages).
- Ship 5–10 pages using the same standardized steps each time.
- Review after 2 weeks:
  - Did reporting lag shrink?
  - Did revision cycles drop?
  - Can you clearly explain what changed and what happened after?

When it’s time to consolidate (signals you’ve outgrown a patchwork stack)

- You spend more time moving information than making decisions.
- You can’t confidently answer: “What did we ship last month, and what did it do?”
- Your content velocity increases but quality/SEO consistency decreases.
- AI speeds up drafting, but overall cycle time doesn’t improve.

If those sound familiar, you’re not lacking tools—you’re lacking an operating system that keeps work and results connected.

If you’re planning consolidation and need to align stakeholders, start here: See pricing to unify your stack and close the Operations Gap.

FAQ

What does it mean to synchronize SEO data, content, and AI insights?

It means performance signals (rankings, clicks, indexation, revenue proxies) reliably inform what content gets created/updated, and those actions are published and measured in one connected workflow—without manual exports, duplicate spreadsheets, or broken handoffs.

Why do “best-of-breed” stacks still feel slow?

Because the bottleneck is usually operational: disconnected tools create manual steps, inconsistent definitions, and delayed feedback loops. Even strong point tools can’t close the Operations Gap if data, content production, publishing, and measurement live in separate systems.

What metrics prove synchronization is working?

Look for operational and outcome metrics: time-to-brief, time-to-publish, reporting lag (days to insight), revision cycles, % of pages shipped with complete metadata, and time-to-diagnosis for performance drops. Then tie those to organic outcomes over time.

Do I need every integration connected to start?

No. Start with the highest-friction handoff (often CMS publishing + a webmaster tool signal). Prove a single workflow end-to-end, then expand connections once you’ve validated the time savings and measurement clarity.

How is an SEO Operating System different from an SEO tool?

An SEO OS is designed to unify the stack and automate the workflow from operational actions to measurable results, rather than optimizing one slice (research, reporting, or content) in isolation.