SEO Operations Best Practices for 2026 (Framework)

Best Practices for SEO Operations Management in 2026: A Practical Framework + Templates

In 2026, “doing SEO” isn’t the hard part—running SEO is. The teams that win aren’t only better at keyword research or content; they operate with a repeatable management system that turns ideas into shipped work, ties execution to outcomes, and protects quality at scale.

This article gives you a 2026-ready operating model (stack + workflow + measurement), plus copy/paste templates you can implement immediately. If you want the deeper hub that connects roles, rituals, and KPI governance into one system, start with our SEO Operations Playbook for teams and KPIs.

What “SEO operations management” means in 2026 (and what changed)

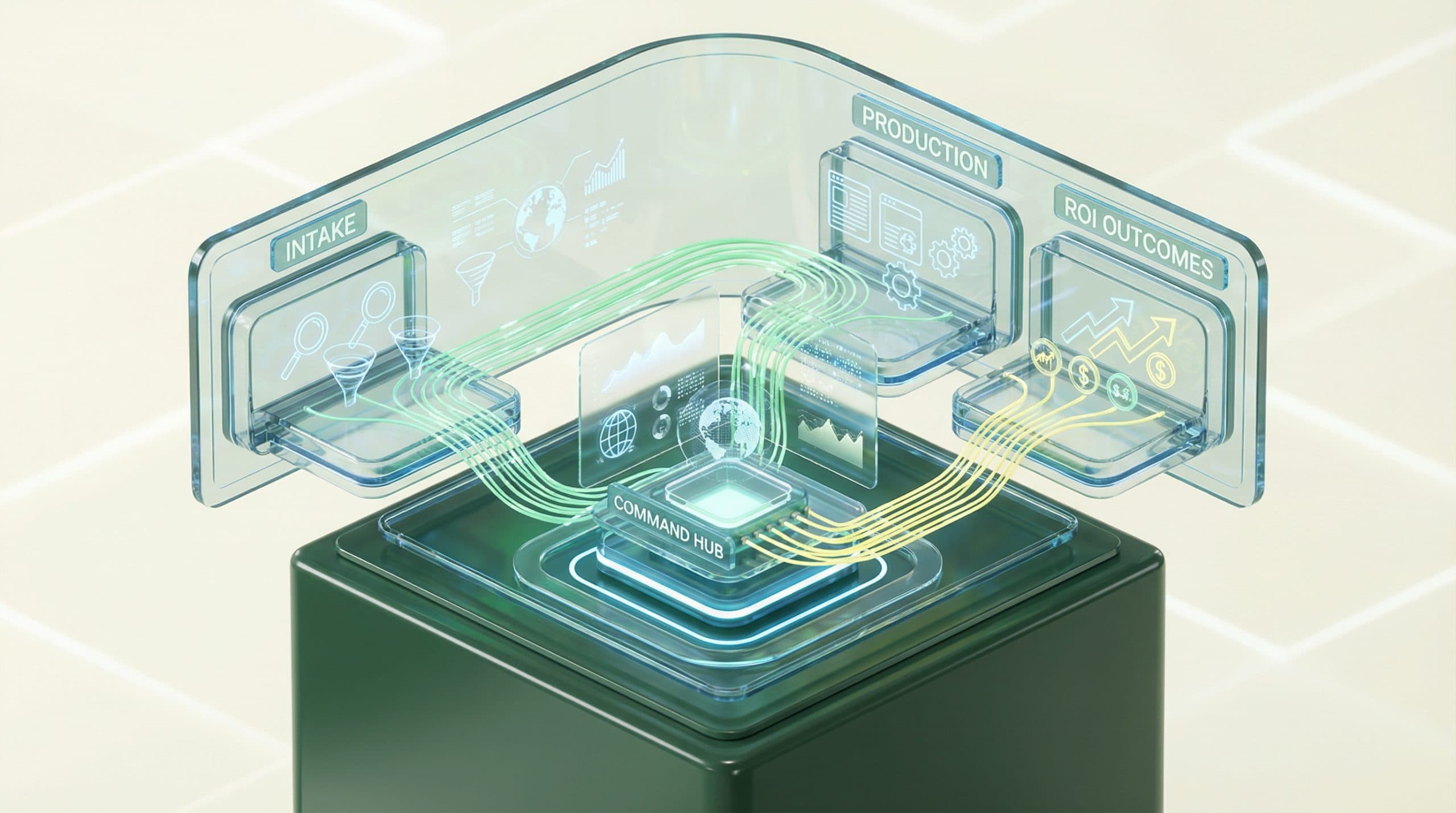

SEO operations management is the operating system behind your SEO strategy: the people, processes, governance, and measurement that move work from intake → prioritization → production → publish → learn → iterate. It’s how you make SEO predictable and scalable.

The Operations Gap: where speed and ROI get lost

Most SEO programs fail in the same place: between planning and results. That gap shows up as:

- Slow cycle time (weeks between brief and publish)
- Rework loops (unclear requirements, inconsistent QA, shifting approvals)
- Measurement lag (reporting is late, inconsistent, or not tied to revenue)
- Fragmented ownership (nobody owns the end-to-end outcome)

In 2026, this “Operations Gap” is costly because competitors who have closed it ship more, learn faster, and compound their improvements.

2026 realities: AI-assisted production, higher quality bars, and measurement scrutiny

Trends we see reshaping SEO operations (and the operational implications):

- AI-assisted production is normal → you need governance: sourcing, review standards, brand voice, and change logs.
- Quality thresholds are higher → QA can’t be optional; it must be designed into the workflow with clear acceptance criteria.
- Measurement is scrutinized → teams must connect operational inputs (what you ship) to outcomes (rank/traffic) and business impact (leads/revenue).

The 2026 SEO Operations Management Framework (3 layers)

Strong SEO ops is not a tool list—it’s three layers that reinforce each other.

Layer 1 — Unify your stack (single source of truth)

Your SEO program needs a shared “source of truth” for:

- Inventory: every URL you care about (type, intent, status, owner, last updated)
- Work: what’s in the pipeline, what’s blocked, what shipped
- Performance: leading + lagging metrics tied to specific initiatives

Best practice: define one canonical dataset (even if it’s simple at first) and ensure every meeting and decision references it.
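
As a minimal sketch of what one row of that canonical dataset could look like, the Python below models the inventory fields listed above and flags URLs that nobody owns or that have gone stale. The field names, the sample rows, and the 180-day threshold are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class InventoryRow:
    """One row of the URL inventory (field names are illustrative)."""
    url: str
    page_type: str      # e.g. "pillar", "product", "blog"
    intent: str         # e.g. "informational", "transactional"
    status: str         # e.g. "live", "in_refresh", "retired"
    owner: str          # named person or team; empty string means unowned
    last_updated: date


def needs_attention(rows, today, max_age_days=180):
    """Return URLs that are unowned or older than the assumed refresh threshold."""
    flagged = []
    for row in rows:
        age_days = (today - row.last_updated).days
        if not row.owner or age_days > max_age_days:
            flagged.append(row.url)
    return flagged


# Hypothetical sample data
inventory = [
    InventoryRow("/pricing", "product", "transactional", "live", "maria", date(2025, 11, 3)),
    InventoryRow("/blog/seo-ops", "blog", "informational", "live", "", date(2025, 12, 1)),
    InventoryRow("/guides/audit", "pillar", "informational", "live", "dev", date(2025, 2, 10)),
]

print(needs_attention(inventory, today=date(2026, 1, 15)))
# ['/blog/seo-ops', '/guides/audit']
```

The same structure works as spreadsheet columns; the point is that ownership and freshness are queryable rather than tribal knowledge.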

Layer 2 — Automate your workflow (idea → publish with governance)

In 2026, speed comes from reducing manual coordination, not from writing faster. The workflow should include:

- Intake with standardized briefs and constraints
- Prioritization with transparent scoring
- Production with SOPs, QA gates, and versioning
- Publishing handoffs that look like release management (checklists, sign-offs, rollback plan when needed)

Layer 3 — Measure what matters (ops actions → outcomes → ROI)

Operational improvements only stick when you can prove they work. That means measuring:

- Inputs (hours, items, refreshes, experiments)
- Throughput (cycle time, velocity, QA pass rate)
- Outcomes (rank distribution, CTR, conversions)
- Business impact (qualified leads, revenue contribution where measurable)

Best practice: assign owners and review cadence for every KPI. If nobody owns it, it won’t be managed.

Best practices by operating area (what “good” looks like)

Intake & prioritization (demand shaping + scoring)

High-functioning SEO teams don’t accept random requests—they shape demand. “Good” looks like:

- One intake path (no side channels that bypass prioritization)
- Clear request types (new content, refresh, technical fix, internal link sprint, experiment)
- Explicit constraints (compliance rules, brand requirements, SMEs available, deadlines)
- Scoring model that makes trade-offs visible

Operational tip: treat prioritization as a product backlog. If it’s not scored, it’s not scheduled.

Planning & production (briefs, QA, approvals, versioning)

In 2026, speed comes from repeatability. “Good” looks like:

- Brief templates with a definition of done
- Editorial + SEO QA gates before publish (not after)
- Approval policy (what requires legal/brand review vs. what can ship)
- Versioning for refreshes (what changed, why, and expected impact)

Publishing & technical handoffs (release management for SEO)

SEO work often fails at the handoff to web teams. “Good” looks like:

- Standard tickets for technical changes (acceptance criteria, screenshots, test plan)
- Release windows and owners for deploys
- Rollback awareness for changes that can harm templates or structured data
- Post-release verification (indexing signals, rendering check, internal links, canonicals)

Measurement & reporting (leading vs lagging indicators)

Teams get stuck when they only measure lagging outcomes (traffic, rankings) and ignore what they can control weekly. “Good” looks like:

- Leading indicators reviewed weekly (cycle time, QA pass rate, refresh rate)
- Outcome indicators reviewed monthly (rank distribution, CTR, conversions)
- Initiative tagging so performance changes map back to shipped work

Governance & risk (brand, compliance, AI usage, content integrity)

AI-assisted workflows raise governance requirements. “Good” looks like:

- AI usage policy (allowed tasks, disclosure rules if applicable, prohibited content)
- Citation/source standards (what claims require sources and how they’re verified)
- Content integrity checks (plagiarism risk, factual review, brand voice)
- Audit trail for changes on high-stakes pages (pricing, legal, YMYL topics)

Team model for 2026: roles, responsibilities, and decision rights

Recommended roles (Head of SEO/Growth, SEO Ops, Content Ops, Analyst, Eng liaison)

You don’t need a massive org chart, but you do need clear decision rights. Common 2026 model:

- Head of SEO/Growth: owns strategy, portfolio allocation, exec reporting
- SEO Ops Lead: owns intake, prioritization system, workflow health, cadence
- Content Ops / Managing Editor: owns briefs, production SOPs, editorial QA
- SEO Analyst: owns measurement design, dashboards, experiment readouts
- Engineering Liaison: owns technical backlog translation and release coordination

If you can’t hire all of these, assign them as explicit functions with named owners.

RACI: who owns strategy, execution, QA, and reporting

Use a simple RACI to stop work from bouncing between teams.

- Strategy / roadmap: R = Head of SEO/Growth, A = Head of SEO/Growth, C = Analyst/Content Ops, I = Eng liaison
- Intake / prioritization: R = SEO Ops, A = Head of SEO/Growth, C = Content Ops/Analyst, I = Stakeholders
- Brief + production: R = Content Ops, A = Head of SEO/Growth (or Content lead), C = SEO Ops/SMEs, I = Stakeholders
- SEO QA: R = SEO Ops, A = Head of SEO/Growth, C = Content Ops, I = Publisher
- Reporting / KPI hygiene: R = Analyst, A = Head of SEO/Growth, C = SEO Ops, I = Exec team

KPI system: the metrics that actually manage SEO operations

KPI tree template (inputs → throughput → outcomes → business impact)

Copy/paste KPI tree (edit to fit your business):

- Inputs
  - Content briefs created/week
  - Refreshes initiated/week
  - Technical tickets submitted/week
- Throughput (Ops)
  - Cycle time (brief → publish)
  - Throughput (items shipped/week)
  - QA pass rate (% passed first time)
  - Rework rate (% requiring re-approval)
  - Blocker time (days blocked per item)
- Outcomes (SEO)
  - Rank distribution (top 3 / top 10 / top 20 counts)
  - CTR by query group / page type
  - Organic sessions to priority pages
  - Conversions (leads/signups) from organic to priority pages
- Business impact
  - Qualified pipeline influenced by organic (where measurable)
  - Revenue contribution / CAC efficiency (where measurable)

Core operational KPIs (velocity, cycle time, QA pass rate, refresh rate)

- Cycle time: median days from approved brief to published
- Velocity: items shipped per week by type (new, refresh, tech)
- QA pass rate: % that pass on-page + editorial QA on first submission
- Refresh rate: % of priority URLs refreshed on a defined cadence
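
To make two of these definitions concrete, here is a small Python sketch that computes cycle time and QA pass rate from a shipped-item log. The log layout and the sample dates are hypothetical; in practice the rows would come from whatever system of record you use.

```python
from datetime import date
from statistics import median

# Hypothetical shipped-item log:
# (item type, brief approved, published, passed QA on first submission)
shipped = [
    ("new",     date(2026, 1, 5),  date(2026, 1, 19), True),
    ("refresh", date(2026, 1, 6),  date(2026, 1, 12), True),
    ("tech",    date(2026, 1, 8),  date(2026, 1, 29), False),
    ("refresh", date(2026, 1, 12), date(2026, 1, 20), True),
]


def cycle_time_days(items):
    """Median days from approved brief to published."""
    return median((published - approved).days for _, approved, published, _ in items)


def qa_pass_rate(items):
    """Percentage of items that passed QA on first submission."""
    passed = sum(1 for _, _, _, first_pass in items if first_pass)
    return 100 * passed / len(items)


print(cycle_time_days(shipped))  # 11.0
print(qa_pass_rate(shipped))     # 75.0
```

Using the median rather than the mean keeps one slow technical ticket (21 days here) from dominating the cycle-time figure.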

Outcome KPIs (rank distribution, CTR, conversions, revenue contribution)

- Rank distribution instead of averages (more stable for management)
- CTR by intent cluster (signals snippet/title/meta alignment)
- Conversions tied to priority pages and query groups
- Revenue contribution only where your attribution model supports it (avoid false precision)
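
The stability point about rank distribution can be illustrated with a short Python sketch. It assumes the buckets are cumulative (a top 3 result also counts toward top 10 and top 20) and uses made-up positions; neither detail comes from a specific rank-tracking tool.

```python
from statistics import mean


def rank_distribution(positions):
    """Count tracked queries in cumulative rank buckets (assumed convention)."""
    return {
        "top_3": sum(1 for p in positions if p <= 3),
        "top_10": sum(1 for p in positions if p <= 10),
        "top_20": sum(1 for p in positions if p <= 20),
    }


# Hypothetical positions for six tracked queries, before and after
# one long-tail query drops from position 48 to position 90.
before = [1, 2, 4, 9, 15, 48]
after = [1, 2, 4, 9, 15, 90]

print(rank_distribution(before))  # {'top_3': 2, 'top_10': 4, 'top_20': 5}
print(rank_distribution(after))   # {'top_3': 2, 'top_10': 4, 'top_20': 5}
print(round(mean(before), 2), round(mean(after), 2))  # 13.17 20.17
```

The average position moves by seven places even though nothing changed for the queries that matter, while the distribution stays put.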

Cadence & rituals: the meeting system that keeps SEO moving

Rituals aren’t bureaucracy; they’re how you reduce coordination cost.

Weekly: pipeline review + blockers

- Review WIP limits and what ships this week
- Escalate blockers (SME delays, dev bandwidth, approvals)
- Check leading indicators (cycle time, QA pass rate)

Biweekly: experiment review + learnings

- Review tests (titles, internal linking patterns, template changes)
- Decide: roll out, iterate, or stop
- Update SOPs based on learnings

Monthly: KPI review + roadmap reallocation

- Review KPI tree and outcomes by initiative
- Reallocate roadmap (double down, deprioritize, resolve constraints)
- Align with growth goals and seasonality

Quarterly: strategy reset + content/tech portfolio review

- Re-validate target categories, segments, and intent coverage
- Audit content portfolio (keep/refresh/merge/retire)
- Review technical debt that blocks publishing velocity

Templates you can copy (framework + template section)

These templates are intentionally “tool-agnostic.” Implement them in a doc, spreadsheet, or your workflow system.

Template 1 — SEO work intake form (brief + constraints + success metrics)

- Request type: New / Refresh / Technical / Experiment / Internal links
- Target URL (if refresh):
- Topic + intent: (what user is trying to do)
- Primary keyword:
- Secondary queries/entities:
- Audience: (who and what stage)
- Constraints: legal/claims, brand, SME availability, required sources
- Definition of done: (QA gates, internal links, visuals, schema placeholder)
- Success metrics: leading + outcome KPI targets
- Owner + due date:

Template 2 — Prioritization scorecard (impact, effort, confidence, risk)

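Score each item 1–5 on every dimension (5 = highest impact, confidence, effort, or risk), then compute: (Impact × Confidence) / (Effort × Risk). Higher scores get scheduled first.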

- Impact: expected outcome magnitude
- Confidence: strength of evidence (data, prior tests, demand)
- Effort: time/cost including approvals and dependencies
- Risk: brand/compliance risk, technical risk, reversibility
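
The scorecard formula is simple enough to run in a spreadsheet, but a short Python sketch shows how it ranks a backlog. The item names and scores below are made up for illustration, and the validation assumes every dimension uses the 1 to 5 scale.

```python
from dataclasses import dataclass


@dataclass
class BacklogItem:
    name: str
    impact: int
    confidence: int
    effort: int
    risk: int

    def score(self) -> float:
        """(Impact x Confidence) / (Effort x Risk), each scored 1-5."""
        for value in (self.impact, self.confidence, self.effort, self.risk):
            if not 1 <= value <= 5:
                raise ValueError("each dimension must be scored 1-5")
        return (self.impact * self.confidence) / (self.effort * self.risk)


# Hypothetical backlog
backlog = [
    BacklogItem("Refresh pricing page", impact=4, confidence=4, effort=2, risk=2),
    BacklogItem("New comparison hub", impact=5, confidence=3, effort=5, risk=2),
    BacklogItem("Fix canonical tags", impact=3, confidence=5, effort=1, risk=1),
]

ranked = sorted(backlog, key=lambda item: item.score(), reverse=True)
for item in ranked:
    print(f"{item.score():5.2f}  {item.name}")
# 15.00  Fix canonical tags
#  4.00  Refresh pricing page
#  1.50  New comparison hub
```

Because effort and risk sit in the denominator, a cheap, low-risk fix can outrank a higher-impact project. That is the trade-off the scorecard is meant to make visible.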

Template 3 — SEO production SOP checklist (research → draft → visuals → publish)

- Research: intent, SERP patterns, internal coverage, SME inputs
- Outline: sections mapped to intent + objections
- Draft: clear claims, sources where needed, unique examples
- On-page: title/H1 alignment, headings, internal links, FAQs if relevant
- Visuals: diagrams, screenshots, tables (with alt text)
- Compliance/brand review: if required
- Publish prep: URL, meta, schema placeholder, canonical check
- Launch: indexing request if applicable, distribution plan
- Post-publish: QA verification + baseline metrics logged

Template 4 — SEO QA checklist (on-page, internal links, schema placeholder, accessibility)

- Search intent match: page answers the job-to-be-done early
- Title/H1: aligned, non-duplicative, readable
- Headings: logical hierarchy, scannable
- Internal links: to relevant cluster/pillar pages, descriptive anchors
- Schema placeholder: plan/type documented if applicable (don’t add blindly)
- Accessibility: alt text, contrast, link text clarity
- Technical basics: canonical, indexability, no accidental noindex, correct status code

Template 5 — KPI dashboard spec (what to track, how often, owners)

- Section A: Ops health (weekly): cycle time, throughput, QA pass rate, blocker time
- Section B: Portfolio outcomes (monthly): rank distribution, CTR by cluster, conversions by priority page set
- Section C: Initiative tracking: shipped items mapped to expected outcomes
- Owners: each KPI has an owner + definition + review meeting
- Latency: how fresh each metric is (so decisions are honest)
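
One way to keep the dashboard spec honest is to store each KPI as a small record and check it for gaps. The Python below is a sketch under that assumption; the `KpiSpec` fields mirror the spec items above, and the sample entries are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class KpiSpec:
    name: str
    definition: str       # written formula or description
    owner: str            # named owner; empty string means nobody owns it
    review_meeting: str   # ritual where the KPI is reviewed
    latency_days: int     # how stale the metric can be when reviewed


def unmanaged(specs):
    """Return names of KPIs missing an owner, a definition, or a review meeting."""
    return [s.name for s in specs if not (s.owner and s.definition and s.review_meeting)]


# Hypothetical dashboard spec
specs = [
    KpiSpec("Cycle time", "Median days from approved brief to published",
            "SEO Ops Lead", "Weekly pipeline review", 1),
    KpiSpec("CTR by cluster", "Clicks / impressions per intent cluster",
            "", "Monthly KPI review", 3),
    KpiSpec("Blocker time", "", "SEO Ops Lead", "Weekly pipeline review", 1),
]

print(unmanaged(specs))  # ['CTR by cluster', 'Blocker time']
```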

Evaluation checklist: how to audit your current SEO operations in 60 minutes

Stack audit (data sources, CMS, workflow tooling, handoffs)

- Do we have a single list of priority URLs with owners and last-updated dates?
- Where does work live (docs, tickets, spreadsheets), and what’s the “system of record”?
- How many handoffs are required to publish? (List them.)
- Is performance data tied to initiatives, or just reported in aggregate?

Workflow audit (cycle time, rework loops, bottlenecks)

- Measure median cycle time for the last 10 shipped items.
- Where does work wait the longest (SME, approvals, dev, QA)?
- What % of items fail QA and need rework?
- Are briefs standardized, or does every writer interpret requirements differently?
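
For the "where does work wait the longest" question, a rough tally per stage is usually enough. The Python sketch below assumes you can reconstruct, for each recent item, how many days it sat idle in each stage; the log format and numbers are hypothetical.

```python
from collections import defaultdict

# Hypothetical wait log: (item, stage where it sat idle, days waiting)
waits = [
    ("post-a", "SME", 4), ("post-a", "approvals", 2),
    ("post-b", "dev", 6), ("post-b", "QA", 1),
    ("post-c", "SME", 5), ("post-c", "approvals", 3),
]


def wait_by_stage(log):
    """Total idle days per stage, largest first."""
    totals = defaultdict(int)
    for _, stage, days in log:
        totals[stage] += days
    return sorted(totals.items(), key=lambda pair: pair[1], reverse=True)


for stage, days in wait_by_stage(waits):
    print(f"{stage:10s} {days} days")
```

With this sample data the SME stage tops the list (9 days), which would make SME availability the first constraint to address.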

Measurement audit (attribution, reporting latency, KPI clarity)

- Can we point to 3 initiatives and show what changed and what results followed?
- Do we track leading indicators weekly?
- Are KPI definitions written down (formula, source, owner, cadence)?
- How long does monthly reporting take?

Implementation roadmap (30/60/90 days)

Days 1–30: standardize intake + KPIs + cadence

- Stand up the intake form and prioritization scorecard
- Define your KPI tree and owners
- Install weekly pipeline + monthly KPI rituals
- Create a priority URL inventory and refresh policy

Days 31–60: unify data + reduce cycle time

- Choose the single source of truth for inventory + pipeline
- Implement WIP limits and QA gates
- Reduce rework by tightening “definition of done”
- Improve handoffs to publishing/engineering with standardized tickets

Days 61–90: automate repeatable steps + scale publishing

- Automate repeatable workflow steps (status, assignments, QA checks)
- Tag initiatives so measurement connects to shipped work
- Expand refresh and internal linking motions for compounding gains
- Document governance for AI-assisted production (policy + review steps)

If you want the fastest path to install this operating model with accountability, consider a 30-day SEO operations pilot to close the operations gap. It’s designed for teams that need execution discipline, clearer KPIs, and a working cadence—without spending a quarter “getting organized.”

When to bring in a system (and what to look for)

Signs you’ve outgrown spreadsheets and ad-hoc processes

- Your pipeline is unclear (or lives in too many places)
- Publishing is bottlenecked by handoffs and approvals
- Reporting takes too long and isn’t trusted
- Quality is inconsistent across writers/vendors
- You can’t reliably answer: “What did we ship, and what did it change?”

Buying criteria: connectivity, workflow automation, measurement to ROI

When evaluating an SEO operations system, prioritize:

- Connectivity: can it reliably pull/push the data you need? (For example, Go/Organic supports connected workflows with WordPress, WooCommerce, and Bing Webmaster Tools; for other tools, confirm availability before assuming.)
- Workflow automation: can it standardize intake → production → QA → publish with governance?
- Measurement that management can use: can it connect operational actions to outcomes and an ROI narrative?
- Governance: roles, permissions, and audit trails that match your risk level

For teams aiming to unify stack + workflow + measurement in one place, see Go/Organic’s SEO Operating System for unifying workflow, publishing, and measurement (review it like you would any operating platform: against your process, your KPIs, and your governance needs).

Next step: close the Operations Gap with a pilot or an operating system

You don’t need more SEO ideas—you need an operating model that ships high-quality work quickly and proves impact. Start by standardizing intake, installing KPI ownership, and running a cadence that surfaces blockers early.

Option A: 30-day pilot to install the operating model

If you want to implement the framework fast (and stop losing weeks to coordination and rework), explore the 30-day SEO operations pilot to close the operations gap.

Option B: adopt an SEO Operating System to unify stack + workflow + measurement

If your main constraint is system-level fragmentation—workflow spread across docs, tickets, and dashboards—consider Go/Organic’s SEO Operating System for unifying workflow, publishing, and measurement as the execution layer to reduce manual overhead and reporting lag.

FAQ

What are the biggest SEO operations changes teams need for 2026?

Teams need tighter governance for AI-assisted production, faster cycle times from idea to publish, and clearer measurement that ties operational inputs (throughput, QA, refreshes) to outcomes (rank/traffic) and business impact (leads/revenue). The operating model matters as much as the strategy.

Which KPIs best measure SEO operations management (not just SEO performance)?

Operational KPIs include cycle time (brief → publish), throughput (items shipped/week), rework rate (QA failures), refresh cadence, and blocker time. Pair these with outcome KPIs (rank distribution, CTR, conversions) so you can see how operational improvements change results.

How do I structure an SEO ops team if I can’t hire a dedicated SEO Ops manager?

Assign SEO ops ownership as a part-time function with explicit decision rights: one person owns intake/prioritization, one owns production SOP/QA, and one owns reporting/KPI hygiene. Use a simple RACI so strategy, execution, and measurement don’t collapse into one role.

What’s the difference between an SEO playbook and an SEO operating system?

A playbook documents how you work (roles, SOPs, KPIs, cadence). An operating system is the execution layer that helps unify data sources, automate repeatable workflow steps, and connect actions to measurable outcomes—reducing manual effort and reporting lag.

How can I audit my SEO operations quickly?

In 60 minutes, map your workflow from intake to publish, list every handoff, and measure where work waits. Then review your reporting: what data sources you rely on, how long reporting takes, and whether KPIs are defined with owners and review cadence. The biggest gaps usually show up as unclear prioritization, repeated QA loops, and delayed measurement.