SEO Operations Automation Strategy (Case Examples)


SEO Operations Automation Strategy: 3 Case Examples + a Repeatable Playbook for Faster Output and Clearer ROI

Most SEO teams don’t have an “ideas problem.” They have an execution problem: too many manual handoffs between data → decisions → content → visuals → publishing → measurement. The result is what we call the Operations Gap: work moves slowly, priorities drift, and ROI becomes hard to defend.

An effective SEO operations automation strategy closes that gap by standardizing the handoffs and automating the repeatable steps—without sacrificing editorial judgment. If you want the broader operating model (roles, RACI, KPI ownership, and team design) that this strategy plugs into, start with the SEO Operations Playbook for teams and KPIs.

Below is a proof-driven strategy with KPI definitions, a “where to automate first” map, three realistic case examples (before → move → after → metrics), and a 30-day rollout plan you can run as a low-risk pilot.

What an SEO operations automation strategy actually is (and what it isn’t)

The villain: the Operations Gap (manual handoffs, disconnected tools, data silos)

The Operations Gap shows up when the work system can’t keep up with the strategy. Common symptoms:

- Manual triage: keyword/content requests live across spreadsheets, docs, Slack threads, and tickets.
- Disconnected stages: research happens in one place, briefs in another, drafts in another, images in another, CMS formatting in another.
- Copy/paste publishing: uploads, internal links, metadata, schema, and formatting are repetitive and error-prone.
- Reporting theater: monthly decks with inconsistent definitions and unclear ownership; decisions lag the data.

The goal: predictable velocity + measurable outcomes (not “more tools”)

An SEO operations automation strategy is not “add automation everywhere.” It’s a system for:

- Predictable velocity: shorter cycle time from idea → publish, and higher throughput per week/month.
- Reduced rework: fewer QA defects, fewer revisions caused by missing requirements, fewer broken handoffs.
- Measurable outcomes: operational gains tied to leading indicators (visibility, content decay prevention) and business proxies (qualified sessions, conversions where available).

The test: if you can’t point to a small KPI set with owners and show before/after movement, you don’t have an automation strategy—you have tool activity.

The KPI set that proves automation is working

Automation only “counts” if it moves a KPI that matters. Use three KPI layers and make each metric owned (one owner, one definition, one cadence).

Velocity metrics (cycle time, time-in-stage, publish throughput)

- Cycle time (idea → publish): median days from accepted request to live URL.
- Time-in-stage: median time spent in Intake, Brief, Draft, Edit, Visuals, QA, Publish.
- Throughput: URLs shipped per week per content lane (e.g., net-new articles vs. refreshes).

How to measure: timestamp each stage change. If you don’t have stage timestamps, start by logging them in your tracker for 2 weeks to build a baseline.
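A minimal Python sketch of the two velocity calculations, assuming your tracker can export stage changes as (item ID, stage entered, date) rows and that entering “Publish” means the URL is live. The log layout, function names, and sample rows are hypothetical placeholders, not the output of any specific tool.

```python
from collections import defaultdict
from datetime import date
from statistics import median

# Hypothetical stage-change log exported from a tracker: (item_id, stage entered, date entered).
STAGE_LOG = [
    ("A-1", "Intake", date(2024, 5, 1)),
    ("A-1", "Draft", date(2024, 5, 3)),
    ("A-1", "Publish", date(2024, 5, 10)),
    ("B-2", "Intake", date(2024, 5, 2)),
    ("B-2", "Draft", date(2024, 5, 6)),
    ("B-2", "Publish", date(2024, 5, 9)),
    ("C-3", "Intake", date(2024, 5, 4)),
    ("C-3", "Draft", date(2024, 5, 5)),  # still in progress, so excluded from cycle time
]


def events_by_item(log):
    """Group log rows per item, ordered by the date each stage was entered."""
    items = defaultdict(list)
    for item_id, stage, entered in log:
        items[item_id].append((stage, entered))
    for events in items.values():
        events.sort(key=lambda event: event[1])
    return items


def median_cycle_time(log, live_stage="Publish"):
    """Median days from an item's first logged stage to live_stage (published items only)."""
    durations = []
    for events in events_by_item(log).values():
        live_dates = [entered for stage, entered in events if stage == live_stage]
        if live_dates:
            durations.append((live_dates[0] - events[0][1]).days)
    return median(durations) if durations else None


def median_time_in_stage(log):
    """Median days spent in each stage, measured until the item entered its next stage."""
    spans = defaultdict(list)
    for events in events_by_item(log).values():
        for (stage, start), (_, end) in zip(events, events[1:]):
            spans[stage].append((end - start).days)
    return {stage: median(days) for stage, days in spans.items()}


print(median_cycle_time(STAGE_LOG))     # 8.0
print(median_time_in_stage(STAGE_LOG))  # {'Intake': 2, 'Draft': 5.0}
```

The final stage an item reached has no end date yet, so it is left out of time-in-stage until the next change is logged.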

Quality metrics (rework rate, QA defects, content decay signals)

- Rework rate: % of items that move backward a stage (e.g., Edit → Draft) or require a second revision cycle.
- QA defects per URL: missing metadata, broken links, formatting issues, missing image alt text, and schema problems, tracked by count and severity.
- Content decay signals: pages with declining impressions/clicks over a defined window; each flagged page feeds a refresh queue.

How to measure: add a QA checklist with pass/fail items and log defects; for decay, use consistent time windows and page cohorts (e.g., top 100 non-brand landing pages).
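One way to compute rework rate and decay flags, sketched in Python under stated assumptions: the stage order mirrors the stages listed under time-in-stage, clicks per URL come from two equal-length windows in whatever source you already report from, and the 20% drop threshold is a hypothetical starting point to tune against your own cohorts.

```python
# Stage order used to detect backward moves; adjust to your own stage definitions.
STAGE_ORDER = ["Intake", "Brief", "Draft", "Edit", "Visuals", "QA", "Publish"]
STAGE_RANK = {stage: rank for rank, stage in enumerate(STAGE_ORDER)}


def rework_rate(stage_histories):
    """Share of items whose stage history ever moves backward (e.g., Edit -> Draft)."""
    if not stage_histories:
        return 0.0
    reworked = 0
    for stages in stage_histories.values():
        ranks = [STAGE_RANK[stage] for stage in stages]
        if any(later < earlier for earlier, later in zip(ranks, ranks[1:])):
            reworked += 1
    return reworked / len(stage_histories)


def decay_flags(clicks_previous, clicks_current, min_drop=0.20):
    """URLs in the cohort whose clicks fell by at least min_drop between two equal windows."""
    flagged = []
    for url, before in clicks_previous.items():
        after = clicks_current.get(url, 0)  # a URL with no data in the current window counts as 0
        if before > 0 and (before - after) / before >= min_drop:
            flagged.append(url)
    return sorted(flagged)


histories = {
    "A": ["Intake", "Draft", "Edit", "Draft", "Edit", "QA", "Publish"],  # Edit -> Draft
    "B": ["Intake", "Brief", "Draft", "Edit", "QA", "Publish"],
    "C": ["Intake", "Draft", "Edit"],
    "D": ["Intake", "Draft", "Edit", "QA", "Edit", "QA"],  # QA -> Edit
}
print(rework_rate(histories))  # 0.5

previous = {"/pricing-guide": 1000, "/seo-checklist": 500, "/old-webinar": 200}
current = {"/pricing-guide": 950, "/seo-checklist": 300}
print(decay_flags(previous, current))  # ['/old-webinar', '/seo-checklist']
```

The flagged list is what would feed the refresh queue; keeping the cohort fixed (for example, the same top 100 non-brand pages) keeps week-over-week comparisons honest.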

Business metrics (pipeline/revenue proxy, ROI visibility, opportunity cost)

- Qualified sessions / engaged sessions: pick one definition and keep it consistent.
- Conversion proxy: leads, signups, add-to-carts, or downstream events where tracked.
- ROI visibility: % of shipped work tied to a target KPI and a measurable hypothesis (e.g., “refresh decayed page → recover impressions”).
- Opportunity cost: hours saved in production/reporting translated into capacity (e.g., “+4 refreshes/week”).

How to measure: attach each work item to one primary KPI and one expected leading indicator; review weekly rather than waiting for monthly rollups.
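A hedged sketch of the two business-layer ratios. The field names (`shipped`, `primary_kpi`, `hypothesis`) and the 3.5-hours-per-refresh figure are illustrative assumptions; the point is that both metrics reduce to simple counts once every work item carries a KPI and a hypothesis.

```python
def roi_visibility(work_items):
    """Share of shipped items that carry both a primary KPI and a measurable hypothesis."""
    shipped = [item for item in work_items if item.get("shipped")]
    if not shipped:
        return 0.0
    tied = [item for item in shipped if item.get("primary_kpi") and item.get("hypothesis")]
    return len(tied) / len(shipped)


def capacity_gain(hours_saved_per_week, hours_per_unit):
    """Translate hours saved into whole extra units of work per week (rounded down)."""
    return int(hours_saved_per_week // hours_per_unit)


# Hypothetical work items; only shipped ones count toward ROI visibility.
items = [
    {"shipped": True, "primary_kpi": "decay recovery", "hypothesis": "refresh -> recover impressions"},
    {"shipped": True, "primary_kpi": "visibility", "hypothesis": "new guide -> rank for target query"},
    {"shipped": True, "primary_kpi": "decay recovery", "hypothesis": "merge duplicates -> recover clicks"},
    {"shipped": True, "primary_kpi": "conversion proxy", "hypothesis": ""},  # no hypothesis yet
    {"shipped": False, "primary_kpi": "visibility", "hypothesis": "draft in progress"},
]
print(roi_visibility(items))   # 0.75
print(capacity_gain(14, 3.5))  # 4 (extra refreshes/week if one refresh takes ~3.5 hours)
```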

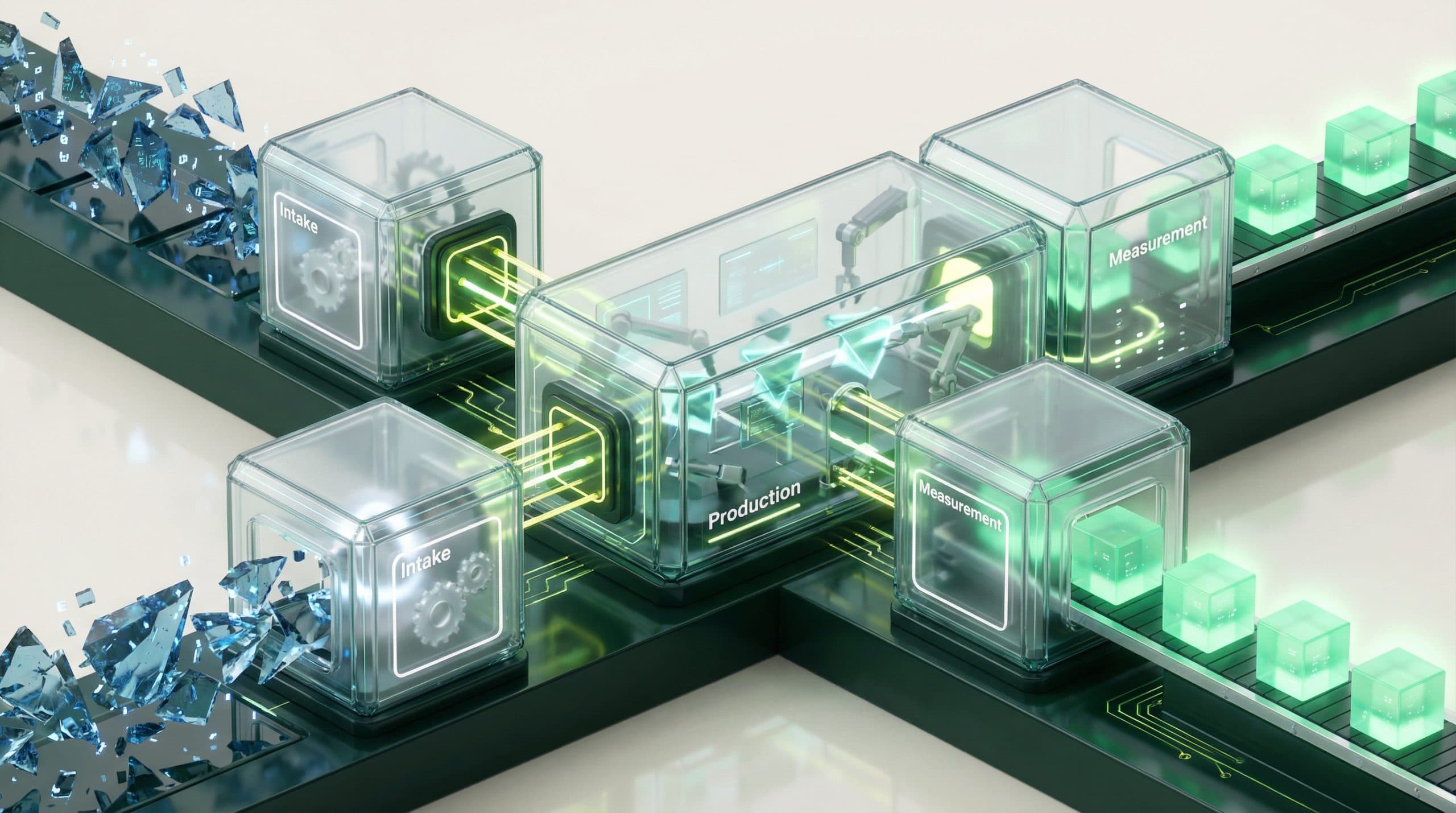

The automation map: where to automate first (highest leverage handoffs)

Don’t start by automating everything. Start with the handoffs that cause the most delay and rework. In most SEO teams, those are: (1) intake/prioritization, (2) production and publishing steps, and (3) measurement/reporting.

Unify your stack into a single source of truth (CMS + data sources)

Automation breaks when the system doesn’t know what’s true. Create one operational spine where each URL/work item has:

- Owner (single accountable person)
- Stage (and timestamps)
- Primary KPI (what success means)
- Inputs (data, brief, target query, internal link targets)
- Outputs (published URL, change log, QA outcome)

If your CMS and data sources aren’t connected, you’ll keep paying the copy/paste tax. Prioritize connectivity that reduces manual extraction and manual publishing steps.
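The operational spine can be as small as one record per work item. This Python dataclass is a hypothetical sketch of the fields listed above (the `WorkItem` name and `move_to` helper are illustrative, not part of any product); appending a timestamp on every stage change is what makes cycle time and time-in-stage computable later.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Optional, Tuple


@dataclass
class WorkItem:
    """One row in the operational spine: a URL or work item with its owner and KPI."""
    item_id: str
    owner: str                                   # single accountable person
    primary_kpi: str                             # what success means for this item
    inputs: dict = field(default_factory=dict)   # e.g., target query, brief link, link targets
    stage_history: List[Tuple[str, datetime]] = field(default_factory=list)
    published_url: Optional[str] = None          # output: live URL once published
    change_log: List[str] = field(default_factory=list)
    qa_passed: Optional[bool] = None             # output: QA outcome

    @property
    def stage(self) -> Optional[str]:
        """Current stage is simply the most recent stage change."""
        return self.stage_history[-1][0] if self.stage_history else None

    def move_to(self, stage: str, when: Optional[datetime] = None) -> None:
        """Record a timestamped stage change so time-in-stage can be computed later."""
        when = when if when is not None else datetime.now()
        self.stage_history.append((stage, when))
        self.change_log.append(f"{when:%Y-%m-%d} moved to {stage}")


item = WorkItem("R-7", owner="Priya", primary_kpi="decay recovery",
                inputs={"target_query": "seo operations automation"})
item.move_to("Intake", datetime(2024, 5, 1))
item.move_to("Brief", datetime(2024, 5, 2))
print(item.stage)       # Brief
print(item.change_log)  # ['2024-05-01 moved to Intake', '2024-05-02 moved to Brief']
```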

Automate workflow from idea → draft → visuals → publish

The production chain is where velocity is usually lost:

- Briefs created from inconsistent templates
- Drafting done in one place, then reformatted in another
- Visuals sourced and resized manually
- Publishing requires repeated field entry, internal links, and QA checks

Automate the repeatable parts (templated structure, formatting, visual generation/handling, and publishing steps) while keeping human QA gates for differentiation, accuracy, and brand voice.

Measure what matters with a unified dashboard (ops actions → outcomes)

You don’t need more dashboards. You need one view that connects:

- Operational actions (what shipped, what changed, how long it took)
- Leading indicators (impressions, clicks, decay recovery signals)
- Business proxies (qualified sessions, conversions where available)

This is where ROI stops being a debate and becomes a recurring, inspectable system.

Case example #1 — From spreadsheet triage to automated opportunity intake

Before: keyword/content requests scattered across docs + Slack

A Head of SEO inherits a backlog: dozens of “we should write about X” notes, half of them owned by nobody. Requests arrive through Slack, email, and meetings. The same keyword gets pitched twice. Priorities change weekly. The team feels busy, but output is uneven.

Automation move: standardized intake + auto-prioritization inputs

Build a single intake flow (form or standardized request template) that captures:

- Target topic/query and page type (net-new vs. refresh)
- Audience + intent + funnel stage
- Primary KPI (visibility, decay recovery, conversion proxy)
- Dependencies (SME review, dev, design)
- Required fields (so items aren’t missing basics)

Then add prioritization inputs that update automatically where possible (e.g., opportunity score placeholders, decay flags, content inventory status). Even if scoring starts simple, consistency beats complexity.
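A minimal sketch of the intake flow: reject requests that are missing required fields, then rank the rest with a deliberately simple additive score. The field names, weights, and `opportunity_score` placeholder are assumptions for illustration; in practice the decay flag and opportunity inputs would be refreshed from your own data sources, and the ranking still goes to human review in weekly triage.

```python
REQUIRED_FIELDS = ("target_query", "page_type", "audience", "intent", "funnel_stage", "primary_kpi")

# Hypothetical starting weights; keep them simple and revisit them in weekly triage.
WEIGHTS = {"opportunity": 0.5, "decay_flag": 0.3, "no_dependencies": 0.2}


def missing_fields(request):
    """Required intake fields that are absent or empty."""
    return [name for name in REQUIRED_FIELDS if not request.get(name)]


def priority_score(request, weights=WEIGHTS):
    """Additive score in [0, 1] built from a few consistently defined inputs."""
    signals = {
        "opportunity": request.get("opportunity_score", 0.0),  # placeholder score, 0..1
        "decay_flag": 1.0 if request.get("decay_flag") else 0.0,
        "no_dependencies": 0.0 if request.get("dependencies") else 1.0,
    }
    return round(sum(weights[key] * signals[key] for key in weights), 3)


def triage(requests):
    """Split requests into (ranked complete items, items returned for missing fields)."""
    ready = [r for r in requests if not missing_fields(r)]
    incomplete = [r for r in requests if missing_fields(r)]
    ready.sort(key=priority_score, reverse=True)
    return ready, incomplete


base = {"page_type": "refresh", "audience": "SEO leads", "intent": "informational",
        "funnel_stage": "mid", "primary_kpi": "decay recovery"}
intake_requests = [
    {**base, "target_query": "content decay", "opportunity_score": 0.6,
     "decay_flag": True, "dependencies": ["SME review"]},
    {**base, "target_query": "seo ops kpis", "opportunity_score": 0.9},
    {**base, "target_query": "", "opportunity_score": 1.0},  # missing target query
]
ready, incomplete = triage(intake_requests)
print([r["target_query"] for r in ready])  # ['seo ops kpis', 'content decay']
print(missing_fields(incomplete[0]))        # ['target_query']
```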

Data to show: time-to-decision, backlog age, % work tied to a KPI

- Time-to-decision: reduce from X days to Y days by enforcing required fields + weekly triage cadence.
- Backlog age: reduce median “days sitting unassigned.”
- % of work tied to a KPI: increase from X% to Y% (every item has one primary KPI owner).

Owner suggestion: SEO Ops Lead owns intake stage definitions; Head of SEO owns prioritization rules and weekly decision cadence.
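Both case-example metrics are medians over dates you already have once intake is standardized. A hypothetical sketch, assuming each request records a `submitted` date and, once triaged, a `decided` date; the sample dates are placeholders.

```python
from datetime import date
from statistics import median


def median_time_to_decision(requests):
    """Median days from submission to a triage decision; undecided requests are skipped."""
    gaps = [(r["decided"] - r["submitted"]).days for r in requests if r.get("decided")]
    return median(gaps) if gaps else None


def median_backlog_age(unassigned_since, today):
    """Median days that currently unassigned items have been waiting."""
    ages = [(today - since).days for since in unassigned_since]
    return median(ages) if ages else None


decisions = [
    {"submitted": date(2024, 5, 1), "decided": date(2024, 5, 4)},
    {"submitted": date(2024, 5, 2), "decided": date(2024, 5, 9)},
    {"submitted": date(2024, 5, 6), "decided": None},  # still waiting for triage
    {"submitted": date(2024, 5, 7), "decided": date(2024, 5, 8)},
]
print(median_time_to_decision(decisions))  # 3
print(median_backlog_age([date(2024, 5, 10), date(2024, 5, 15), date(2024, 4, 30)],
                         today=date(2024, 5, 20)))  # 10
```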

Case example #2 — From multi-day production to “minutes-to-publish” velocity

Before: manual drafting, image sourcing, formatting, and CMS upload

A single article touches 5–8 tools. Drafts are written in docs, copy/pasted into the CMS, headings get restructured, internal links are added late, visuals are requested or sourced, and QA happens under deadline. Publishing day becomes a scramble.

Automation move: content generation + visual ops + 1-click publishing

Pick one content lane (e.g., refreshes, or net-new long-form) and standardize it end-to-end:

- Content Engine: generate structured drafts from a consistent brief template (humans still own differentiation and final editorial decisions).
- Visual Operations Suite: generate or manage repeatable visual needs (e.g., featured images, in-article visuals) with consistent sizing and naming rules.
- Publishing Engine: push approved content into the CMS with fewer manual steps, supported by a QA gate.
- Connectivity Suite: reduce manual data pull/push where you can by connecting the system to your publishing and measurement environment (connectivity depends on your stack).
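“Consistent sizing and naming rules” can be encoded as a single function so every visual lands in the CMS with a predictable name. The sizes and the `<slug>-<role>-<nn>-<width>x<height>` pattern below are hypothetical house rules, not platform requirements.

```python
import re

# Hypothetical house rules: one fixed size per visual role.
VISUAL_SIZES = {"featured": (1200, 630), "inline": (800, 450)}


def visual_filename(title, role, index=1, ext="webp"):
    """Build a predictable file name: <slug>-<role>-<nn>-<width>x<height>.<ext>."""
    width, height = VISUAL_SIZES[role]  # raises KeyError for roles without a size rule
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")
    return f"{slug}-{role}-{index:02d}-{width}x{height}.{ext}"


print(visual_filename("SEO Ops: Automation Guide", "featured"))
# seo-ops-automation-guide-featured-01-1200x630.webp
print(visual_filename("SEO Ops: Automation Guide", "inline", index=3))
# seo-ops-automation-guide-inline-03-800x450.webp
```

Failing loudly on an unknown role is intentional: a new visual type should get an explicit size rule before it enters the pipeline.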

When teams describe “minutes-to-publish,” they usually mean the publishing step goes from hours to minutes because formatting, visual placement, and CMS entry aren’t manual anymore—and because QA is a checklist-driven gate, not a last-minute scramble.

If your goal is to unify these handoffs (data, content, visuals, publishing) into an operational spine, this is where an operating system approach helps. For example, the Go/Organic SEO Operating System is designed around those pillars—connectivity, content, visuals, publishing—so you can reduce manual handoffs without turning SEO into a “tool sprawl” problem.

Quality guardrail: Automate repeatable steps, not judgment. Keep human gates for factual accuracy, brand voice, differentiation, and on-page standards. Track rework rate to prove quality is holding while velocity improves.
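The automated half of a checklist-driven QA gate might look like this sketch: each check is a named predicate with a severity, failures are logged as defects, and any blocking-severity failure stops publishing. The check names and the 1 to 3 severity scale are assumptions; judgment calls (accuracy, brand voice, differentiation) deliberately stay with human reviewers.

```python
# Hypothetical automated checks: (name, severity, predicate). Severity 3 blocks publishing.
# Human gates for accuracy, brand voice, and differentiation stay outside this list.
CHECKS = [
    ("h1_present", 3, lambda page: bool(page.get("h1"))),
    ("no_broken_links", 3, lambda page: not page.get("broken_links")),
    ("meta_description", 2, lambda page: bool(page.get("meta_description"))),
    ("image_alt_text", 2, lambda page: all(img.get("alt") for img in page.get("images", []))),
]


def qa_gate(page, checks=CHECKS, blocking_severity=3):
    """Return (can_publish, defects, severity_weighted_score) for one URL."""
    defects = [(name, severity) for name, severity, passes in checks if not passes(page)]
    weighted = sum(severity for _, severity in defects)
    can_publish = all(severity < blocking_severity for _, severity in defects)
    return can_publish, defects, weighted


page = {
    "h1": "SEO Operations Automation Strategy",
    "meta_description": "",
    "images": [{"alt": "cycle time chart"}, {"alt": ""}],
    "broken_links": [],
}
print(qa_gate(page))
# (True, [('meta_description', 2), ('image_alt_text', 2)], 4)
```

Logging the defect list per URL is what feeds the “QA defects per URL” metric, and the weighted score gives a severity-weighted variant without extra bookkeeping.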

Data to show: cycle time reduction, throughput increase, QA defect rate

- Cycle time: reduce idea → publish by X–Y% for the pilot lane.
- Throughput: increase weekly URLs shipped by X–Y% without increasing headcount.
- QA defects: reduce defects per URL (or severity-weighted defects) by X–Y% via checklist gates + standardized publishing.
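To report the pilot-lane deltas, compare the baseline and pilot medians as percent change. The numbers below are hypothetical placeholders standing in for your own X and Y; note that a negative change is the improvement for cycle time and defects.

```python
def pct_change(baseline, pilot):
    """Percent change from baseline to pilot, rounded to one decimal place."""
    return round((pilot - baseline) / baseline * 100, 1)


# Hypothetical placeholder numbers; replace with your own baseline and pilot-lane medians.
baseline = {"cycle_time_days": 20, "urls_per_week": 5, "defects_per_url": 2.5}
pilot = {"cycle_time_days": 14, "urls_per_week": 7, "defects_per_url": 1.5}

for metric in baseline:
    print(metric, pct_change(baseline[metric], pilot[metric]))
# cycle_time_days -30.0
# urls_per_week 40.0
# defects_per_url -40.0
```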

See how the SEO Operating System unifies data, content, and publishing

Case example #3 — From reporting theater to ROI-connected operations

Before: monthly reporting, inconsistent definitions, unclear attribution

The team spends days building a deck. Definitions vary (what counts as “published,” what counts as “optimized,” what time window matters). Attribution debates dominate. By the time the report is done, the insights are stale.

Automation move: unified dashboard that connects ops actions to outcomes

Replace “deck building” with a weekly operating cadence and a dashboard that answers:

- What shipped? (URLs, lane, owner, timestamps, change type)
- How did operations perform? (cycle time, time-in-stage, defects, rework)
- What moved? (leading indicators like impressions/clicks; decay recovery cohorts)
- What’s next? (backlog health, bottlenecks, owner-level commitments)

This turns reporting into an inspection system—where measurement is a byproduct of execution, not a separate monthly project.
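A sketch of how one weekly dashboard row could be assembled from work-item records, assuming each shipped item carries its URL, lane, cycle time in days, and defect count, and that clicks per URL are available for two equal windows. The schema and sample values are hypothetical; the “What’s next?” questions are left to the review meeting itself.

```python
from collections import Counter
from statistics import median


def weekly_rollup(shipped, clicks_before, clicks_after):
    """One dashboard row: what shipped, how operations performed, and what moved."""
    if not shipped:
        return {"shipped": 0, "by_lane": {}, "median_cycle_days": None,
                "defects_per_url": 0.0, "cohort_click_delta": 0}
    urls = [item["url"] for item in shipped]
    return {
        "shipped": len(shipped),
        "by_lane": dict(Counter(item["lane"] for item in shipped)),
        "median_cycle_days": median([item["cycle_days"] for item in shipped]),
        "defects_per_url": sum(item["defects"] for item in shipped) / len(shipped),
        # Leading indicator: click change for the shipped cohort between two equal windows.
        "cohort_click_delta": sum(clicks_after.get(u, 0) for u in urls)
        - sum(clicks_before.get(u, 0) for u in urls),
    }


shipped_this_week = [
    {"url": "/a", "lane": "refresh", "cycle_days": 6, "defects": 1},
    {"url": "/b", "lane": "refresh", "cycle_days": 9, "defects": 0},
    {"url": "/c", "lane": "net-new", "cycle_days": 12, "defects": 2},
]
row = weekly_rollup(shipped_this_week,
                    clicks_before={"/a": 100, "/b": 50},
                    clicks_after={"/a": 130, "/b": 45, "/c": 20})
print(row)
```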

Data to show: reporting hours saved, decision cadence, KPI ownership clarity

- Reporting hours saved: reduce from X hours/month to Y hours/month by instrumenting stages and standardizing definitions.
- Decision cadence: move from monthly to weekly decisions on backlog, refresh queue, and blockers.
- KPI ownership clarity: every KPI has a single owner and a weekly review ritual.

The 30-day rollout plan (low-risk, proof-first)

This is a pilot designed to prove operational lift quickly. You’re not trying to “transform everything” in 30 days—you’re trying to baseline → automate a lane → show measurable movement.

Week 1: map stages + define KPIs + baseline current cycle time

- Define your stages (Intake, Prioritize, Brief, Draft, Edit, Visuals, QA, Publish, Measure).
- Assign an owner per stage and per KPI.
- Baseline: median cycle time, time-in-stage, throughput, QA defects, rework rate.

Deliverable: one page of definitions + a baseline snapshot.

Week 2: connect CMS + available data sources; standardize intake

- Implement a single intake template (required fields, KPI linkage, dependencies).
- Connect what you can so work items and URLs aren’t tracked manually.
- Create a basic prioritization rule (even if it’s simple scoring + human review).

Deliverable: a clean backlog with KPI tags and stage visibility.

Week 3: automate content + visuals + publishing for one content lane

- Select one lane: e.g., “refresh decayed pages” or “net-new product education articles.”
- Standardize the brief and on-page requirements.
- Automate the repeatable production steps (draft structure, formatting rules, visuals workflow, publishing workflow).
- Add QA gates and log defects.

Deliverable: first set of URLs shipped through the automated lane with QA logged.

Week 4: dashboard + review cadence; document SOPs and ownership

- Launch a unified view that shows ops metrics + leading indicators.
- Establish a weekly 30-minute operating review: bottlenecks, commitments, and KPI deltas.
- Document SOPs: stage definitions, checklists, “definition of done.”

Deliverable: a repeatable operating cadence and a clear before/after story.

Baseline + target examples (use your numbers):

- Cycle time: baseline X days → target X–Y% reduction
- Throughput: baseline X URLs/week → target +X–Y%
- QA defects: baseline X per URL → target -X–Y%
- Reporting time: baseline X hrs/month → target -X–Y%
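Turning a baseline and an X–Y% range into concrete targets is simple arithmetic. This hypothetical helper returns a conservative and a stretch value so the weekly review can check progress against both; the baselines and percentages in the example are placeholders for your own numbers.

```python
def target_range(baseline, low_pct, high_pct, direction):
    """Conservative and stretch targets for an X-Y% change; direction -1 = reduce, +1 = increase."""
    conservative = baseline * (1 + direction * low_pct / 100)
    stretch = baseline * (1 + direction * high_pct / 100)
    return round(conservative, 2), round(stretch, 2)


# Hypothetical placeholder baselines and ranges.
print(target_range(20, 20, 30, -1))   # cycle time days: (16.0, 14.0)
print(target_range(5, 20, 40, +1))    # URLs/week: (6.0, 7.0)
print(target_range(2.0, 25, 50, -1))  # defects per URL: (1.5, 1.0)
print(target_range(24, 25, 50, -1))   # reporting hrs/month: (18.0, 12.0)
```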

Book a 30-day pilot to prove cycle-time and throughput gains

If you want guided implementation with measurable outcomes (baseline, lane automation, KPI dashboard, and SOPs), a structured 30-day SEO operations automation pilot is often the fastest way to prove impact internally—without committing to a massive replatform or a long transformation program.

Common failure modes (and how to avoid them)

Automating chaos (no definitions, no owners, no QA gates)

If stages aren’t defined and no one owns each step, automation just accelerates confusion. Fix it by:

- Writing a “definition of done” for each stage
- Assigning one owner per stage
- Adding QA gates (brief quality, factual checks, on-page standards, brand voice)

Measuring the wrong thing (output without outcomes)

Throughput alone can create busywork. Avoid this by:

- Requiring every work item to have one primary KPI
- Tracking both ops metrics (cycle time, defects) and leading indicators (impressions/clicks trends, decay recovery cohorts)
- Reviewing weekly and adjusting the lane rules

Tool sprawl (more logins, less clarity)

Automation should reduce interfaces, not add them. Avoid this by:

- Choosing a single system of record for stages, ownership, and KPI linkage
- Connecting the CMS and available data sources to reduce copy/paste work
- Standardizing templates so the process is teachable and auditable

What to do next: choose your pilot scope and prove impact

Pick a scope you can measure in 30 days:

- Lane A (recommended): refresh decayed pages (clear before/after cohorts, faster feedback loops)
- Lane B: net-new long-form articles (best if you have stable editorial capacity)
- Lane C: programmatic publishing or templated pages (only if your QA and governance are strong)

Then run the proof loop:

- Baseline cycle time, throughput, defects, and reporting hours.
- Automate the highest-friction handoffs.
- Ship through one lane with QA gates.
- Review weekly, document SOPs, and scale what works.

FAQ

What should we automate first in SEO operations?

Start with the handoffs that create the most delay and rework: (1) opportunity intake/prioritization, (2) content production steps that repeat every time (drafting, formatting, visuals), and (3) publishing/QA. Automate only after you define stage owners and a small KPI set (cycle time, throughput, defect rate).

How do we prove an SEO operations automation strategy is working?

Use before/after baselines. Track cycle time (idea → publish), time-in-stage, weekly publish throughput, QA defects/rework rate, and reporting hours saved. Then connect those operational gains to outcomes (rankings/traffic leading indicators and business proxies like qualified sessions or conversions where available).

Will automation hurt content quality or make us sound generic?

It can if you automate without guardrails. Add QA gates (brief quality, factual checks, on-page standards, brand voice review) and measure rework rate. The goal is to automate repeatable steps so humans spend more time on strategy, differentiation, and editorial judgment.

Do we need to replace our current tools to automate SEO operations?

Not necessarily. The priority is reducing tool sprawl and creating a single source of truth for decisions and reporting. If your current stack can’t connect cleanly or forces manual copy/paste between stages, that’s when an operating system approach becomes valuable.

What’s a realistic timeline to see results from SEO ops automation?

Operational results can show up in weeks (faster cycle time, more throughput, fewer defects). SEO outcome signals take longer, but the strategy is to prove operational lift first, then compound it into consistent publishing and better measurement over 60–90 days.
