SEO Operations ROI Metrics Framework + Template


SEO Operations ROI Metrics: A Practical Framework + Reporting Template for Proving Impact

“SEO ROI” usually gets framed as rankings, traffic, and revenue. But if you lead SEO in a growing org, you know the uncomfortable truth: the biggest wins often come from better operations—faster publishing, fewer handoffs, clearer ownership, and reporting that leadership trusts.

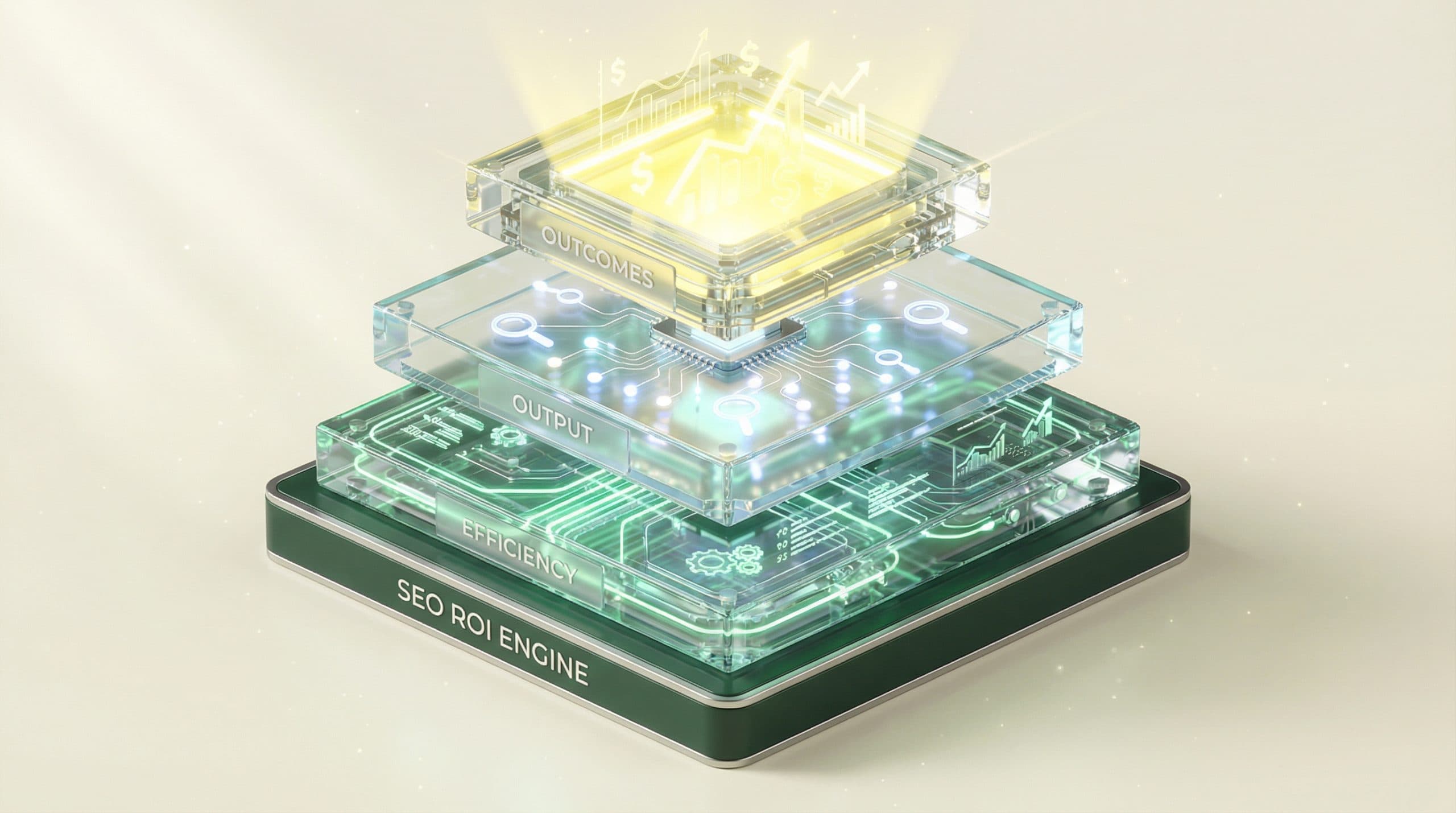

This guide gives you a practical, operations-first way to measure and report SEO operations ROI metrics using a 3-layer KPI framework (Efficiency → Output → Outcomes), plus a copy/paste reporting template you can implement in under an hour.

If you want the broader system—roles, workflows, and KPI ownership—use the SEO Operations Playbook for teams and KPI ownership as your hub and reference point.

What “SEO operations ROI” actually means (and why most teams can’t prove it)

SEO operations ROI is the measurable return you get from improving the system that produces SEO work: planning, production, QA, publishing, technical fixes, measurement, and continuous improvement.

Most teams struggle to prove it because they try to jump straight from “we shipped X pages” to “we made $Y.” In reality, SEO impact flows through leading indicators (coverage, indexation, CTR, non-brand visibility), and those indicators are heavily influenced by operational reliability.

The Operations Gap: disconnected tools, manual processes, and data silos

The Operations Gap shows up when:

- Work is tracked in one place, content lives in another, and reporting lives in a third.
- Cycle times are unknown (or vary wildly), so forecasting is guesswork.
- Quality checks happen inconsistently, causing rework and indexation issues.
- Dashboards emphasize outcomes without explaining the operational drivers.

When this gap exists, leadership doesn’t just question results—they question the repeatability of results.

ROI isn’t just rankings—operations ROI includes cost, speed, and reliability

Operations ROI includes:

- Cost savings (hours reduced, fewer reworks, fewer emergencies)
- Speed (shorter time-to-publish; faster fixes)
- Reliability (consistent output and measurement you can defend)
- Better outcomes driven by improved throughput and quality

The SEO Operations ROI Metrics Framework (3 layers)

Use three layers so you can answer executive questions without overclaiming attribution:

- Layer 1: Operational Efficiency KPIs (inputs you control)
- Layer 2: SEO Output KPIs (leading indicators)
- Layer 3: Business Outcome KPIs (what leadership funds)

The key: tie each layer to an owner and a cadence so your reporting becomes a management system—not a monthly scramble.

Layer 1 — Operational Efficiency KPIs (inputs you control)

These metrics prove whether your SEO engine is getting faster, cheaper, and more predictable:

- Cycle time (brief approved → published)
- Throughput (publish volume)
- Rework rate (quality + alignment)
- Cost per published page (internal + external)

Layer 2 — SEO Output KPIs (leading indicators of impact)

These metrics show whether your operation is producing pages that can actually compete and get discovered:

- Indexation rate
- Content coverage vs. plan (topics/queries mapped)
- Non-brand impressions and clicks
- CTR lift on priority queries/pages

Layer 3 — Business Outcome KPIs (what leadership funds)

These are the metrics that earn continued investment:

- Organic revenue (ecommerce) or organic pipeline influenced (B2B)
- Assisted conversions where organic was part of the journey
- CAC/LTV context (not always controllable by SEO, but critical for exec narrative)
- Documented cost savings (from Layer 1)

KPI definitions + formulas (use these in your dashboard)

Below are defensible definitions you can standardize across teams. If you already track variants, keep the ones that match your internal finance and analytics conventions—consistency matters more than perfection.

Efficiency metrics (time-to-publish, cost per article, rework rate, throughput)

- Time-to-publish (cycle time) = median days from “Brief approved” → “Published”
- Throughput = # of pages published (by type) per week/month
- Rework rate = (# of items sent back for revision ÷ # of items reviewed) × 100
- Cost per published page = (content labor cost + editing + design + dev + tools allocation) ÷ # pages published
- On-time delivery rate = (# published by target date ÷ # planned) × 100

Why these matter: They let you quantify operational improvements even when traffic lags (which it often does in SEO).
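As a rough illustration, the efficiency formulas above can be computed from a simple export of work items. This is a minimal sketch, not a prescribed implementation: the field names (`brief_approved`, `published`, `target`, `sent_back`), the sample dates, and the cost figure are all hypothetical, and it assumes every item in the list was both planned and reviewed.

```python
from datetime import date
from statistics import median

# Hypothetical work items; field names and values are assumptions for illustration.
ITEMS = [
    {"brief_approved": date(2024, 3, 1), "published": date(2024, 3, 11),
     "target": date(2024, 3, 12), "sent_back": False},
    {"brief_approved": date(2024, 3, 4), "published": date(2024, 3, 18),
     "target": date(2024, 3, 15), "sent_back": True},
    {"brief_approved": date(2024, 3, 5), "published": date(2024, 3, 13),
     "target": date(2024, 3, 14), "sent_back": False},
    {"brief_approved": date(2024, 3, 8), "published": None,
     "target": date(2024, 3, 20), "sent_back": True},
]


def published(items):
    """Items that have actually gone live."""
    return [i for i in items if i["published"] is not None]


def cycle_time_days(items):
    """Median days from 'Brief approved' to 'Published' (published items only)."""
    return median([(i["published"] - i["brief_approved"]).days for i in published(items)])


def throughput(items):
    """Number of pages published in the period covered by `items`."""
    return len(published(items))


def rework_rate(items):
    """(# sent back for revision / # reviewed) x 100; assumes all items were reviewed."""
    return 100 * sum(1 for i in items if i["sent_back"]) / len(items)


def on_time_rate(items):
    """(# published by target date / # planned) x 100; assumes all items were planned."""
    on_time = sum(1 for i in published(items) if i["published"] <= i["target"])
    return 100 * on_time / len(items)


def cost_per_page(total_cost, pages_published):
    """Total production cost (labor + editing + design + dev + tools) per published page."""
    return total_cost / pages_published
```

Using the median rather than the mean keeps one stalled page from distorting the cycle-time number, which matches the definition above.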

SEO output metrics (indexation rate, content coverage, CTR lift, non-brand impressions)

- Indexation rate = (# target pages indexed ÷ # target pages published) × 100
- Content coverage = (# mapped queries with a live, optimized page ÷ # mapped queries in plan) × 100
- Non-brand impressions = total impressions excluding branded queries (use your defined brand filter)
- CTR lift = (current CTR − baseline CTR) on the same query set, measured over time windows of equal length
- Share of voice (simple) = % of priority queries where you rank in top X (e.g., top 10 or top 3)

Why these matter: They connect operational throughput and quality to search demand capture.
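The output formulas above are simple ratios, so a short sketch is enough to standardize them. The example below assumes query-level rows with `query`, `impressions`, and `clicks` fields (hypothetical names), and uses a made-up brand term, `acme`, as the brand filter; swap in your own definition.

```python
import re

# Hypothetical brand filter; replace "acme" with your own brand terms.
BRAND_PATTERN = re.compile(r"\bacme\b", re.IGNORECASE)

# Hypothetical query-level rows for one reporting window.
ROWS = [
    {"query": "acme pricing", "impressions": 500, "clicks": 100},
    {"query": "seo reporting template", "impressions": 1000, "clicks": 30},
    {"query": "seo roi metrics", "impressions": 1000, "clicks": 50},
]


def rate(part, whole):
    """Generic percentage, used for indexation rate and content coverage."""
    return 100 * part / whole if whole else 0.0


def non_brand(rows):
    """Rows whose query does not match the brand filter."""
    return [r for r in rows if not BRAND_PATTERN.search(r["query"])]


def non_brand_impressions(rows):
    return sum(r["impressions"] for r in non_brand(rows))


def ctr(rows):
    """Aggregate CTR as a fraction: total clicks / total impressions."""
    impressions = sum(r["impressions"] for r in rows)
    clicks = sum(r["clicks"] for r in rows)
    return clicks / impressions if impressions else 0.0


def ctr_lift(baseline_rows, current_rows):
    """Current CTR minus baseline CTR; both inputs should cover the same query set."""
    return ctr(current_rows) - ctr(baseline_rows)
```

For example, `rate(18, 20)` gives an indexation rate of 90.0 for 18 indexed pages out of 20 published, and the same helper works for content coverage.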

Outcome metrics (organic revenue, assisted conversions, pipeline influenced, CAC/LTV context)

- Organic revenue = revenue where organic search is the attributed channel (per your analytics model)
- Assisted conversions = conversions where organic appears in the path but is not the last touch
- Pipeline influenced (B2B) = sum of opportunity value where organic was a first touch or assisted touch (define the rule once and apply it consistently)
- Incremental organic value (model) = incremental organic sessions × conversion rate × value per conversion
- Cost savings = hours saved × loaded hourly rate

Why these matter: They translate SEO operations improvements into financial language—revenue, pipeline, and cost.
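The assisted-conversion and pipeline-influenced definitions depend on how you read a touch path, so it can help to write the rule down as code. This sketch assumes each conversion or opportunity carries an ordered list of channel names (a hypothetical `path` field) and applies the rule literally as stated above; your analytics model may define touches differently.

```python
def classify(path, channel="organic"):
    """Label a touch path: 'last_touch' if the channel is the final touch,
    'assisted' if it appears earlier but is not last, otherwise 'none'."""
    if not path or channel not in path:
        return "none"
    return "last_touch" if path[-1] == channel else "assisted"


def pipeline_influenced(opportunities, channel="organic"):
    """Sum opportunity value where the channel was first touch or an assisted touch.

    Note: read literally, this rule excludes paths where the channel is ONLY
    the last touch; adjust it if your team defines influence more broadly.
    """
    total = 0
    for opp in opportunities:
        path = opp["path"]
        if path and (path[0] == channel or classify(path, channel) == "assisted"):
            total += opp["value"]
    return total


# Hypothetical opportunities; values and channel names are made up.
OPPS = [
    {"value": 10000, "path": ["organic", "email", "paid"]},     # first touch
    {"value": 5000, "path": ["paid", "organic", "referral"]},   # assisted
    {"value": 8000, "path": ["paid", "email"]},                 # no organic
    {"value": 2000, "path": ["email", "organic"]},              # last touch only
]
```

Whatever rule you choose, keeping it in one function makes the "define rule" step auditable when finance asks how the number was produced.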

How to attribute ROI without overpromising (a defensible evaluation model)

You don’t need perfect attribution to report credible ROI. You need a clear stance, consistent assumptions, and separation between what the SEO program achieved and what the SEO operation enabled.

Choose an attribution stance: direct, assisted, or blended

- Direct-only: conservative; easiest to defend; often undercounts SEO.
- Assisted: better reflects reality for many journeys; requires stakeholder education.
- Blended: combine conservative outcome estimates with hard cost savings from efficiency.

For “SEO operations ROI,” a blended stance is usually the most defensible because it anchors on operational savings you can verify, then adds outcome estimates with documented assumptions.

Use holdouts and time windows where possible (and document assumptions)

If you can, use any of these:

- Holdouts: delay publishing for a subset of comparable pages to estimate lift.
- Before/after windows: compare performance after updates with a consistent lag window.
- Matched comparisons: compare updated pages to a similar group not updated.

Always document:

- Time window used (e.g., 28 days, 90 days)
- Lag assumptions (SEO impact is not instantaneous)
- What changed (content, internal links, technical fixes, templates)
- Measurement model used (direct vs assisted)
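One simple way to combine a before/after window with a matched comparison is to scale the updated pages' baseline by the trend seen in the comparison group, then treat anything above that as the estimated lift. The sketch below is an illustrative assumption, not a rigorous causal model: the function name and inputs are hypothetical, and it assumes both groups are measured over the same windows.

```python
def estimate_incremental_sessions(updated_before, updated_after,
                                  comparison_before, comparison_after):
    """Estimate lift on updated pages after adjusting for the comparison group's trend.

    expected_after = updated_before scaled by the comparison group's growth.
    The estimate is whatever the updated pages achieved beyond that expectation.
    """
    if comparison_before <= 0:
        raise ValueError("comparison group needs a non-zero baseline")
    expected_after = updated_before * comparison_after / comparison_before
    return updated_after - expected_after


# Hypothetical sessions over equal-length windows (e.g., 28 days before vs after).
lift = estimate_incremental_sessions(
    updated_before=1000, updated_after=1300,
    comparison_before=2000, comparison_after=2200,
)
```

Here the comparison group grew 10%, so 100 of the 300 extra sessions on updated pages are attributed to the general trend and 200 to the change. The result is only as good as the match between the two groups, which is why the documentation list above matters.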

Separate “SEO performance” from “SEO operations performance”

This separation prevents common reporting failures:

- SEO performance answers: “Are we winning demand?” (Output + Outcomes)
- SEO operations performance answers: “Can we reliably produce wins?” (Efficiency + Output enablers)

You can improve operations even when market demand, seasonality, or product conversion rates blur short-term outcomes.

The SEO Operations ROI Reporting Template (copy/paste structure)

Use this structure to build an ROI-ready scorecard that executives can scan quickly while giving your team operational levers to pull.

Section A — Weekly ops scorecard (velocity + quality)

- Planned vs published: # planned, # published, on-time delivery rate
- Cycle time: median days from brief approved → published (and by stage if you track it)
- Throughput by type: net-new pages, refreshes, technical fixes shipped
- Rework rate: % returned for revisions (brief, SEO QA, legal, dev)
- Top bottleneck: one sentence (“editing queue” / “dev backlog”)
- This week’s operational change: one change you made to reduce friction

Section B — Monthly SEO output scorecard (coverage + demand capture)

- Indexation rate: published vs indexed (with notes on exceptions)
- Non-brand impressions/clicks: total and for priority clusters
- CTR: overall and priority query sets; note snippet/title changes
- Content coverage: % of mapped queries with a live page
- Top 5 wins / top 5 risks: pages or clusters with notable changes

Section C — Quarterly business outcomes (revenue/pipeline + cost savings)

- Organic revenue or pipeline influenced: with attribution stance noted
- Assisted conversions: trend and definition
- Incremental value estimate: sessions lift × CVR × value (show assumptions)
- Cost savings: hours saved × loaded rate (tie to operational improvements)
- ROI summary: (incremental value + cost savings − program cost) ÷ program cost
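The quarterly ROI summary can be sketched as three small functions that mirror the formulas in this section. Every number in the example call is hypothetical (the hourly rate, session lift, conversion rate, value per conversion, and program cost are placeholders), so treat it as a template for your own inputs rather than a benchmark.

```python
def cost_savings(hours_saved, loaded_hourly_rate):
    """Cost savings = hours saved x loaded hourly rate."""
    return hours_saved * loaded_hourly_rate


def incremental_value(incremental_sessions, conversion_rate, value_per_conversion):
    """Incremental value (estimate) = sessions lift x CVR x value per conversion."""
    return incremental_sessions * conversion_rate * value_per_conversion


def roi(value, savings, program_cost):
    """ROI = (incremental value + cost savings - program cost) / program cost."""
    if program_cost <= 0:
        raise ValueError("program_cost must be positive")
    return (value + savings - program_cost) / program_cost


# Hypothetical quarter: 20 pages/month x 6 hours saved x 3 months = 360 hours.
savings = cost_savings(hours_saved=360, loaded_hourly_rate=80)
value = incremental_value(incremental_sessions=10_000,
                          conversion_rate=0.02,
                          value_per_conversion=150)
quarterly_roi = roi(value, savings, program_cost=40_000)
print(f"Savings: {savings:,.0f}  Value: {value:,.0f}  ROI: {quarterly_roi:.0%}")
```

Reporting the savings and the value estimate as separate lines, as the print statement does, keeps the verifiable part of the number distinct from the modeled part.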

Section D — Narrative: what changed, why it changed, what we’ll do next

Keep this short and repeatable:

- What changed: (e.g., “Cycle time dropped 30% due to standardized briefs.”)
- Why it changed: (the operational decision)
- What it affected: (indexation, impressions, CTR, conversions)
- What we’ll do next: the next constraint to remove

If you want help standing this up quickly (KPI definitions, cadence, and a single scorecard leadership trusts), consider a 30-day pilot to unify SEO operations and prove ROI fast.

Example: turning operational improvements into ROI math

Two ROI paths are usually available immediately: cost savings and incremental value. Use both, but label the second as an estimate with assumptions.

Cost savings example (hours saved × loaded rate)

Scenario: You reduce rework and handoffs by standardizing briefs and QA, saving 6 hours per page across writing, editing, and SEO QA.

- Pages published per month: 20
- Hours saved per page: 6
- Loaded hourly rate (blended): $X

Monthly cost savings = 20 × 6 × $X = $120X

This is highly defensible if you document the “before” workflow, the “after” workflow, and the assumption behind the loaded rate.

Revenue/pipeline example (incremental organic sessions × conversion rate × value)

Scenario: Operational improvements increase throughput and quality, which improves coverage and lifts non-brand sessions over a defined window.

- Incremental organic sessions (quarter): +S (vs baseline or matched set)
- Organic conversion rate: CR
- Value per conversion (revenue or qualified pipeline value): $V

Incremental value (estimate) = S × CR × $V

To keep this defensible, show the time window, lag, and whether S is total lift or lift attributed to a set of pages you shipped.

Implementation checklist (30–60 minutes to get your first version live)

Start small. A usable v1 beats a perfect dashboard that never ships.

Define KPI owners and cadence (weekly/monthly/quarterly)

- Weekly: cycle time, throughput, on-time delivery, rework rate (owned by SEO ops lead)
- Monthly: indexation, non-brand impressions/clicks, CTR, coverage (owned by SEO lead)
- Quarterly: revenue/pipeline influenced + cost savings + ROI summary (owned by SEO + finance/analytics partner)

Unify your data sources into a single source of truth

- Pick one place where KPI definitions live (a doc or dashboard notes section).
- Pick one reporting artifact (one-page scorecard) that gets reused every cycle.
- Map each KPI to a source and an owner (even if the first version is partly manual).

Automate the workflow so the metrics improve predictably

- Standardize briefs and acceptance criteria to reduce rework.
- Create a consistent QA checklist (SEO + editorial + technical).
- Limit work-in-progress to reduce cycle time.
- Track bottlenecks weekly and remove one constraint at a time.

When to escalate: thresholds that signal an operations problem (not a content problem)

Use threshold-based triggers so you don’t misdiagnose performance.

If velocity is down: bottleneck diagnosis

- Cycle time up + throughput down → too many handoffs or unclear ownership
- On-time rate down → planning mismatch, scope creep, or competing priorities
- Rework rate up → unclear briefs, inconsistent QA, or misaligned expectations

Action: fix the process before changing the strategy.

If output is up but outcomes are flat: intent/offer/measurement diagnosis

- Indexation low → technical/QA issues, internal linking, or quality signals
- Impressions up but CTR flat → snippet/title misalignment or weak differentiation
- Traffic up but conversions flat → intent mismatch, weak offer, or landing page friction
- Outcomes unclear → attribution stance and reporting definitions need agreement

Action: separate “we shipped more” from “we shipped the right things” and ensure measurement assumptions are documented.
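The triggers in this section can be encoded as explicit rules so the same diagnosis runs every cycle. In this sketch the thresholds are assumptions you would need to set yourself: `FLAT` treats any period-over-period change within ±2% as "flat", and `MIN_INDEXATION` treats an indexation rate under 80% as "low". The metric keys are hypothetical names for relative changes versus the prior period.

```python
FLAT = 0.02            # assumption: changes within +/-2% count as "flat"
MIN_INDEXATION = 80.0  # assumption: indexation rate (%) below this counts as "low"


def up(change):
    return change > FLAT


def down(change):
    return change < -FLAT


def flat(change):
    return abs(change) <= FLAT


def diagnose(change, indexation_rate):
    """Map metric changes (relative, vs prior period) to likely operational causes."""
    findings = []
    if up(change["cycle_time"]) and down(change["throughput"]):
        findings.append("too many handoffs or unclear ownership")
    if down(change["on_time_rate"]):
        findings.append("planning mismatch, scope creep, or competing priorities")
    if up(change["rework_rate"]):
        findings.append("unclear briefs, inconsistent QA, or misaligned expectations")
    if indexation_rate < MIN_INDEXATION:
        findings.append("technical/QA issues, internal linking, or quality signals")
    if up(change["impressions"]) and flat(change["ctr"]):
        findings.append("snippet/title misalignment or weak differentiation")
    if up(change["traffic"]) and flat(change["conversions"]):
        findings.append("intent mismatch, weak offer, or landing page friction")
    return findings


# Hypothetical period-over-period changes (0.15 means +15%).
example = diagnose(
    {"cycle_time": 0.15, "throughput": -0.10, "on_time_rate": 0.0,
     "rework_rate": 0.01, "impressions": 0.20, "ctr": 0.01,
     "traffic": 0.01, "conversions": 0.0},
    indexation_rate=92.0,
)
```

With these inputs the function flags the handoff/ownership issue and the snippet/title issue, and nothing else. The rules only point at where to look; the diagnosis itself still needs a person.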

Next step: install a measurable SEO Operating System

Once your KPI layers are defined and your scorecard cadence is in place, the goal is simple: make operational improvements predictable so SEO outcomes become repeatable.

That’s the point of an operating system approach—connecting workflow, KPI ownership, and reporting so your team can close the Operations Gap and show ROI with confidence. If you’re evaluating a systemized way to do that, explore an SEO Operating System that connects workflow to measurable results.

See how the SEO Operating System turns ops actions into ROI signals.

FAQs

What are the best SEO operations ROI metrics to start with?

Start with a small set across the three layers: (1) Efficiency: time-to-publish, throughput, cost per published page; (2) Output: indexation rate, non-brand impressions, CTR; (3) Outcomes: organic revenue (or pipeline influenced) plus documented cost savings from hours reduced.

How do I calculate ROI for SEO operations if attribution is messy?

Use a defensible blended model: quantify cost savings (hours saved × loaded rate) and pair it with incremental outcome estimates (e.g., incremental organic sessions × conversion rate × value). Document assumptions, time windows, and whether you’re reporting direct vs assisted impact.

What’s the difference between SEO ROI and SEO operations ROI?

SEO ROI focuses on results (traffic, leads, revenue). SEO operations ROI measures whether your system reliably produces those results—speed, cost, quality, and measurement integrity—so performance becomes repeatable rather than ad hoc.

Which KPIs should be weekly vs monthly vs quarterly?

Weekly: operational efficiency (cycle time, throughput, rework). Monthly: SEO outputs (indexation, impressions, CTR, content coverage). Quarterly: business outcomes (revenue/pipeline influenced, CAC/LTV context) and cumulative cost savings.

How do I present SEO operations ROI to executives?

Lead with outcomes and confidence: show the business metric trend, then the operational drivers that explain it (velocity, coverage, quality). Keep a one-page scorecard, include assumptions, and end with the next-quarter plan tied to measurable targets.
