SEO Ops Best Practices for 2026 (Framework + Template)

SEO Ops Best Practices for 2026: A Practical Framework + Operating Template

In 2026, “best practices” for SEO aren’t primarily about finding a new trick—they’re about running SEO like an operating model: repeatable workflows, clear ownership, decision-ready measurement, and fast feedback loops. That’s what SEO operations (SEO ops) is: the system that turns SEO strategy into reliable shipping and measurable outcomes.

If you want the broader system—including how to structure teams, KPIs, and operating cadence across growth—start with our SEO Operations Playbook for teams and KPIs. This article is the implementable layer: a framework, a quick audit scorecard, and a copy/paste template you can install in weeks.

What “SEO ops” means in 2026 (and what changed)

SEO ops is the set of processes, roles, governance, and measurement that makes SEO execution predictable. In 2026, three shifts raise the bar:

- Speed expectations increased. Teams need shorter content cycle times and faster iteration, without sacrificing brand and technical quality.

- Traceability is mandatory. Leadership expects a credible line from work shipped → visibility gained → qualified traffic/conversions → revenue or pipeline contribution where applicable.

- AI-assisted execution is normal. The differentiator isn’t “using AI” but having controls, QA gates, and measurement so automation doesn’t create risk or noise.

The Operations Gap: why output ≠ outcomes

The Operations Gap is the distance between what a team produces (articles published, tickets closed) and what the business gets (qualified organic demand, conversions, pipeline). Many teams publish consistently but can’t answer:

- Which work moved which KPI?

- What should we do more of next month, based on evidence?

- Where is cycle time getting stuck (briefing, editing, dev, publishing, indexing, measurement)?

Closing the gap requires an operating system: unified data + standardized workflow + clear ownership + KPIs that drive decisions.

The 2026 bar: speed, traceability, and ROI attribution

High-performing SEO programs in 2026 behave like product teams:

- Short release cycles (weekly shipping) with explicit QA gates.

- Instrumented work (every initiative mapped to leading and lagging KPIs).

- Learning loops (monthly retro that changes the backlog).

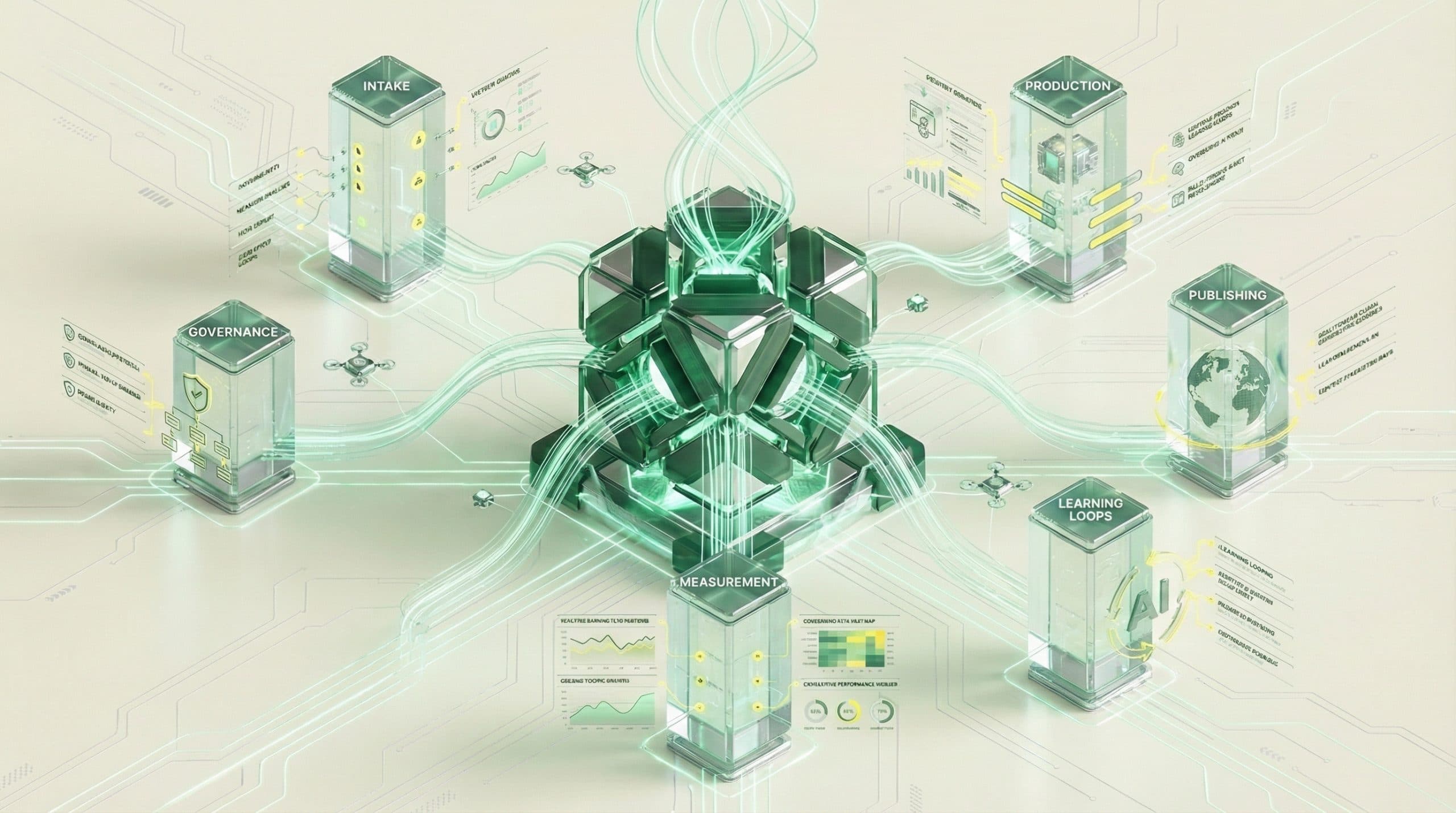

The 2026 SEO Ops Best Practices Framework (6 pillars)

Use these six pillars as your operating model. Each one is measurable, auditable, and installable.

1) Single source of truth for SEO data + decisions

Best practice: one place where the team can see what’s planned, what shipped, what changed, and what it did—without stitching together screenshots and spreadsheets.

- Decisions are logged. Why was a topic chosen? What hypothesis are we testing?

- URLs are traceable. Each page maps to an intent, a topic cluster, and a KPI target.

- Inputs are consistent. The same definitions for “published,” “updated,” “indexed,” “conversion,” etc.

Outcome: Less debate, faster prioritization, and reporting that drives action.
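To make the traceability rule checkable rather than aspirational, here is a minimal Python sketch of a URL inventory row plus a check that flags pages missing an intent, topic cluster, or KPI target. The `UrlRecord` fields and the `untraceable` helper are hypothetical names for illustration; in practice the inventory would live in whatever tool serves as your single source of truth.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class UrlRecord:
    """One row of a hypothetical URL inventory (field names are illustrative)."""
    url: str
    intent: Optional[str] = None          # e.g. "informational", "commercial"
    topic_cluster: Optional[str] = None   # cluster the page belongs to
    kpi_target: Optional[str] = None      # e.g. "top-10 share in cluster"
    decision_note: Optional[str] = None   # why the topic was chosen / hypothesis


# Every page must map to these three fields to count as traceable.
REQUIRED_FIELDS = ("intent", "topic_cluster", "kpi_target")


def untraceable(records: List[UrlRecord]) -> Dict[str, List[str]]:
    """Return {url: [missing fields]} for pages that break the traceability rule."""
    gaps: Dict[str, List[str]] = {}
    for rec in records:
        missing = [name for name in REQUIRED_FIELDS if not getattr(rec, name)]
        if missing:
            gaps[rec.url] = missing
    return gaps


inventory = [
    UrlRecord("/blog/seo-ops", "informational", "seo-operations", "top-10 share in cluster"),
    UrlRecord("/blog/old-post", intent="informational"),
]
print(untraceable(inventory))  # {'/blog/old-post': ['topic_cluster', 'kpi_target']}
```

Running a check like this before each weekly review turns “URLs are traceable” into a concrete list of pages to fix.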

2) Standardized workflow from idea → publish → measure

Best practice: every piece of work moves through the same stages with clear exit criteria. This makes cycle time visible and improvable.

- Intake: where ideas come from and how they’re scored.

- Brief: what “done” means before writing starts.

- Production: writing, editing, visuals, on-page implementation.

- Publish: metadata, internal links, schema, technical checks.

- Measure: instrumentation, benchmarks, KPI review.

- Refresh: scheduled updates based on decay and opportunity.

Outcome: Fewer handoff failures and less rework.
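The Refresh stage depends on detecting decay consistently. One simple rule, shown here as a minimal Python sketch, compares a page’s recent average weekly clicks with the preceding baseline window and flags it when the drop exceeds a threshold. The window sizes, the 20% threshold, and the function names are assumptions for illustration, not recommended values; the weekly click counts could come from any analytics or search performance export.

```python
from statistics import mean
from typing import Dict, List, Optional


def relative_drop(clicks_by_week: List[int], baseline_weeks: int = 8,
                  recent_weeks: int = 4) -> Optional[float]:
    """Fractional drop of recent average clicks vs. the preceding baseline.

    clicks_by_week is ordered oldest -> newest. Returns None when there is
    not enough history or the baseline is zero. Window sizes are illustrative.
    """
    if len(clicks_by_week) < baseline_weeks + recent_weeks:
        return None
    window = clicks_by_week[-(baseline_weeks + recent_weeks):]
    baseline = mean(window[:baseline_weeks])
    recent = mean(window[baseline_weeks:])
    if baseline == 0:
        return None
    return (baseline - recent) / baseline


def refresh_queue(pages: Dict[str, List[int]], drop_threshold: float = 0.2) -> List[str]:
    """URLs whose drop exceeds drop_threshold (0.2 = 20%), largest drop first."""
    flagged = []
    for url, clicks in pages.items():
        drop = relative_drop(clicks)
        if drop is not None and drop > drop_threshold:
            flagged.append((drop, url))
    return [url for _, url in sorted(flagged, reverse=True)]


pages = {
    "/steady": [100] * 12,
    "/slipping": [100] * 8 + [70] * 4,
    "/falling": [100] * 8 + [50] * 4,
    "/new": [40] * 3,
}
print(refresh_queue(pages))  # ['/falling', '/slipping']
```

Comparing averages over windows, rather than week-over-week changes, keeps one noisy week from pushing a page into the refresh queue.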

3) Clear roles, handoffs, and governance (RACI)

Best practice: define who is Responsible, Accountable, Consulted, and Informed for each workflow stage.

- Prevents “everyone owns it” (meaning no one owns it).

- Reduces bottlenecks (especially around editing, visuals, and dev).

- Makes SLA expectations explicit (what turnaround time is acceptable).

Outcome: Predictable throughput and fewer missed deadlines.
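A RACI is easy to draft and easy to let drift. This minimal Python sketch encodes a matrix per workflow stage and checks two common RACI conventions: exactly one Accountable and at least one Responsible per stage. It also flags items that have sat in a stage longer than that stage’s turnaround SLA. The role names, stages shown, SLA days, and item IDs are hypothetical placeholders.

```python
from datetime import date
from typing import Dict, List, Tuple

# Hypothetical RACI: stage -> {role: "R" | "A" | "C" | "I"}
RACI: Dict[str, Dict[str, str]] = {
    "Brief":   {"SEO lead": "A", "Strategist": "R", "Editor": "C"},
    "Draft":   {"Editor": "A", "Writer": "R"},
    "Edit":    {"Editor": "A", "Writer": "C"},  # no "R": flagged below
    "Publish": {"SEO lead": "A", "Developer": "R", "Leadership": "I"},
}

# Hypothetical turnaround expectations, in calendar days.
SLA_DAYS: Dict[str, int] = {"Edit": 3, "Visuals": 5, "Publish": 2}


def raci_problems(matrix: Dict[str, Dict[str, str]]) -> List[str]:
    """List stages that violate 'one Accountable, at least one Responsible'."""
    problems = []
    for stage, roles in matrix.items():
        letters = list(roles.values())
        if letters.count("A") != 1:
            problems.append(f"{stage}: expected 1 Accountable, found {letters.count('A')}")
        if "R" not in letters:
            problems.append(f"{stage}: nobody is Responsible")
    return problems


def sla_breaches(items: List[Tuple[str, str, date]], today: date) -> List[str]:
    """IDs of items that have been in their current stage longer than its SLA.

    Each item is (item_id, current_stage, date_entered_stage).
    """
    return [
        item_id
        for item_id, stage, entered in items
        if stage in SLA_DAYS and (today - entered).days > SLA_DAYS[stage]
    ]


print(raci_problems(RACI))  # ['Edit: nobody is Responsible']
wip = [("A-101", "Edit", date(2026, 1, 5)), ("A-102", "Visuals", date(2026, 1, 8))]
print(sla_breaches(wip, today=date(2026, 1, 10)))  # ['A-101']
```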

4) KPI tree that connects work to revenue outcomes

Best practice: run SEO with a KPI tree (leading → mid → lagging indicators). Rankings alone aren’t decision-ready; they’re a symptom, not a system.

- Leading: controllable execution metrics (cycle time, QA pass rate, publish velocity, refresh rate).

- Mid: market feedback (impressions, CTR, non-branded rankings by topic, indexation coverage).

- Lagging: business impact (qualified organic sessions, conversions, revenue/pipeline contribution where applicable).

Outcome: You can prove progress before revenue shows up—and diagnose what’s broken when it doesn’t.

5) Cadence: weekly execution + monthly learning loops

Best practice: separate “shipping” from “learning,” and keep both sacred.

- Weekly: backlog grooming, blockers, publish plan, QA readiness, internal linking/updates.

- Monthly: performance review by topic cluster, refresh plan, experiments, and reprioritization.

Outcome: Less thrash. More compounding.

6) Quality control: content, technical, and brand safeguards

Best practice: quality is a defined gate, not a subjective hope.

- Content QA: intent match, completeness, originality, internal link targets, factual checks.

- Technical QA: indexability, canonicals, performance basics, structured data where appropriate.

- Brand QA: voice, claims, compliance, and consistency with positioning.

Outcome: Fewer post-publish emergencies and less reputational risk.
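Some technical QA checks can run automatically before a page is marked ready. This minimal Python sketch uses only the standard library’s `html.parser` to check a page’s HTML for a `<title>`, a meta description, a `noindex` robots directive, and the expected canonical URL. It covers only a subset of the technical QA gate (no performance or structured-data checks), and the class and function names are hypothetical; treat it as an illustration rather than a complete checker.

```python
from html.parser import HTMLParser
from typing import List, Optional


class HeadScanner(HTMLParser):
    """Collects the few <head> signals this sketch checks."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.meta_description: Optional[str] = None
        self.robots = ""
        self.canonical: Optional[str] = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)  # attribute values may be None for bare attributes
        if tag == "title":
            self._in_title = True
        elif tag == "meta":
            name = (attrs.get("name") or "").lower()
            if name == "robots":
                self.robots = (attrs.get("content") or "").lower()
            elif name == "description":
                self.meta_description = attrs.get("content")
        elif tag == "link" and "canonical" in (attrs.get("rel") or "").lower().split():
            self.canonical = attrs.get("href")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def technical_qa(html: str, expected_canonical: str) -> List[str]:
    """Return a list of failures; an empty list means these checks passed."""
    scanner = HeadScanner()
    scanner.feed(html)
    failures = []
    if not scanner.title.strip():
        failures.append("missing <title>")
    if not scanner.meta_description:
        failures.append("missing meta description")
    if "noindex" in scanner.robots:
        failures.append("robots meta contains noindex")
    if scanner.canonical != expected_canonical:
        failures.append(f"canonical is {scanner.canonical!r}, expected {expected_canonical!r}")
    return failures


page = ('<html><head><title>SEO Ops</title>'
        '<meta name="description" content="How to run SEO ops">'
        '<link rel="canonical" href="https://example.com/seo-ops">'
        '</head><body></body></html>')
print(technical_qa(page, "https://example.com/seo-ops"))  # []
```

Returning a list of failures, rather than a single pass/fail flag, gives the publisher something specific to fix before the gate is cleared.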

Evaluation scorecard: how to audit your SEO operations in 30 minutes

Score each pillar 0–3. The goal isn’t perfection—it’s identifying where ops debt is hiding and what to fix first.

Maturity levels (0–3) for each pillar

- 0 (Ad hoc): work happens, but it’s inconsistent and hard to explain.

- 1 (Documented): you have a process, but compliance is uneven and measurement is messy.

- 2 (Managed): workflow and ownership are consistent; KPIs are reviewed on a cadence.

- 3 (Optimized): cycle time is actively reduced; automation exists with governance; KPI learning loops continuously reshape priorities.
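Tallying the audit is simple arithmetic, but writing it down keeps scores comparable from one quarter to the next. This minimal Python sketch validates that each of the six pillars has a 0–3 score, sums them (maximum 18), and returns the lowest-scoring pillars as candidates to fix first. The “fix the lowest score first” rule is an assumption made for illustration, and the example scores are invented.

```python
from typing import Dict, List, Tuple

PILLARS = [
    "Single source of truth",
    "Standardized workflow",
    "Roles, handoffs, and governance",
    "KPI tree",
    "Cadence",
    "Quality control",
]
LEVELS = {0: "Ad hoc", 1: "Documented", 2: "Managed", 3: "Optimized"}


def audit(scores: Dict[str, int]) -> Tuple[int, List[str]]:
    """Return (total out of 18, lowest-scoring pillars) after validating inputs."""
    for pillar in PILLARS:
        score = scores.get(pillar)
        if score not in LEVELS:
            raise ValueError(f"{pillar}: score must be 0-3, got {score!r}")
    total = sum(scores[p] for p in PILLARS)
    lowest = min(scores[p] for p in PILLARS)
    fix_first = [p for p in PILLARS if scores[p] == lowest]
    return total, fix_first


example = dict.fromkeys(PILLARS, 2)
example["Cadence"] = 0
example["KPI tree"] = 1
total, fix_first = audit(example)
print(f"{total}/18, fix first: {fix_first} ({LEVELS[example[fix_first[0]]]})")
# 9/18, fix first: ['Cadence'] (Ad hoc)
```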

Red flags that signal ops debt (and what it costs)

- “We publish a lot, but can’t tell what’s working.” Cost: wasted content spend and slow learning.

- Work stalls in editing or dev with no SLA. Cost: longer time-to-impact and missed seasonal windows.

- Reporting doesn’t change the backlog. Cost: repeating the same outputs regardless of results.

- No refresh system. Cost: content decay and compounding losses across the library.

- Rankings are the only shared KPI. Cost: leadership mistrust and fragile prioritization.

The SEO Ops Template for 2026 (copy/paste)

Use the templates below as a starting point. The fastest path is to standardize your system first, then refine.

A) Workflow stages + definitions (intake, brief, draft, visuals, publish, refresh)

Copy/paste workflow stages (adjust names to match your tools):

- Intake (Backlog)
  - Entry criteria: topic idea + target page type + primary intent
  - Exit criteria: scored and prioritized; owner assigned

- Brief
  - Entry criteria: chosen topic + SERP snapshot + internal link targets
  - Exit criteria: acceptance criteria defined (sections, claims, sources, CTA, on-page requirements)

- Draft
  - Entry criteria: approved brief
  - Exit criteria: draft meets scope, intent, and originality expectations

- Edit
  - Entry criteria: complete draft
  - Exit criteria: clarity, structure, brand voice, and factual checks pass

- Visuals
  - Entry criteria: edited draft + visual requirements (diagrams, screenshots, headers)
  - Exit criteria: visuals delivered with alt text notes and placement guidance

- Publish Prep (SEO/Tech QA)
  - Entry criteria: final copy + visuals
  - Exit criteria: metadata, internal links, schema needs, and indexability checks complete

- Publish
  - Entry criteria: QA passed
  - Exit criteria: live URL recorded; benchmark metrics captured

- Measure
  - Entry criteria: benchmark captured
  - Exit criteria: added to weekly & monthly review; hypothesis logged

- Refresh (Scheduled)
  - Entry criteria: decay detected or opportunity identified
  - Exit criteria: update shipped; change log recorded; before/after tracked
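Exit criteria only work if an item cannot move forward without meeting them. This minimal Python sketch models the stages above as an enum and gates each transition on a checklist. The checklist keys are hypothetical shorthand for the template’s exit criteria. Treating Measure as the stage where a page rests until a Refresh item reopens it is one reasonable interpretation, not the only one.

```python
from enum import Enum, auto
from typing import Dict, Iterable, Optional, Set


class Stage(Enum):
    INTAKE = auto()
    BRIEF = auto()
    DRAFT = auto()
    EDIT = auto()
    VISUALS = auto()
    PUBLISH_PREP = auto()
    PUBLISH = auto()
    MEASURE = auto()
    REFRESH = auto()


# Exit criteria per stage, as hypothetical checklist keys.
EXIT_CRITERIA: Dict[Stage, Set[str]] = {
    Stage.INTAKE: {"scored", "owner_assigned"},
    Stage.BRIEF: {"acceptance_criteria_defined"},
    Stage.DRAFT: {"scope_intent_originality_ok"},
    Stage.EDIT: {"clarity_structure_voice_facts_ok"},
    Stage.VISUALS: {"visuals_delivered", "alt_text_notes", "placement_guidance"},
    Stage.PUBLISH_PREP: {"metadata", "internal_links", "schema_reviewed", "indexability_checked"},
    Stage.PUBLISH: {"live_url_recorded", "benchmark_captured"},
    Stage.MEASURE: {"in_weekly_and_monthly_review", "hypothesis_logged"},
    Stage.REFRESH: {"update_shipped", "change_log_recorded", "before_after_tracked"},
}

# Linear flow; a refreshed page returns to measurement.
NEXT: Dict[Stage, Stage] = {
    Stage.INTAKE: Stage.BRIEF,
    Stage.BRIEF: Stage.DRAFT,
    Stage.DRAFT: Stage.EDIT,
    Stage.EDIT: Stage.VISUALS,
    Stage.VISUALS: Stage.PUBLISH_PREP,
    Stage.PUBLISH_PREP: Stage.PUBLISH,
    Stage.PUBLISH: Stage.MEASURE,
    Stage.REFRESH: Stage.MEASURE,
}


def advance(stage: Stage, checks_done: Iterable[str]) -> Optional[Stage]:
    """Return the next stage, or raise ValueError if exit criteria are unmet.

    Returns None after MEASURE: the page stays under measurement until
    decay or an opportunity opens a new REFRESH item.
    """
    missing = EXIT_CRITERIA[stage] - set(checks_done)
    if missing:
        raise ValueError(f"{stage.name} cannot exit; missing {sorted(missing)}")
    return NEXT.get(stage)


print(advance(Stage.BRIEF, {"acceptance_criteria_defined"}))  # Stage.DRAFT
```

Raising on missing criteria, instead of silently advancing, makes skipped steps visible in whatever tool wraps this logic.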

B) KPI tree template (leading → lagging indicators)

Copy/paste KPI tree (fill in targets per month/quarter):

Business Outcome (Lagging)

- Qualified organic conversions (or pipeline/revenue where applicable)

- Organic-driven sign-ups / leads / purchases (choose 1–2)

SEO Outcome (Mid)

- Non-branded impressions by topic cluster

- CTR on priority pages

- Share of top 3 / top 10 rankings by topic (not just single keywords)

- Indexation coverage for new/updated URLs

Execution Health (Leading)

- Content cycle time (brief → publish) median

- Publish velocity (# shipped/week)

- Refresh rate (% of library refreshed/month)

- QA pass rate (% published without rollback)

- Internal links added to priority pages/week

Best practice: pick 3–5 leading metrics that you can directly control, and review them weekly. Review mid and lagging monthly.
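The same tree can live as data so the weekly and monthly agendas are generated from it rather than rebuilt by hand. This minimal Python sketch tags each KPI with its tier, routes leading KPIs to the weekly review and mid/lagging KPIs to the monthly review, and enforces the 3–5 weekly-metric guideline. The structure and names are illustrative assumptions. Targets are omitted because they depend on your baseline.

```python
from dataclasses import dataclass
from typing import Dict, List

CADENCE = {"leading": "weekly", "mid": "monthly", "lagging": "monthly"}


@dataclass(frozen=True)
class Kpi:
    name: str
    tier: str  # "leading", "mid", or "lagging"


KPI_TREE: List[Kpi] = [
    Kpi("Content cycle time (brief -> publish), median", "leading"),
    Kpi("Publish velocity (# shipped/week)", "leading"),
    Kpi("Refresh rate (% of library refreshed/month)", "leading"),
    Kpi("QA pass rate (% published without rollback)", "leading"),
    Kpi("Non-branded impressions by topic cluster", "mid"),
    Kpi("CTR on priority pages", "mid"),
    Kpi("Share of top 3 / top 10 rankings by topic", "mid"),
    Kpi("Indexation coverage for new/updated URLs", "mid"),
    Kpi("Qualified organic conversions", "lagging"),
]


def review_agenda(tree: List[Kpi]) -> Dict[str, List[str]]:
    """Split KPIs into weekly and monthly review lists."""
    agenda: Dict[str, List[str]] = {"weekly": [], "monthly": []}
    for kpi in tree:
        if kpi.tier not in CADENCE:
            raise ValueError(f"{kpi.name}: unknown tier {kpi.tier!r}")
        agenda[CADENCE[kpi.tier]].append(kpi.name)
    if not 3 <= len(agenda["weekly"]) <= 5:
        raise ValueError("pick 3-5 leading KPIs for the weekly review")
    return agenda


agenda = review_agenda(KPI_TREE)
print(len(agenda["weekly"]), len(agenda["monthly"]))  # 4 5
```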

C) Weekly/Monthly cadence agenda templates

Weekly SEO Ops Standup (30–45 min)

- Shipping plan: what goes live this week (new + refresh)

- Blockers: editing, visuals, dev, approvals (assign owner + due date)

- QA gates: what needs checks before publish

- Internal linking: which priority pages get links this week

- Leading KPIs: cycle time, velocity, QA pass rate (what’s drifting?)

Monthly SEO Learning Loop (60–90 min)

- What moved: by topic cluster and page type

- Why it moved: shipping log + hypothesis review

- Double down: patterns to repeat next month

- Stop doing: work that didn’t create signal

- Refresh backlog: top opportunities and decays

- System improvements: one ops constraint to fix (handoff, QA, measurement)

D) Dashboard requirements checklist (what must be visible)

- Work-in-progress view: items by stage (brief, draft, edit, QA, publish)

- Cycle time: median and 90th percentile (where work gets stuck)

- Shipping log: what changed, when, and why

- Topic cluster performance: impressions/CTR/traffic by cluster

- Page-level outcomes: priority URLs with benchmarks and deltas

- Refresh queue: pages due for update based on decay/opportunity

- Executive summary: 3 insights + 3 actions (decision-ready)
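The cycle-time, velocity, and QA-pass-rate tiles can be computed directly from the shipping log. This minimal Python sketch assumes a hypothetical log row of (URL, brief start date, publish date, rolled back?) and uses a nearest-rank 90th percentile. Other percentile methods give slightly different values on small samples. The sample rows are invented for illustration.

```python
import math
from datetime import date
from statistics import median
from typing import List, NamedTuple


class Shipped(NamedTuple):
    url: str
    brief_started: date
    published: date
    rolled_back: bool


def p90(values: List[int]) -> int:
    """Nearest-rank 90th percentile (assumes a non-empty list)."""
    ordered = sorted(values)
    return ordered[math.ceil(0.9 * len(ordered)) - 1]


def leading_kpis(log: List[Shipped], start: date, end: date) -> dict:
    """Leading KPIs for items published in [start, end).

    Assumes at least one item was published in the window.
    """
    window = [row for row in log if start <= row.published < end]
    cycle_days = [(row.published - row.brief_started).days for row in window]
    weeks = (end - start).days / 7
    return {
        "cycle_time_median_days": median(cycle_days),
        "cycle_time_p90_days": p90(cycle_days),
        "publish_velocity_per_week": len(window) / weeks,
        "qa_pass_rate": sum(not row.rolled_back for row in window) / len(window),
    }


LOG = [
    Shipped("/a", date(2026, 1, 5), date(2026, 1, 12), False),   # 7 days
    Shipped("/b", date(2026, 1, 5), date(2026, 1, 15), False),   # 10 days
    Shipped("/c", date(2026, 1, 6), date(2026, 1, 26), True),    # 20 days
    Shipped("/d", date(2026, 1, 8), date(2026, 1, 13), False),   # 5 days
    Shipped("/e", date(2026, 1, 12), date(2026, 1, 21), False),  # 9 days
]
print(leading_kpis(LOG, date(2026, 1, 5), date(2026, 2, 2)))
```

Showing the 90th percentile next to the median is what exposes the stuck items: here the median is 9 days, but one piece took 20.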

Implementation roadmap: install best practices in 30 days

This rollout assumes you keep publishing while you improve the system. The goal is to reduce cycle time, increase visibility into work, and make measurement actionable.

Week 1: unify sources + define KPIs

- Choose your single source of truth (where backlog, URL inventory, and shipping log live).

- Define the KPI tree (leading/mid/lagging) and what “good” looks like.

- Instrument basics: benchmark priority pages and establish a monthly reporting cut.

Week 2: standardize workflow + QA gates

- Implement the workflow stages and exit criteria.

- Adopt the RACI and set turnaround expectations (editing, visuals, dev).

- Create a publish checklist for content, technical, and brand QA.

Week 3: automate repeatable steps + reduce cycle time

- Templatize briefs, outlines, and acceptance criteria.

- Reduce handoffs: batch reviews, standardize feedback, and limit rework loops.

- Automate where safe (status updates, reminders, basic QA checks), but keep governance explicit.

Week 4: reporting loop + backlog prioritization

- Run your first monthly learning loop using the KPI tree.

- Turn insights into backlog changes (refreshes, internal links, consolidation, new content).

- Pick one constraint to fix next month (the biggest throughput limiter).

If you want hands-on help implementing this with your team, including workflow, measurement, and cadence, consider a 30-day SEO operations pilot to close the operations gap: a structured way to install the system quickly without turning the quarter into a rebuild.

CTA: Book a 30-day pilot to implement this SEO ops framework

Use a focused 30-day window to reduce cycle time, create visibility across the workflow, and connect execution to decision-ready KPIs.

Common pitfalls (and how to avoid them)

Mistaking more content for better operations

Publishing more can hide systemic issues: unclear briefs, inconsistent QA, weak internal linking, and no refresh plan. Fix the system first so each piece has a measurable purpose.

- Avoid it by: enforcing acceptance criteria in briefs and requiring KPI mapping per item.

Over-automating without governance

Automation without clear ownership can increase risk (brand claims, factual errors, thin duplication) and create noisy reporting.

- Avoid it by: keeping QA gates explicit and maintaining a shipping log with rationale.

Measuring rankings without decision-ready KPIs

Rankings fluctuate and rarely explain what to do next. The right question is: what should we ship, refresh, or consolidate based on evidence?

- Avoid it by: adopting a KPI tree and reviewing leading KPIs weekly, outcomes monthly.

Next step: choose your operating model (DIY vs system)

The template above is a strong DIY baseline. The decision is whether you want to run it manually (with docs, spreadsheets, and project tools) or treat SEO as an integrated operating system.

When a pilot makes sense

- You have strategy clarity, but shipping is slow or inconsistent.

- Handoffs are messy (writer → editor → visuals → dev → publish).

- Reporting exists, but it doesn’t change decisions.

A pilot is a controlled way to install workflow, cadence, and KPI traceability without committing to a long rebuild.

When an SEO Operating System makes sense

- You need a unified workflow across content, visuals, and publishing.

- You want fewer manual handoffs and clearer operational visibility.

- You’re ready to standardize and scale across a larger content program.

If you’re evaluating platforms rather than assembling a stack, see the Go/Organic SEO Operating System for unified workflows and publishing—built to help teams close the Operations Gap by unifying workflow, automating repeatable steps, and keeping measurement connected to what ships.

CTA: See the SEO Operating System that unifies content, visuals, and publishing

For teams that want an operational layer—workflow + publishing + visibility—without relying on disconnected tools and manual reporting.

FAQ

What are SEO ops best practices for 2026 (in one sentence)?

In 2026, SEO ops best practices mean running SEO like an operating system: a unified source of truth, standardized workflows, clear ownership, and KPIs that connect execution to measurable business outcomes.

How do I know if my team has an SEO operations problem or a strategy problem?

If strategy is clear but delivery is slow, inconsistent, or hard to measure (missed deadlines, unclear handoffs, reporting that doesn’t change decisions), it’s usually an operations gap; if delivery is consistent but results don’t improve, revisit strategy and targeting.

What KPIs should SEO operations track in 2026?

Track a KPI tree: leading indicators (cycle time, publish velocity, QA pass rate, content refresh rate), mid indicators (impressions, CTR, non-branded rankings by topic, indexation coverage), and lagging indicators (qualified organic sessions, conversions, revenue or pipeline where applicable).

What’s the minimum cadence for a high-performing SEO ops program?

A weekly execution cadence (backlog, blockers, publish plan, QA) plus a monthly learning loop (what moved, why, what to double down on, what to stop) is the minimum to keep speed and accountability without thrash.

How do we reduce SEO content cycle time without sacrificing quality?

Define workflow stages and QA gates, assign a RACI for each stage, standardize briefs and acceptance criteria, and automate repeatable steps—while keeping editorial and brand checks as explicit checkpoints.