Best SEO Workflow Automation Software (Framework)

Best SEO Workflow Automation Software for Growth Teams: An Evaluation Framework + Scorecard Template

“SEO workflow automation software” gets marketed like it’s just task automation (move cards, assign due dates, send reminders). But growth teams don’t lose time on reminders—they lose time in the gaps between tools, people, and proof of impact.

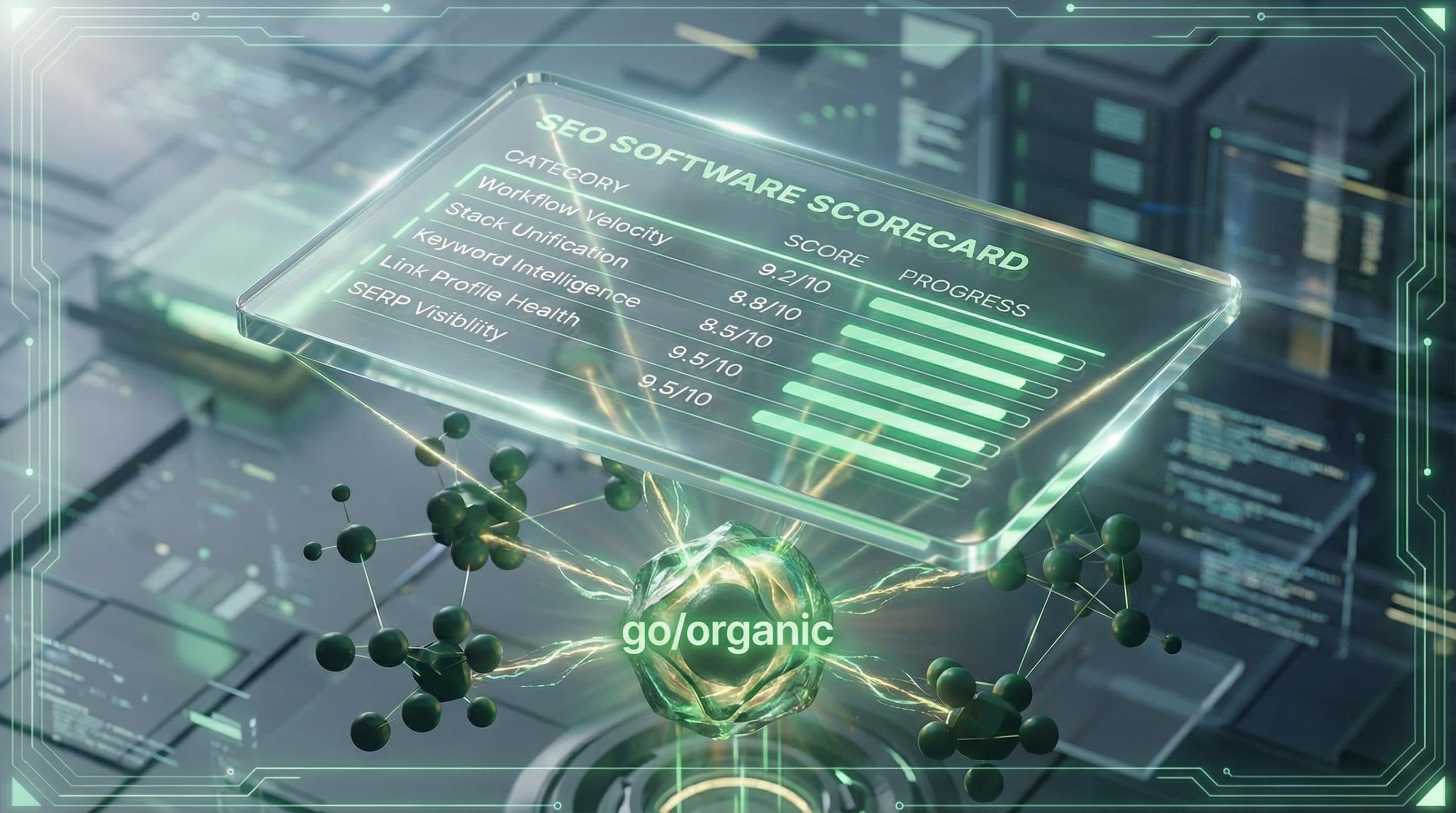

This guide gives you a practical way to evaluate the best SEO workflow automation software for growth teams without falling into a vendor listicle. You’ll get (1) a clear definition of what to automate, (2) the three realistic solution paths, and (3) a copy/paste scorecard you can use in 30 minutes.

If you want the category-level decision framed clearly before you evaluate vendors, start with this SEO OS vs tools comparison for growth teams—it maps the tradeoffs you’re actually making (system vs stack vs outsourced execution) and helps you avoid buying “another tool” that doesn’t close the operations gap.

What “SEO workflow automation” actually means for growth teams (not just task automation)

For a growth team, workflow automation isn’t about making individual tasks faster. It’s about turning a repeatable process (idea → publish → learn) into a reliable system that reduces handoffs, standardizes quality, and makes ROI visible.

The workflow stages to automate (idea → draft → visuals → publish → measure)

- Idea: deciding what to create and why (opportunity, intent, priority).
- Draft: producing a first version that meets your on-page and editorial standards.
- Visuals: getting images/graphics into a publish-ready state without blocking the queue.
- Publish: moving from “approved” to “live” with minimal manual copy/paste and QA.
- Measure: tying shipped work to outcomes (rankings/traffic/leads/sales) and feeding learnings back into planning.

Automation is real when it reduces the number of tools, tabs, handoffs, and manual QA steps required to move through these stages.

The real bottleneck: the Operations Gap (handoffs, silos, manual QA, unclear ROI)

Most teams don’t fail because they can’t come up with content ideas. They fail because execution happens across disconnected tools and roles:

- SEO research lives in one place, briefs in another, drafts in another, images in another.
- Publishing requires a specialist who knows the CMS quirks and does repetitive QA.
- Reporting is assembled manually, and attribution is debated instead of decided.

This is the Operations Gap: the distance between “we know what to do” and “we can ship it consistently and prove it worked.” Your software decision should be judged by how much of that gap it closes.

The 3 solution paths you’re really choosing between

When teams say they’re evaluating “SEO workflow automation software,” they’re usually choosing between three approaches:

SEO Operating System (unified stack + workflow automation + measurement)

An SEO Operating System (OS) is designed to unify your workflow end-to-end—so the output of one stage becomes the input of the next without fragile handoffs. The value is fewer moving pieces, clearer ownership, and faster iteration.

Point tools (best-of-breed, but integration + handoff overhead)

Point tools can be excellent at a single job (research, writing, reporting, project management). The tradeoff is the operational overhead of connecting them: integrations, permissions, QA standards, “where does the latest version live?”, and training across tools.

Agencies (outsourced execution, but less internal compounding + visibility)

Agencies can remove capacity constraints quickly. The tradeoff is often reduced day-to-day visibility, less internal system-building, and a harder time compounding learnings into a repeatable workflow owned by your team.

Evaluation framework: 7 criteria that predict speed + ROI (with scoring guidance)

Use these criteria to evaluate software (or an agency) based on whether it closes your Operations Gap. Score each criterion from 1 to 5, using 2 and 4 for cases that fall between these anchors:

- 1 = adds overhead or requires heavy custom ops to work
- 3 = workable with manual steps and clear owners
- 5 = reliably reduces handoffs and makes outcomes easier to measure

1) Stack unification (CMS + data sources) and single source of truth

Ask: Where does the “truth” live? If strategy, drafts, assets, publishing status, and performance live in different systems, you’ll manage coordination forever.

- Score 5 if the workflow is unified enough that team members don’t need multiple systems to move work forward.
- Score 3 if it works but requires consistent manual syncing.
- Score 1 if it increases fragmentation (another dashboard with partial context).

Integration reality check: verify what is actually connected versus “possible.” For Go/Organic specifically, WordPress, WooCommerce, and Bing Webmaster Tools are connected; Google Search Console and Shopify are not connected.

2) Workflow velocity (how many steps become “one flow” vs manual handoffs)

Ask: How many handoffs does this remove? Velocity comes from fewer approvals, fewer copy/paste moments, and fewer “waiting on X.”

- Look for clear stage definitions (e.g., brief → draft → review → publish → refresh).
- Check if the software supports repeatable playbooks (not just one-off projects).

3) Content production support (drafting + optimization support)

Ask: Does it help you ship content that meets your standards without adding extra rounds of edits?

- Support for structured briefs and on-page requirements reduces rework.
- Quality improves when guidelines are baked into the workflow, not stored in a separate doc.

4) Visual operations (image creation workflows that don’t slow publishing)

Visuals are a common hidden bottleneck. Ask:

- Who owns images (designer, marketer, contractor)?
- What’s the “definition of done” for visual assets (sizes, naming, accessibility text, brand constraints)?
- Does the workflow keep images attached to the content item so they don’t get lost in Slack threads?

5) Publishing automation (how close to 1-click publishing you can get)

Publishing is where many teams fall back into manual labor. Evaluate:

- How content moves into the CMS (copy/paste vs structured publishing).
- How QA is handled (links, headings, metadata, images, formatting).
- Whether publishing requires a single “CMS person” as a bottleneck.

6) Measurement that ties actions to outcomes (dashboards + ROI narrative)

Ask: Can we tell a clean story: what we shipped → what changed → what to do next? Growth teams need measurement that supports decisions, not just reporting.

- Score 5 if the system makes it easier to connect outputs to outcomes and review performance on a cadence.
- Score 1 if measurement still requires manual stitching across sources and debate about impact.

7) Governance: permissions, QA, repeatability, and playbooks

Automation breaks down when governance is unclear. Evaluate:

- Permissions/roles: can the right people approve and publish without chaos?
- QA checklists: are they embedded and repeatable?
- Playbooks: can you clone a workflow for new initiatives, markets, or product lines?
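The seven criteria above can be rolled into a single scorecard. The following is a minimal sketch in Python, not a definitive template: the criterion keys, the `score_option` helper, the equal default weighting, and the example scores for "Option A" and "Option B" are all hypothetical placeholders chosen for illustration, not vendor data.

```python
# Criterion keys mirror the seven criteria in this framework (names are illustrative).
CRITERIA = [
    "stack_unification",
    "workflow_velocity",
    "content_production",
    "visual_operations",
    "publishing_automation",
    "measurement",
    "governance",
]


def score_option(scores, weights=None):
    """Return the weighted average (1-5) of one option's criterion scores.

    `scores` maps every criterion to a number from 1 to 5.
    `weights` is optional; equal weighting is an assumption of this sketch.
    """
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    for name in CRITERIA:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be scored 1-5, got {scores[name]}")
    weights = weights or {c: 1.0 for c in CRITERIA}
    total_weight = sum(weights[c] for c in CRITERIA)
    weighted = sum(scores[c] * weights[c] for c in CRITERIA)
    return round(weighted / total_weight, 2)


# Hypothetical scores for two unnamed options, for illustration only.
option_a = dict(zip(CRITERIA, [5, 4, 4, 3, 4, 4, 4]))
option_b = dict(zip(CRITERIA, [2, 3, 4, 3, 2, 3, 3]))

for label, scores in [("Option A", option_a), ("Option B", option_b)]:
    print(f"{label}: {score_option(scores)}")
```

If one criterion matters more to your team (for example, measurement), pass a `weights` dictionary instead of relying on the equal default. Keep the evidence behind each score in your notes so the number stays defensible.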

CTA: Use the OS vs tools framework to pick your fastest path to ROI

Example: how to apply the framework to OS vs tools (without a tool listicle)

Don’t start by asking “which tool is best?” Start by asking which operating model fits your constraints. If you’re actively weighing approaches, use this page to compare an SEO Operating System vs point tools in a way that maps to your workflow and reporting needs.

When an SEO OS wins (speed, fewer handoffs, clearer measurement)

- You’re running cross-functional production (SEO + content + design + web) and coordination is the bottleneck.
- You want a repeatable system for shipping and learning, not a collection of expert-only processes.
- You need clearer visibility into what work is driving outcomes.

When point tools win (specialized needs, existing strong ops layer)

- You already have strong operational discipline (PM, templates, QA checklists, clear ownership).
- You have specialized requirements in one area and you’re willing to pay the integration tax.
- Your team has time to maintain workflows across multiple systems.

When an agency wins (temporary capacity gap, execution-only needs)

- You have a short-term content throughput goal and internal capacity is the only issue.
- You’re comfortable trading internal compounding for outsourced execution speed.
- You can define requirements clearly enough to avoid strategy-by-deliverable.

Implementation checklist: your first automation playbook (week 1–2)

Once you’ve chosen a direction, the fastest way to prove value is a single “first playbook” that ships real work and produces a measurable baseline.

Unify your stack (connect CMS + available data sources)

- Pick one content type for the pilot (e.g., blog posts or refreshes).
- Confirm what your system can actually connect today (avoid assumptions).
- Decide the single source of truth for: status, owner, draft, assets, publish date.

Automate the workflow (from idea to published with fewer handoffs)

- Define stages and “definition of done” for each stage.
- Embed QA as a checklist, not tribal knowledge.
- Set a rule for approvals (who approves what, by when).
- Run one full cycle end-to-end with 3–5 pieces of content.
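One way to make “definition of done” concrete is to write each stage’s checklist down as data and refuse to advance until it is complete. The sketch below assumes hypothetical stage names and checklist items (`STAGES`) and a hypothetical helper (`next_stage`); swap in your own stages and QA items.

```python
# Hypothetical stage names and checklist items; adapt to your own workflow.
STAGES = {
    "brief": ["target query and intent stated", "owner assigned"],
    "draft": ["on-page requirements met", "editorial review passed"],
    "visuals": ["images sized and named", "alt text written"],
    "publish": ["links checked", "metadata filled in"],
}
STAGE_ORDER = list(STAGES)  # dicts preserve insertion order in Python 3.7+


def next_stage(current, completed):
    """Return the stage that follows `current` once its checklist is complete.

    `completed` is the set of checklist items already ticked off.
    Raises ValueError listing what is still open, so gaps stay visible.
    """
    open_items = [item for item in STAGES[current] if item not in completed]
    if open_items:
        raise ValueError(f"{current} not done: {open_items}")
    index = STAGE_ORDER.index(current)
    if index + 1 == len(STAGE_ORDER):
        return "done"
    return STAGE_ORDER[index + 1]
```

For example, `next_stage("brief", {"target query and intent stated", "owner assigned"})` returns `"draft"`, while calling it with an incomplete checklist raises an error that names the open items. The point of the design is that QA lives in the workflow definition rather than in someone’s head.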

Measure what matters (baseline + reporting cadence)

- Pick a baseline date and capture current performance (even if imperfect).
- Decide on a review cadence (weekly for production, monthly for outcomes).
- Write the “ROI narrative” you want reporting to answer (e.g., what shipped, what moved, what to do next).
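The baseline comparison itself is simple arithmetic: for each metric, percent change = (current − baseline) / baseline × 100. A minimal sketch follows, assuming you export metrics as plain dictionaries; the metric names, the numbers, and both helper functions are hypothetical and only illustrate the calculation.

```python
def percent_change(baseline, current):
    """Percent change from baseline to current; None if the baseline is zero."""
    if baseline == 0:
        return None  # percent change is undefined without a non-zero baseline
    return round((current - baseline) / baseline * 100, 1)


def compare_to_baseline(baseline, current):
    """Map each baseline metric to its percent change (missing metrics count as 0)."""
    return {m: percent_change(baseline[m], current.get(m, 0)) for m in baseline}


# Hypothetical numbers for illustration only.
baseline = {"clicks": 1200, "leads": 40}
current = {"clicks": 1500, "leads": 46}
print(compare_to_baseline(baseline, current))
```

Running the comparison on the same cadence you chose above (monthly for outcomes) keeps the “what moved” part of the narrative tied to a fixed reference point instead of a shifting one.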

Next step: validate fit and cost

What to ask on a demo / trial to confirm workflow automation claims

- Show me a full run: idea/brief → draft → visuals → publish → measure (not separate features).
- Where do the handoffs disappear? Name the steps that become automatic or standardized.
- What does “week 1” look like? What will be live, connected, and shipping within two weeks?
- What requires manual work? Ask for a candid list of what still needs humans.
- How is measurement produced? What’s the default reporting cadence and who owns it?
- What is not integrated? Confirm data source and CMS limitations up front.

Pricing and rollout considerations (seats, usage, integrations, support)

Once you’ve validated the workflow, align cost to your volume and rollout plan. Review Go/Organic pricing and rollout options to sanity-check seats, implementation expectations, and how quickly you can scale beyond the pilot.

CTA: Review pricing to confirm fit for your team’s volume and workflow

FAQ

What is SEO workflow automation software?

It’s software that reduces manual handoffs across the SEO production lifecycle—planning, drafting, creating visuals, publishing to your CMS, and measuring outcomes—so a growth team can ship faster and see what work drives results.

How is an SEO Operating System different from SEO tools?

Tools typically solve a slice of the workflow (research, writing, reporting, etc.). An SEO Operating System is designed to unify the stack, automate the end-to-end workflow, and connect operational actions to measurable outcomes—reducing the “operations gap” created by disconnected tools.

What criteria matter most when evaluating SEO automation for growth teams?

Prioritize stack unification, workflow velocity, publishing automation, and measurement that ties actions to outcomes. These determine whether automation actually increases throughput and clarifies ROI, rather than adding another tool to manage.

Should we use an agency instead of automation software?

Agencies can be a good short-term capacity solution, but they may not close internal workflow gaps or build repeatable systems. If your bottleneck is operational speed and visibility, evaluate whether a unified system can compound results in-house.

How do we avoid buying another tool that doesn’t get adopted?

Run a scorecard-based evaluation that requires evidence for each criterion (e.g., how publishing works, how measurement is reported, what gets automated). Also confirm who owns the workflow internally and what “done” looks like in week 1–2.