Enterprise SEO Data Unification Platform Playbook: How Publishing Teams Replace Tool Sprawl with an SEO Operating System

Enterprise publishing teams don’t lose to competitors because they “lack SEO tools.” They lose because execution breaks at scale: data is scattered, handoffs multiply, and nobody can reliably connect what got published to what moved performance. That’s the Operations Gap—and it’s exactly what an SEO data unification platform for an enterprise publishing team is meant to close.

If you’re deciding whether to buy a unified platform or keep adding point solutions, start with the decision logic in our SEO Operating System vs SEO tools comparison. Then use the playbook below to evaluate vendors, align stakeholders, and build an internal justification based on operational outcomes, not tool features.

The problem you’re actually solving: the Operations Gap (not “more SEO tools”)

In enterprise publishing, SEO success is an operations problem disguised as a tooling problem. When the workflow depends on humans reconciling spreadsheets, dashboards, and CMS states, you don’t have an SEO program—you have a fragile chain of manual steps.

Symptoms in enterprise publishing teams (tool sprawl, manual handoffs, unclear ROI)

- Tool sprawl: keyword research here, content briefs there, editorial status in the CMS, reporting in BI, and “the truth” in someone’s spreadsheet.
- Manual handoffs: SEO → editorial → writers → editors → uploaders → QA, with status updates living in multiple places.
- Inconsistent execution: templates, internal linking rules, and on-page standards vary by team or brand because enforcement is manual.
- Reporting that can’t drive decisions: you can see outcomes (traffic/rankings) but can’t confidently tie them to specific operational actions (what changed, when, by whom).
- Slow publishing velocity: not because people aren’t working, but because the system forces repeated rework and reconciliation.

Why “data unification” fails when it’s only dashboards (no workflow + publishing loop)

Many “unification” approaches stop at visualization: pull multiple sources into a dashboard and call it a single source of truth. But if your workflow does not run on that unified data, nothing changes operationally. The dashboard becomes another destination teams check (sometimes), while execution still happens elsewhere.

Real unification closes the loop: unified data informs planning, planning drives publishing actions, and publishing actions are captured and reflected back into measurement.

What an SEO data unification platform is (and what it isn’t)

Definition for enterprise publishing teams: single source of truth + operational loop

An SEO data unification platform for an enterprise publishing team is a system that:

- Unifies key data sources into a reliable single source of truth (not just a view).
- Runs the publishing workflow on that unified foundation (planning → production → publish → update).
- Measures what matters by connecting operational actions (publishing/updates and workflow steps) to outcomes you can report.

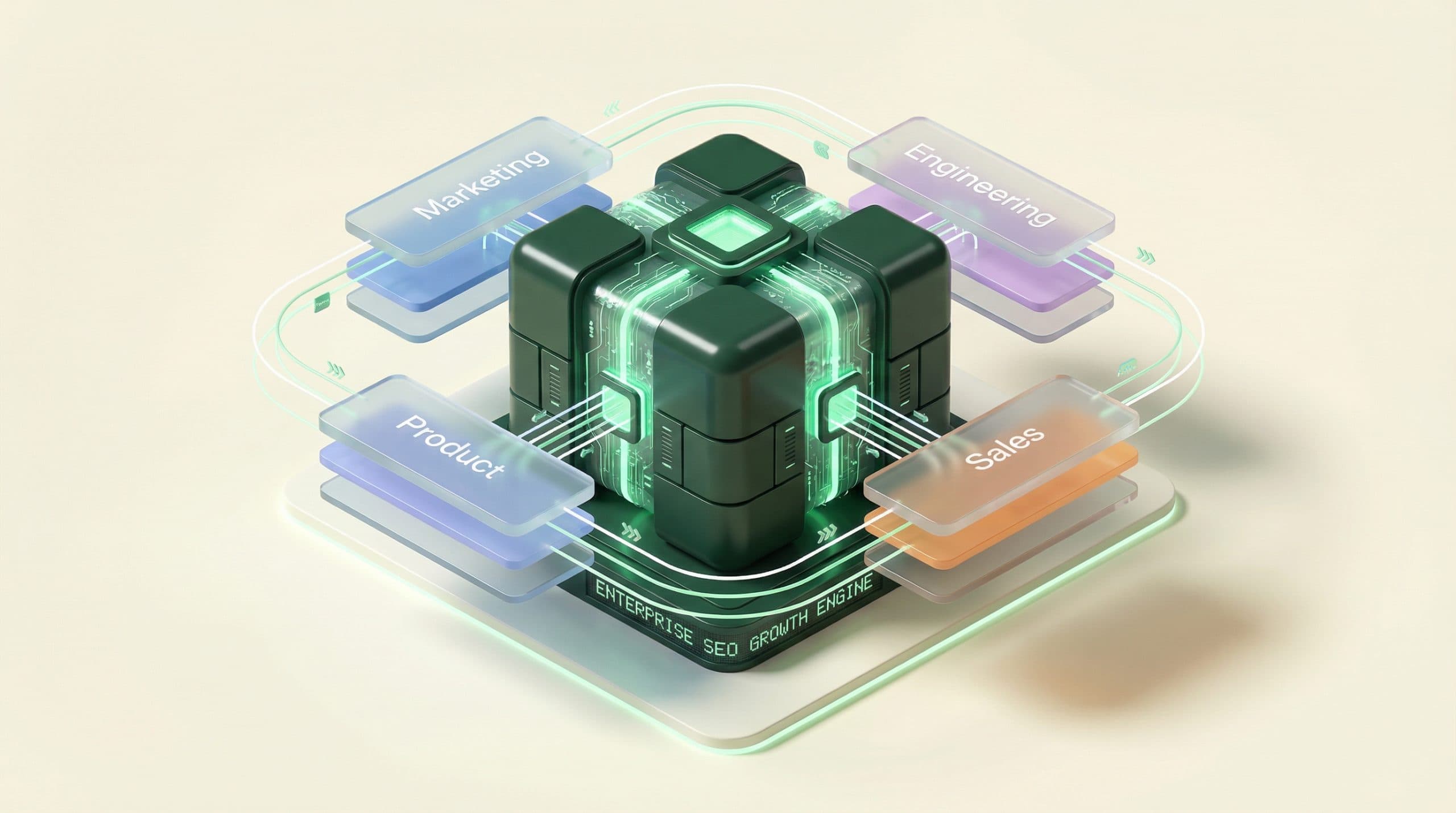

In Go/Organic terms, this is the difference between a pile of tools and an SEO Operating System that functions as a Growth Engine: Unify Your Stack, Automate Your Workflow (Velocity Engine™), and Measure What Matters as one operational loop.

Not a listicle of tools; not just reporting; not just content generation

- Not a tool roundup: you’re not optimizing individual tasks; you’re designing a system that scales.
- Not just reporting: dashboards don’t fix handoffs, governance, or execution speed.
- Not just content generation: publishing teams need repeatable operations, standards, QA, and accountability across humans and systems.

The evaluation playbook (use this checklist in your buying committee)

Use the following checklists to run a procurement-friendly evaluation. Each section includes pass/fail criteria so you can separate “nice-to-have” from “operationally necessary.”

Checklist A — Unify your stack (single source of truth)

- Pass/Fail: Does the platform create a single source of truth that the workflow actually uses (not just a dashboard layer)?
- Pass/Fail: Are integrations real and current (connected today), not “possible via custom work”?
- Pass/Fail: Can you map entities consistently (site → section → URL/page → topic/cluster → owner)?
- Pass/Fail: Can you reconcile content state across systems (what exists, what’s drafted, what’s live, what’s stale)?
- Pass/Fail: Can you track changes over time (what changed, when, by whom) so measurement isn’t guesswork?

Reality check on integrations: don’t let vendor demos imply connections that aren’t live for your team. For example, a platform might support WordPress and Bing Webmaster Tools today, while other sources (like Google Search Console or Shopify) may depend on current product status or roadmap. Your evaluation should require clarity in writing.
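To make the entity-mapping and change-tracking criteria in Checklist A concrete, here is a minimal Python sketch of what a unified page record could look like. Every name in it (`PageEntity`, `ChangeEvent`, the status and action labels) is a hypothetical illustration, not a description of any vendor's data model.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass(frozen=True)
class ChangeEvent:
    """One operational change: what changed, when, and by whom."""
    changed_on: date
    changed_by: str
    action: str  # illustrative labels, e.g. "publish", "refresh"


@dataclass
class PageEntity:
    """Hypothetical unified record: site -> section -> URL -> topic cluster -> owner."""
    site: str
    section: str
    url: str
    topic_cluster: str
    owner: str
    status: str = "draft"  # assumed states: draft, live, stale
    history: List[ChangeEvent] = field(default_factory=list)

    def record(self, event: ChangeEvent) -> None:
        """Append a change to this page's history."""
        self.history.append(event)

    def changes_since(self, start: date) -> List[ChangeEvent]:
        """Return changes made on or after the given date."""
        return [e for e in self.history if e.changed_on >= start]


# Usage with made-up data.
page = PageEntity(
    site="example.com",
    section="guides",
    url="https://example.com/guides/a",
    topic_cluster="seo-ops",
    owner="editor@example.com",
)
page.record(ChangeEvent(date(2024, 1, 10), "writer@example.com", "publish"))
page.record(ChangeEvent(date(2024, 3, 2), "seo@example.com", "refresh"))
recent = page.changes_since(date(2024, 2, 1))
```

During a demo, a useful test is whether the vendor can show the equivalent of `changes_since` for a real page without exporting to a spreadsheet.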

Checklist B — Automate your workflow (velocity from idea → publish)

- Pass/Fail: Does the platform standardize workflows across teams (brief → draft → edit → QA → publish → update) with clear stages?
- Pass/Fail: Does it reduce handoffs (fewer “copy into doc,” “update the sheet,” “paste into CMS” steps)?
- Pass/Fail: Does it support ownership (who is responsible for the page, the update, the result)?
- Pass/Fail: Can you enforce standards (templates, on-page checks, internal link rules) as part of the workflow?
- Pass/Fail: Can editorial ops run it day-to-day without a data analyst mediating every request?
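The difference between tracking standards and enforcing them can be sketched in a few lines of Python. The stage names follow the workflow in Checklist B; the `advance` function and the check names are assumptions made for illustration only.

```python
from typing import Dict, List

# Stage order taken from the checklist: brief -> draft -> edit -> QA -> publish -> update.
STAGES: List[str] = ["brief", "draft", "edit", "qa", "publish", "update"]


def advance(current: str, checks: Dict[str, bool]) -> str:
    """Return the next stage, refusing to leave "qa" while any check is failing.

    `checks` maps a hypothetical check name (e.g. "internal_links") to pass/fail.
    """
    idx = STAGES.index(current)  # raises ValueError for an unknown stage
    if idx == len(STAGES) - 1:
        return current  # "update" is the final stage in this sketch
    if current == "qa" and not all(checks.values()):
        failed = sorted(name for name, ok in checks.items() if not ok)
        raise ValueError(f"QA incomplete: {', '.join(failed)}")
    return STAGES[idx + 1]
```

In this sketch a page cannot reach `publish` with a failing check, which is the behavior to look for when a vendor says standards are "enforced" rather than "tracked."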

Checklist C — Measure what matters (connect ops actions to ROI)

- Pass/Fail: Can you attribute performance movement to operational actions (publish, refresh, optimization tasks), not just “time passed”?
- Pass/Fail: Does it report at the level leadership needs (portfolio, brand, section, topic/cluster, page) without custom one-off queries?
- Pass/Fail: Can you create a repeatable reporting cadence (weekly/monthly) that doesn’t collapse into manual reconciliation?
- Pass/Fail: Can you define leading indicators your team controls (workflow throughput, refresh cadence, QA completion) alongside lagging indicators (traffic, rankings)?
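One simple way to picture "performance tied to an operational action" is a before/after comparison around a dated event. The Python sketch below assumes you already have daily clicks per page and the date a refresh shipped; the function name, the 14-day window, and the sample numbers are all illustrative assumptions. It is a descriptive comparison, not causal attribution.

```python
from datetime import date, timedelta
from statistics import mean
from typing import Dict, Optional


def before_after_delta(
    daily_clicks: Dict[date, int],
    event_day: date,
    window_days: int = 14,
) -> Optional[float]:
    """Average daily clicks after an event minus the average before it.

    Returns None when either window has no data. This does not control for
    seasonality or for other changes shipped in the same period.
    """
    offsets = range(1, window_days + 1)
    before = [
        daily_clicks[d]
        for d in (event_day - timedelta(days=i) for i in offsets)
        if d in daily_clicks
    ]
    after = [
        daily_clicks[d]
        for d in (event_day + timedelta(days=i) for i in offsets)
        if d in daily_clicks
    ]
    if not before or not after:
        return None
    return float(mean(after) - mean(before))


# Usage with made-up numbers: a refresh shipped on 2024-03-15.
refresh_day = date(2024, 3, 15)
clicks = {refresh_day - timedelta(days=i): 100 for i in range(1, 15)}
clicks.update({refresh_day + timedelta(days=i): 130 for i in range(1, 15)})
delta = before_after_delta(clicks, refresh_day)
```

The point for evaluation is the input, not the arithmetic: this comparison is only possible if the platform records the event date alongside the performance data.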

Checklist D — Governance & enterprise readiness (roles, QA, auditability)

- Pass/Fail: Role-based access: can you separate responsibilities for SEO, editorial, writers, and stakeholders?
- Pass/Fail: Auditability: can you review what changed and who approved it?
- Pass/Fail: QA guardrails: can you prevent “publish now, fix later” as the default mode?
- Pass/Fail: Scalability: can the system support multiple sites/brands/sections without fragmenting into separate processes?

Want to pressure-test your current stack against these checklists? Compare SEO OS vs tools (use the decision rules).

Proof narrative: how to justify the platform internally (CFO/CMO/Editorial)

A bottom-of-funnel (BOFU) buyer usually needs more than a feature list. They need an internal story that explains why this spend is operationally necessary and how it reduces risk.

The before/after story: from fragmented execution to a Growth Engine

Before: Teams ship content, but execution quality varies. The “truth” is spread across docs, tools, and the CMS. Reporting is delayed, and SEO becomes reactive because it’s unclear what actions actually drove outcomes.

After: A unified platform becomes the operational layer: planning, production, publishing, and measurement run in one loop. SEO and editorial share a common operating view, and leadership gets reliable reporting tied to execution—not anecdotes.

The risk story: what breaks when you scale with disconnected tools

- Scaling risk: adding more writers or sites increases coordination cost faster than output.
- Governance risk: standards drift and QA becomes inconsistent.
- Measurement risk: you can’t defend budget because ROI is hard to attribute to actions.
- Operational risk: key workflows exist in people’s heads; turnover creates resets.

The measurement story: what you can finally attribute and report

- What shipped: what was published/updated, when, and under which initiative.
- What changed: optimizations, refreshes, and QA steps captured as operational events.
- What moved: performance trends reported in the context of what your team did.
- What to do next: operational priorities informed by unified data (not scattered opinions).

OS vs tools: decision rules (when tools are enough vs when you need an OS)

Your decision isn’t “platform vs tools” in the abstract—it’s whether your current approach can reliably produce outcomes as you scale.

If you have these conditions, tools can work (for now)

- One site (or a small set of pages) with limited publishing volume
- A small team where handoffs are minimal and informal communication works
- Light governance requirements and low cross-functional complexity
- Reporting expectations are basic and don’t require attribution to specific actions

If you have these conditions, you need an SEO Operating System

- Multiple teams/brands publishing at scale
- Frequent handoffs between SEO, editorial, and production
- Content lifecycle work (refreshes, pruning, QA) is as important as net-new publishing
- Leadership expects reliable reporting tied to execution and accountability
- Tool sprawl is already creating duplicate work and conflicting “sources of truth”

If you’re at that inflection point, use the comparison page to evaluate whether an SEO OS beats a stack of point tools before you commit to another year of workflow debt.

Implementation checklist (first 30 days)

This is a practical rollout plan you can use internally. Adjust sequencing based on your CMS, governance, and current reporting cadence.

Week 1: map sources + workflows + owners

- Inventory your current data sources (CMS, webmaster tools, commerce if applicable, analytics/BI)
- Document the current publishing workflow stages (including who owns each stage)
- List the manual reconciliations that slow you down (spreadsheets, copy/paste steps, status chasing)
- Define the minimum entities you must unify (site/section, page/URL, topic/cluster, owner, status)

Week 2: connect CMS + data sources; define the single source of truth

- Connect the CMS (e.g., WordPress) where applicable
- Connect available SEO data sources you will rely on (e.g., Bing Webmaster Tools)
- Define “truth rules” (which system owns what field; how conflicts are resolved)
- Validate data coverage and gaps (what’s missing, what’s delayed, what’s not captured)
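"Truth rules" are easier to agree on when they are written down as a table of field ownership. The Python sketch below shows one possible shape: each field has exactly one owning system, and conflicting values from other systems are ignored. The system names (`cms`, `webmaster_tools`, `workflow`) and field names are assumptions for illustration; your own rules will differ.

```python
from typing import Any, Dict

# Hypothetical truth rules: the single system that owns each unified field.
FIELD_OWNER: Dict[str, str] = {
    "status": "cms",
    "title": "cms",
    "clicks": "webmaster_tools",
    "owner": "workflow",
}


def resolve(records: Dict[str, Dict[str, Any]]) -> Dict[str, Any]:
    """Merge per-system records for one URL.

    Each field is taken only from its owning system, so a conflicting value
    reported by any other system never reaches the unified record.
    """
    unified: Dict[str, Any] = {}
    for field_name, system in FIELD_OWNER.items():
        value = records.get(system, {}).get(field_name)
        if value is not None:
            unified[field_name] = value
    return unified


# Usage with made-up data: the CMS and the webmaster tool disagree on two fields.
unified = resolve({
    "cms": {"status": "live", "title": "Guide A", "clicks": 5},
    "webmaster_tools": {"clicks": 120, "status": "unknown"},
    "workflow": {"owner": "editor@example.com"},
})
```

Fields that come back missing from `resolve` are also a quick way to surface the coverage gaps mentioned in the last item above.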

Week 3: standardize production workflow; reduce handoffs

- Standardize stages and definitions of done (brief ready, draft ready, edit complete, QA complete, publish)
- Establish templates/checklists for repeatable content types
- Assign ownership so work doesn’t stall in “someone will handle it” limbo
- Reduce duplicate tracking systems (retire or freeze at least one spreadsheet/status board)

Week 4: launch unified dashboard and ROI reporting cadence

- Define reporting views for leadership vs operators (portfolio vs queue)
- Implement a weekly operations cadence (throughput, bottlenecks, QA rates)
- Implement a monthly outcomes cadence (performance trends tied to shipped work)
- Document “how we measure” so reporting survives team changes
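As a starting point for the weekly operations cadence, the two leading indicators named above (throughput and QA rate) can be computed from very little data. This Python sketch assumes you can export a publish date and a QA flag per item; the function names and the `qa_done` key are hypothetical.

```python
from collections import Counter
from datetime import date
from typing import Dict, List, Tuple


def weekly_throughput(publish_dates: List[date]) -> Dict[Tuple[int, int], int]:
    """Count published items per ISO (year, week number)."""
    counts: Counter = Counter()
    for d in publish_dates:
        iso = d.isocalendar()
        counts[(iso[0], iso[1])] += 1
    return dict(counts)


def qa_completion_rate(items: List[dict]) -> float:
    """Share of items whose (assumed) "qa_done" flag is truthy; 0.0 if empty."""
    if not items:
        return 0.0
    return sum(1 for item in items if item.get("qa_done")) / len(items)


# Usage with made-up data.
throughput = weekly_throughput([date(2024, 1, 1), date(2024, 1, 3), date(2024, 1, 8)])
qa_rate = qa_completion_rate([
    {"url": "/a", "qa_done": True},
    {"url": "/b", "qa_done": False},
    {"url": "/c", "qa_done": True},
    {"url": "/d", "qa_done": True},
])
```

Writing the definitions down this explicitly is one way to make "how we measure" survive team changes.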

What to ask vendors (copy/paste questions)

Use these questions to force clarity during demos and security/procurement review. The goal is to confirm you’re buying an operational system, not another reporting layer.

Data & integrations questions (what’s truly connected vs “possible”)

- Which sources are integrated today (live, supported, documented) vs “available via custom work”?
- Are integrations one-way or two-way? What data can the platform write back to the CMS (if any)?
- How do you handle identity resolution (URL canonicalization, page variants, sections, multiple sites)?
- How do you handle data freshness and delays? What’s the typical update cadence per source?
- What happens when we change taxonomy, URL structures, or migrate content?
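Identity resolution is worth probing in detail, because the same page often appears under several URL variants across sources. The Python sketch below shows one possible normalization using only the standard library. The specific rules (force `https`, drop `www.`, drop any port, strip `utm_` parameters and fragments, trim trailing slashes) are assumptions for illustration; the right rules depend on how your sites actually serve pages.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: query parameters with these prefixes are tracking noise.
TRACKING_PREFIXES = ("utm_",)


def canonical_key(url: str) -> str:
    """Reduce URL variants to one join key (illustrative rules, not a standard)."""
    parts = urlsplit(url.strip())
    host = (parts.hostname or "").lower()  # hostname excludes any port
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/") or "/"  # path case is preserved
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query)
        if not key.lower().startswith(TRACKING_PREFIXES)
    )
    # Scheme is forced to https and the fragment is dropped.
    return urlunsplit(("https", host, path, urlencode(kept), ""))


# Usage: two variants of the same page resolve to the same key.
key_a = canonical_key("HTTP://WWW.Example.com/Guides/A/?utm_source=x&b=2&a=1#top")
key_b = canonical_key("https://example.com/Guides/A?a=1&b=2")
```

Asking a vendor which of these rules they apply, and whether you can change them, usually reveals how well the platform will cope with migrations and URL structure changes.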

Workflow automation questions (where velocity is real vs manual)

- Show the exact workflow from topic selection to publish: where does the work actually happen?
- Which handoffs are eliminated vs simply “tracked”?
- How do you enforce QA and standards (checklists, approvals, required fields)?
- How do roles work for SEO, editors, writers, and stakeholders?
- What does a typical editorial ops user do daily/weekly inside the system?

Measurement questions (how actions map to outcomes)

- How does the platform capture operational actions (publish, refresh, optimization tasks) as events?
- How do you connect those events to reporting so we can explain performance movement?
- Can we report by section/topic/initiative and see what shipped in that period?
- What’s the recommended cadence for reporting and decision-making?
- How do you prevent “vanity reporting” and keep measurement tied to controllable actions?

Next step: compare OS vs tools and validate pricing fit

If the checklists above describe your reality—tool sprawl, workflow friction, and unclear attribution—you’re likely past the point where adding another point tool helps. The practical next step is to confirm that an Operating System model matches your constraints (workflow, governance, measurement) and then validate commercial fit.

- Use the comparison to align your buying committee: SEO Operating System vs SEO tools comparison.
- Then self-qualify quickly on budget and packaging: Go/Organic pricing for enterprise publishing teams.

Ready to move from evaluation to a concrete decision? Review pricing and see what plan fits your team.

FAQ

What is an SEO data unification platform for enterprise publishing teams?

It’s a system that connects your CMS and SEO data sources into a single source of truth and then uses that unified foundation to run the publishing workflow (from planning to publishing) while tying operational actions to measurable outcomes. The key difference vs reporting tools is that unification is connected to execution, not just dashboards.

How is an SEO Operating System different from an SEO tool stack?

A tool stack typically optimizes individual tasks (research, writing, reporting) but leaves handoffs, data reconciliation, and accountability to humans. An SEO Operating System is designed to close the Operations Gap by unifying data, automating workflow velocity, and measuring what matters in one operational loop.

What proof should I bring to stakeholders to justify a data unification platform?

Bring a narrative that links operational friction to business risk: slower publishing velocity, inconsistent execution, and unclear ROI. Then show the alternative: a unified stack, an automated workflow, and a consistent measurement cadence that together reduce manual work and make performance attributable to actions.

When are point tools enough—and when do you need a unified platform?

Point tools can be enough when volume is low, workflows are simple, and reporting needs are lightweight. You likely need a unified platform when multiple teams publish at scale, handoffs are frequent, data lives in silos, and leadership expects reliable growth reporting tied to execution.

What should I ask vendors to confirm they truly unify data (not just visualize it)?

Ask what sources are connected today vs “possible,” whether integrations are two-way, how the system handles governance and auditability, and how workflow actions (publishing, updates, production steps) are captured and reflected in performance reporting.