SEO OS vs SEO Tools: Checklist to Choose Right

SEO Operating System vs SEO Tools: The BOFU Checklist to Close the Operations Gap

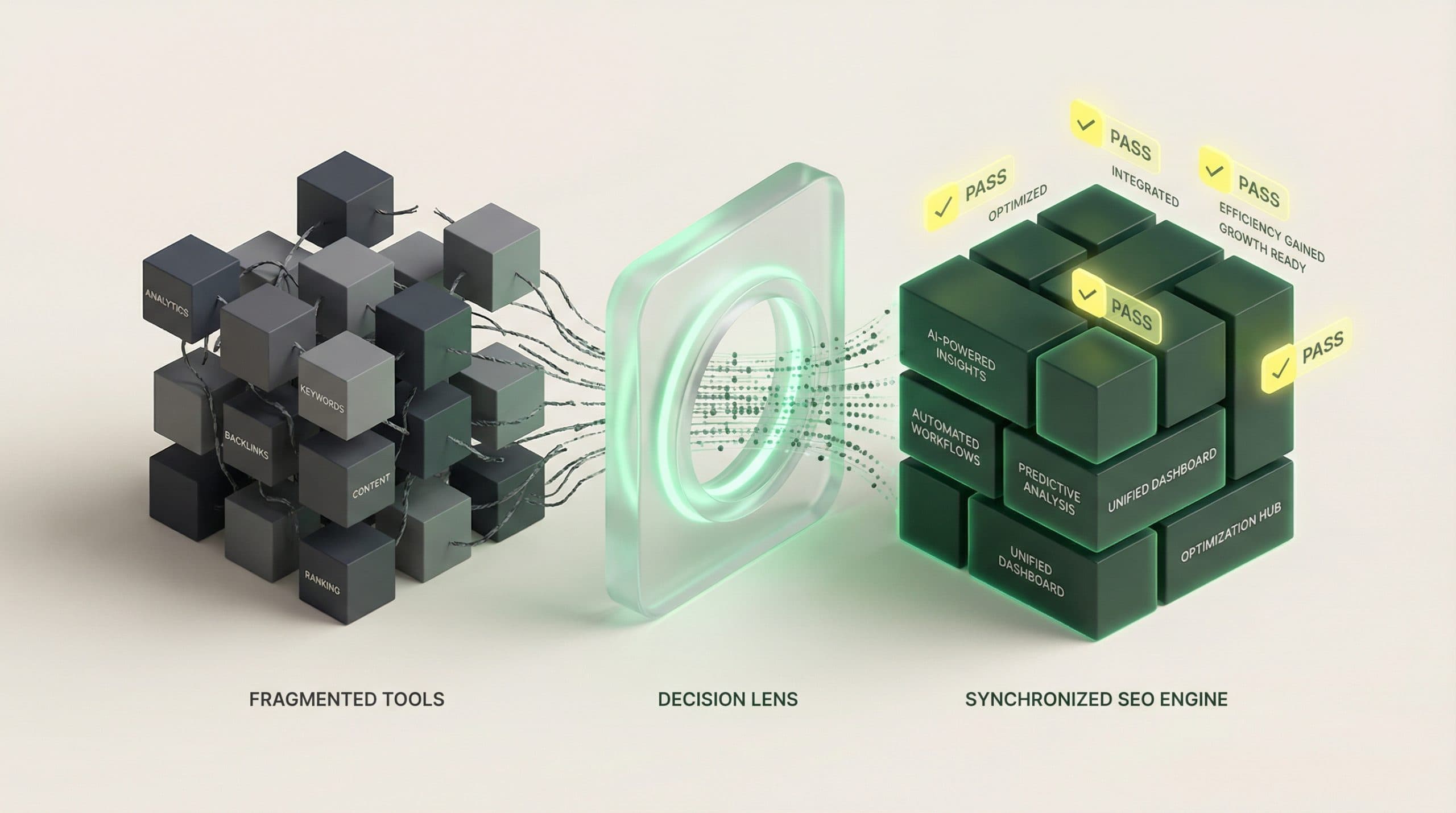

Most BOFU “platform vs tools” decisions get framed as a feature debate: rank tracking vs audits, keyword research vs dashboards, AI writing vs content briefs. But for a Head of SEO/Growth, the question that actually determines ROI is operational: does your stack run the work end-to-end, or does it just support pieces of it?

That distinction is where the Operations Gap lives—between insights and execution, between content created and content shipped, and between tasks completed and outcomes measured.

If you’re actively evaluating an SEO Operating System against a stack of SEO tools, start with the SEO Operating System vs SEO tools comparison hub for a product-led view. Then use the playbook below to self-qualify quickly and make a decision you can defend with workflow and ROI logic rather than tool opinions.

The real comparison: features vs operations

Tools can be excellent. The problem is that most SEO programs don’t fail because the team lacks a specific feature—they fail because execution becomes a chain of handoffs across disconnected systems, with no single source of truth.

What most teams mean by “SEO tools” (point solutions + dashboards)

In practice, an “SEO tools” approach usually looks like:

- Point solutions for research, auditing, and tracking
- Docs/spreadsheets for briefs, SOPs, approvals, and QA
- Design tools for visuals
- A CMS for publishing
- Dashboards/reports built after the fact to explain results

This can work—especially when volume is low and ownership is crystal clear. The hidden cost shows up when you try to scale throughput, quality, and reporting at the same time.

What an “SEO Operating System” changes (single workflow + single source of truth)

An SEO Operating System is built to run the operation, not just inform it. The goal is to reduce (or eliminate) manual handoffs by unifying the workflow and the data behind it.

Conceptually, an SEO OS prioritizes:

- Single workflow: idea → draft → visuals → publish (with governance)
- Single source of truth: operational data + performance data tied to the work
- Velocity by design: cycle time shrinks because steps are connected
- ROI narrative: you can explain what you shipped, why, and what it produced

For Go/Organic specifically, that OS framing maps to capabilities like a Connectivity Suite, Content Engine, Visual Operations Suite, Publishing Engine, Velocity Engine™, and a unified dashboard/ROI narrative—without requiring you to duct-tape every step together manually.

Proof narrative: how tool stacks create the Operations Gap

The Operations Gap isn’t theoretical. It shows up as measurable operational drag. Here are the most common failure modes when teams rely on stacks of tools plus manual coordination.

Symptom 1 — velocity slows (handoffs, rework, approvals)

Velocity dies in the transitions:

- Brief written in one place, interpreted in another
- Draft created, then rewritten after SME review because requirements weren’t clear
- Visuals requested late, delaying publish
- On-page QA happens after upload, forcing another revision loop

Operational tell: time-to-publish is measured in weeks, even for “simple” updates.

Symptom 2 — quality drifts (inconsistent briefs, visuals, on-page standards)

Even strong teams struggle to maintain consistency when standards live in scattered docs and tribal knowledge. Common drift points:

- Inconsistent intent mapping and page structure
- Uneven internal linking and on-page requirements
- Visual quality varies by contributor and deadline pressure
- Publish checks happen too late (or not at all)

Operational tell: rework rate climbs, and “why didn’t this perform?” becomes a recurring post-mortem question.

Symptom 3 — ROI gets fuzzy (data silos, attribution arguments)

When execution lives in one set of tools and performance lives in another, reporting turns into reconciliation:

- What shipped this week (and what changed) is hard to summarize
- Performance changes don’t map cleanly to specific actions
- Stakeholders debate attribution instead of approving more investment

Operational tell: the team can show activity, but struggles to tell a credible ROI story without hours of manual work.

The decision checklist (use this to choose OS vs tools)

Use the checklists below like a BOFU scorecard. If your current stack lets you answer “yes” to most items, tools may be enough. If you’re repeatedly answering “no” or “not reliably,” you’re looking at an operations problem, and an SEO OS becomes the more rational investment.

Checklist A — Workflow continuity (idea → draft → visuals → publish)

- One workflow exists for ideation, outlining, drafting, visuals, and publishing (not five disconnected processes).
- Handoffs are explicit (owners, due dates, QA gates), not informal Slack messages.
- Approvals don’t reset work because requirements were captured upfront.
- Publishing is part of the system, not a separate “last-mile scramble.”

Decision prompt: If your bottleneck is “getting content shipped,” your limiting factor isn’t another SEO report—it’s workflow continuity.

Checklist B — Stack unification (CMS + data sources into one source of truth)

- Your CMS is connected to the operation (not just used at the end).
- You can tie work items to URLs and keep that relationship intact through updates.
- Data doesn’t require manual stitching to explain what happened.
- Integrations match your reality (what’s connected vs what isn’t) and you have a plan for gaps.

Example integration reality check (because this matters operationally): Go/Organic connects with WordPress and WooCommerce, and also supports a connected path for Bing Webmaster Tools. It does not currently connect to Google Search Console or Shopify—so if your process depends on those being natively connected on day one, you’ll want to plan accordingly.

Checklist C — Automation & velocity (what can be done in minutes vs days)

- Routine steps are automated (templated creation, repeatable QA, faster publishing flows).
- Cycle time is visible (you can measure time-in-stage, not just “tasks completed”).
- Throughput can scale without adding proportional meetings and coordinators.
- Work is designed for velocity, not for “heroic” last-minute pushes.

If you’re actively comparing approaches, this is where it’s most useful to evaluate Go/Organic’s SEO OS vs a traditional SEO tool stack: can you move one content item from idea to published with fewer handoffs and less rework, while keeping standards intact?
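Checklist C’s “cycle time is visible” item assumes you can turn stage changes into durations. Here is a minimal sketch of that calculation, assuming your workflow records an ordered log of `(stage, entered_at)` events per content item. The `time_in_stage` name and the log shape are hypothetical illustrations, not a Go/Organic or CMS API.

```python
from datetime import datetime, timedelta


def time_in_stage(transitions: list[tuple[str, datetime]]) -> dict[str, timedelta]:
    """Total time each stage held the item, from (stage, entered_at) events.

    Hypothetical log format. The last event (e.g. "published") is treated as
    terminal and gets no duration. Re-entered stages accumulate, so rework
    loops stay visible instead of overwriting earlier time.
    """
    ordered = sorted(transitions, key=lambda event: event[1])
    durations: dict[str, timedelta] = {}
    # Pair each event with the next one: the gap is time spent in the earlier stage.
    for (stage, entered), (_, left) in zip(ordered, ordered[1:]):
        durations[stage] = durations.get(stage, timedelta()) + (left - entered)
    return durations
```

Summing per stage means an item that moves brief → draft → review → draft → review → published reports its total draft time across both passes, and the sum of all stage durations equals the item’s end-to-end cycle time.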

Checklist D — Measurement that ties actions to outcomes (dashboard/ROI narrative)

- Leading indicators are defined (time-to-publish, throughput/week, rework rate, QA pass rate).
- Outcome metrics are defined (organic traffic/value, conversions/revenue where applicable).
- You can explain causality credibly: what changed, when, and why that should affect performance.
- Reporting is built into operations, not recreated monthly in spreadsheets.

Decision prompt: If you can’t connect “what we shipped” to “what we got,” stakeholders will eventually cap investment—regardless of tool quality.
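The leading indicators named in Checklist D can be computed from a handful of fields per work item. This is a sketch under stated assumptions: the `WorkItem` fields, the use of the median for time-to-publish, and counting rework and QA only over shipped items are illustrative choices, and your team may define each metric differently.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class WorkItem:
    # Hypothetical fields; map them to whatever your workflow actually records.
    started: date
    published: Optional[date]  # None while the item is still in progress
    rework_loops: int          # times the item was sent back to an earlier stage
    passed_qa_first_try: bool  # True if it cleared QA without a revision


def leading_indicators(items: list[WorkItem], window_weeks: float) -> dict[str, float]:
    """Compute time-to-publish, throughput, rework rate, and QA pass rate for one window."""
    shipped = [i for i in items if i.published is not None]
    if not shipped:
        return {"median_time_to_publish_days": 0.0, "throughput_per_week": 0.0,
                "rework_rate": 0.0, "qa_pass_rate": 0.0}
    days = [(i.published - i.started).days for i in shipped]
    return {
        "median_time_to_publish_days": float(median(days)),
        "throughput_per_week": len(shipped) / window_weeks,
        # Share of shipped items that needed at least one rework loop.
        "rework_rate": sum(i.rework_loops > 0 for i in shipped) / len(shipped),
        # Share of shipped items that passed QA on the first attempt.
        "qa_pass_rate": sum(i.passed_qa_first_try for i in shipped) / len(shipped),
    }
```

The median keeps one stalled item from distorting time-to-publish; if stakeholders care about worst cases, report a high percentile alongside it.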

Checklist E — Ownership & governance (who runs it, how standards are enforced)

- There’s a single owner for the SEO operation (not just for isolated tasks).
- Standards are enforceable via templates, QA, and publishing gates.
- Roles are clear across SEO, content, design, and engineering.
- Work is auditable: decisions, changes, and outputs can be reviewed.

CTA: Compare SEO OS vs SEO tools (and see what closes the Operations Gap)

Fit guide: when SEO tools are enough vs when you need an SEO OS

Tools are enough if… (low volume, simple workflow, clear ownership)

- You publish infrequently (or mostly update a small set of pages).
- One person can move work from brief to publish with minimal dependencies.
- Your standards are simple and consistently applied.
- Reporting is straightforward and doesn’t require cross-team reconciliation.

Decision prompt: If your main pain is “we need better insights,” tools may be the right next step.

You need an SEO OS if… (multi-step workflow, multiple stakeholders, scaling content)

- Content moves through multiple people/teams (SEO → writer → SME → design → publisher).
- Publishing is a bottleneck (CMS access, formatting, QA, approvals).
- You need more throughput without sacrificing quality.
- You’re spending too much time coordinating work and rebuilding reports.

Decision prompt: If your main pain is “we can’t ship fast enough with confidence,” an OS is usually the higher-leverage fix.

Implementation playbook: how to evaluate an SEO OS in 30 days

Don’t evaluate an SEO OS by clicking around features. Evaluate it by running one full workflow end-to-end and measuring operational deltas.

Week 1 — Unify your stack (connect CMS + available data sources)

- Pick a representative content type (e.g., one new article or one revenue page refresh).
- Connect the CMS you actually use (e.g., WordPress/WooCommerce if applicable).
- Confirm which data sources are connected vs not (plan around gaps rather than assuming them away).
- Define your baseline metrics: current time-to-publish, weekly throughput, rework rate, and QA pass rate, so Week 4 has something to compare against.

Week 2 — Automate workflow (from idea → illustrated → published)

- Run one item through the content workflow using consistent templates.
- Bring visuals into scope early (so you can test the real bottleneck).
- Publish through the system (don’t export into a separate, manual process if the OS is meant to eliminate handoffs).

Week 3 — Establish operating standards (templates, QA, roles)

- Define “done” with an operational checklist (intent met, on-page standards, internal links, visuals, metadata).
- Assign owners per stage and create a repeatable approval path.
- Track rework explicitly: what changed, why, and which stage caused it.

Week 4 — Measure what matters (dashboard that connects ops actions to ROI)

- Compare baseline vs evaluation period: cycle time, throughput, rework rate, QA pass rate.
- Build the narrative: what shipped, what changed on-page, and expected impact.
- Set a measurement cadence (weekly ops + monthly outcome review) so the system stays accountable.

If the 30-day test shows a meaningful cycle-time reduction without quality loss, the next step is scoping rollout and budget. From there, review Go/Organic pricing and plans to estimate ROI and plan the adoption path.
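The Week 4 comparison and the go/no-go test above reduce to simple arithmetic on baseline and evaluation metrics. A minimal sketch, assuming both periods are summarized under the same hypothetical metric keys (with `time_to_publish_days` standing in for cycle time). The direction table and the `min_reduction_pct` threshold for a “meaningful” reduction are assumptions your team should set, not fixed benchmarks.

```python
# Hypothetical metric keys; True means a higher value is better.
HIGHER_IS_BETTER = {
    "time_to_publish_days": False,
    "throughput_per_week": True,
    "rework_rate": False,
    "qa_pass_rate": True,
}


def compare_periods(baseline: dict[str, float], evaluation: dict[str, float]) -> dict[str, tuple[float, bool]]:
    """Return {metric: (percent_change, improved)} for metrics with a non-zero baseline."""
    report: dict[str, tuple[float, bool]] = {}
    for metric, higher_is_better in HIGHER_IS_BETTER.items():
        before, after = baseline.get(metric), evaluation.get(metric)
        if before is None or after is None or before == 0:
            continue  # no usable baseline, so a percent change would be meaningless
        change = (after - before) / before * 100
        improved = change > 0 if higher_is_better else change < 0
        report[metric] = (round(change, 1), improved)
    return report


def pilot_passed(report: dict[str, tuple[float, bool]], min_reduction_pct: float) -> bool:
    """Go/no-go: time-to-publish fell by at least `min_reduction_pct` and QA pass rate did not drop.

    An unmeasured QA pass rate fails the check: "no quality loss" has to be shown, not assumed.
    """
    cycle = report.get("time_to_publish_days")
    quality = report.get("qa_pass_rate")
    return (cycle is not None and -cycle[0] >= min_reduction_pct
            and quality is not None and quality[0] >= 0)
```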

CTA: See pricing to estimate ROI and rollout scope

Common objections (and how to test them)

“All-in-one platforms are rigid” → test with one workflow end-to-end

This objection is often true for generic suites. The test isn’t “does it have every feature?” It’s:

- Can you run your real workflow without creating parallel processes?
- Can you enforce your standards while increasing shipping velocity?
- Can the OS support governance (roles, QA, approvals) without slowing you down?

“We already have tools” → test the handoffs and time-to-publish

Keep your tools if they’re working. The question is whether the stack creates coordination overhead. Run a quick audit:

- Count the handoffs from idea to publish.
- Measure waiting time between stages.
- Track how often work gets redone due to missing requirements.

If the majority of time is “waiting and rework,” adding another tool typically increases complexity rather than reducing it.
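The handoff audit can be run on a single content item by logging each segment of its journey. This sketch assumes a hypothetical record per segment (who held the work, whether the time was active, waiting, or rework, and for how long) and counts a handoff whenever the owner changes; `Segment` and `audit` are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    # Hypothetical audit record for one stretch of an item's journey.
    owner: str    # e.g. "seo", "writer", "sme", "publisher"
    kind: str     # "active", "waiting", or "rework"
    hours: float


def audit(segments: list[Segment]) -> dict[str, float]:
    """Count owner-to-owner handoffs and the share of elapsed time spent waiting or redoing work."""
    handoffs = sum(1 for a, b in zip(segments, segments[1:]) if a.owner != b.owner)
    total = sum(s.hours for s in segments)
    overhead = sum(s.hours for s in segments if s.kind in ("waiting", "rework"))
    return {"handoffs": handoffs, "overhead_share": overhead / total if total else 0.0}
```

An `overhead_share` above 0.5 means most of the elapsed time is waiting and rework, which is the pattern the paragraph above describes.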

“Proving ROI is hard” → define leading indicators + outcome metrics upfront

ROI gets “hard” when measurement starts after publishing. Define it before you begin:

- Leading indicators: time-to-publish, throughput, rework rate, QA pass rate
- Outcomes: organic traffic/value, assisted conversions, revenue where applicable

Then require a single narrative that connects operational outputs (what shipped) to expected and observed outcomes.

Next step: run the comparison and pick the right path

If your evaluation is really about whether you can scale SEO like a growth engine, don’t decide based on feature checkboxes. Decide based on whether your approach closes the Operations Gap:

- Fewer handoffs
- Higher throughput
- Consistent quality
- Clear ROI narrative

Run the full OS vs tools comparison before you commit to another stack—and use the checklists above to pressure-test fit in a way stakeholders will respect.

FAQ

What is an SEO Operating System (SEO OS) vs SEO tools?

SEO tools are typically point solutions (research, tracking, auditing) that support parts of the job. An SEO Operating System is designed to run the end-to-end operation—unifying data, content creation, and publishing workflows so execution speed and measurement stay connected.

How do I know if my team has an “Operations Gap”?

If work moves through disconnected tools and manual handoffs (briefs in docs, drafts in another tool, images elsewhere, publishing in the CMS, reporting in spreadsheets), you likely have an Operations Gap. The tell is slow time-to-publish and unclear linkage between actions taken and results achieved.

Can’t I just add more SEO tools to fix the problem?

Adding tools can improve individual tasks, but it often increases coordination overhead. If the bottleneck is workflow continuity and measurement across steps, the fix is usually operational: unify the stack, automate handoffs, and measure outcomes in one place.

What should I test during a 30-day evaluation?

Test one complete workflow end-to-end: unify your CMS and available data sources, run the content workflow from idea to publish, and confirm you can measure leading indicators and outcomes without manual reconciliation.

What metrics matter most when comparing an SEO OS vs tools?

Track both velocity and outcomes: time-to-publish, throughput per week, rework rate, and consistency of on-page standards—then connect those operational metrics to organic performance and ROI reporting.