SEO Operations Gap: Definition, Symptoms, and a Playbook to Close It

SEO Operations Gap: What It Is, How to Diagnose It, and How to Close It

If your team publishes content but leadership still asks, “What did SEO actually deliver?” you’re likely dealing with an SEO Operations Gap. It’s not a motivation problem or a “write better content” problem—it’s an operations problem that slows execution and obscures ROI.

What you’ll learn:

-

A clear definition of the SEO Operations Gap (and how it differs from an SEO gap analysis)

-

The most common root causes: tools, workflow, and measurement

-

A diagnostic checklist + scorecard you can use this week

-

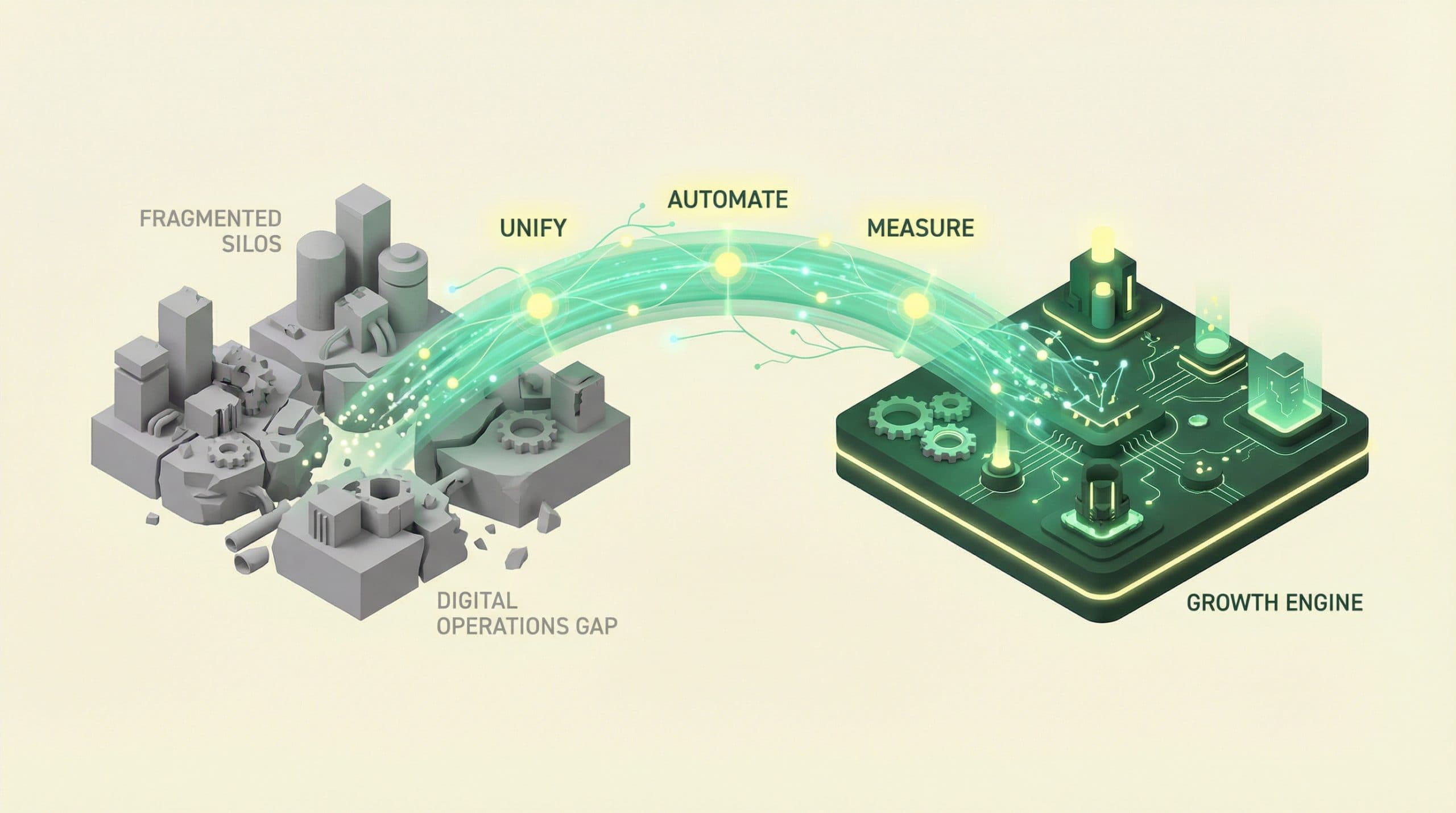

A practical framework to close the gap: Unify → Automate → Measure

-

Templates, checklists, and common pitfalls to avoid

What is the SEO Operations Gap?

The SEO Operations Gap is the disconnect between SEO work getting done (content creation, updates, publishing tasks, optimizations) and your ability to reliably produce and prove measurable organic results.

When the gap is open, SEO becomes:

- Slow (ideas take weeks to ship)
- Inconsistent (standards drift across writers, pages, and releases)
- Hard to defend (no clean line from actions → outcomes → ROI)

This hub focuses on operations: the systems, workflow, and measurement that keep strategy from dying in spreadsheets, tickets, and handoffs.

SEO Operations Gap vs. “SEO gap analysis”

Many guides focus on SEO gap analysis (content gaps, keyword gaps, link gaps). Those are useful—but they don’t solve the core execution problem: turning decisions into shipped work and measurable impact. The SEO Operations Gap is about the machinery that makes results repeatable.

Why the SEO Operations Gap happens (common root causes)

Disconnected tools and data silos

SEO touches multiple systems (CMS, ecommerce platform, webmaster tools, analytics, reporting). When those systems don’t behave like a single source of truth, you get manual reporting, conflicting numbers, and delayed decisions.

Typical outcomes:

- URL inventories that are always out of date
- Performance insights locked in separate tools
- “What changed?” investigations that take hours

Manual, multi-handoff workflows

Most teams run a multi-stage workflow (idea → brief → draft → visuals → upload → publish → optimize). The more handoffs and manual steps, the more cycle time expands—and the harder it is to maintain consistent quality.

Typical outcomes:

- Publishing bottlenecks (someone becomes the single point of failure)
- Duplicated work (rewrites, reformatting, missing assets)
- QA inconsistency (metadata, internal links, images, schema, etc.)

Measurement that doesn’t connect actions to outcomes

When teams track outputs (articles shipped) but can’t tie operational actions to outcomes (rankings, traffic, conversions, revenue where applicable), prioritization becomes opinion-driven. Leadership loses confidence because ROI is hard to prove.

Symptoms: how to tell you have an SEO Operations Gap

Use the checklist below as a quick diagnostic. The more “yes” answers, the more likely operations—not strategy—is your growth constraint.

- Time-to-publish is long: It regularly takes 2–6+ weeks from idea to live page.
- Handoffs are unclear: Work gets stuck between writer, designer, SEO, and publisher.
- On-page standards drift: Titles, meta descriptions, internal links, and image practices vary by author.
- Work is duplicated: People reformat drafts, recreate briefs, or rebuild reports repeatedly.
- Reporting takes days: Weekly/monthly SEO reporting requires manual exports and stitching.
- Content updates don’t happen: Refreshing existing pages is ad hoc, not a planned sprint.
- Requests are trapped in tickets: SEO fixes are queued indefinitely with no operational SLA.
- It’s hard to answer “what changed?”: You can’t quickly connect publishing actions to performance shifts.

SEO Ops Gap Scorecard (Velocity, Visibility, Verifiability)

Score each dimension from 1–5. Total possible: 15.

- Velocity (1–5): How fast do ideas become published, optimized pages with consistent QA?
- Visibility (1–5): Do you have a single source of truth for URLs, status, changes, and performance inputs?
- Verifiability (1–5): Can you connect shipped actions (publish/update/optimize) to measurable outcomes and ROI?

Interpretation:

- 12–15: Strong SEO operations. Focus on scaling experiments and refresh loops.
- 8–11: Mixed. One weak area is likely throttling growth.
- 3–7: Operations are the constraint. Fix workflow + measurement before “more content.”
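If you track the scorecard in a script or notebook rather than a spreadsheet, the arithmetic above can be sketched roughly as follows. This is a minimal illustration, not a prescribed tool: the class name `OpsGapScore`, the band labels, and the `weakest_dimension` helper are assumptions made for the example. Only the 1–5 scale and the 3–7 / 8–11 / 12–15 bands come from the scorecard itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OpsGapScore:
    """One scorecard reading; each dimension is scored from 1 to 5."""

    velocity: int
    visibility: int
    verifiability: int

    def __post_init__(self) -> None:
        # Reject scores outside the 1-5 scale used by the scorecard.
        for name in ("velocity", "visibility", "verifiability"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def total(self) -> int:
        return self.velocity + self.visibility + self.verifiability

    def interpretation(self) -> str:
        # Bands: 12-15 strong, 8-11 mixed, 3-7 operations are the constraint.
        if self.total >= 12:
            return "strong"
        if self.total >= 8:
            return "mixed"
        return "operations are the constraint"

    def weakest_dimension(self) -> str:
        # Hypothetical helper: ties resolve in declaration order.
        scores = {
            "velocity": self.velocity,
            "visibility": self.visibility,
            "verifiability": self.verifiability,
        }
        return min(scores, key=scores.get)


score = OpsGapScore(velocity=2, visibility=4, verifiability=3)
print(score.total, score.interpretation(), score.weakest_dimension())
```

Re-scoring on a fixed schedule and keeping the readings makes it easier to see whether the weakest dimension is actually improving.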

Operations Gap in one sentence: when your team is busy shipping SEO tasks, but your system can’t reliably turn that work into provable outcomes.

Want to close the SEO Operations Gap with a unified operating system?

Explore how an SEO Operating System can help unify your stack, automate the workflow from idea → illustrated → published, and connect operational actions to measurable results—without adding more manual steps.

See the SEO Operating System

The cost of the gap (what it does to growth)

Speed: slower iteration means fewer learning loops

SEO compounds through iteration: publish, measure, refresh, and improve. When operations are slow, you run fewer experiments, miss seasonal windows, and take longer to learn what works.

Quality: standards drift across writers, pages, and releases

Without a repeatable Definition of Done, on-page fundamentals become inconsistent: internal linking, image workflow, metadata quality, and publishing QA. That inconsistency makes performance harder to predict.

ROI: leadership loses confidence when attribution is unclear

If results can’t be tied back to actions, SEO becomes “hard to prove,” which often becomes “hard to fund.” The Operations Gap turns SEO into a cost center—regardless of the underlying opportunity.

A practical framework to close the SEO Operations Gap

This playbook follows a simple sequence used by high-performing teams: Unify → Automate → Measure. The goal is to turn SEO into a reliable operating system, not a collection of one-off projects.

Step 1: Unify your stack into a single source of truth

You don’t need fewer tools—you need your stack to behave like one system. Unification means your team can see, in one place:

- What exists (URL inventory)
- What’s changing (publish/update log)
- What happened after (performance signals and outcomes)

Implementation artifacts:

- Data inventory template
  - Source (CMS, ecommerce, webmaster tools, analytics)
  - Owner
  - Refresh cadence (daily/weekly/monthly)
  - Key fields you rely on
  - Where it lives (link)
- Content inventory fields
  - URL
  - Primary intent
  - Status (planned/drafting/published/refresh-needed)
  - Last updated
  - Target query/topic
  - Internal links (to/from)
  - Notes (issues, hypotheses)
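For teams that keep the content inventory in code or export it from a CMS, the content inventory fields listed above could be modeled along these lines. This is a sketch under stated assumptions: the names `InventoryRow`, `Status`, and `urls_by_status` are hypothetical, and the example URLs are placeholders. Only the field list and the four status values come from the template.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum
from typing import Optional


class Status(Enum):
    PLANNED = "planned"
    DRAFTING = "drafting"
    PUBLISHED = "published"
    REFRESH_NEEDED = "refresh-needed"


@dataclass
class InventoryRow:
    """One row of the content inventory (field names are illustrative)."""

    url: str
    primary_intent: str
    status: Status
    target_query: str
    last_updated: Optional[date] = None
    internal_links_to: list[str] = field(default_factory=list)
    internal_links_from: list[str] = field(default_factory=list)
    notes: str = ""


def urls_by_status(rows: list[InventoryRow]) -> dict[Status, list[str]]:
    """Group URLs by workflow status so stalled work is easy to spot."""
    grouped: dict[Status, list[str]] = {status: [] for status in Status}
    for row in rows:
        grouped[row.status].append(row.url)
    return grouped


rows = [
    InventoryRow("https://example.com/guide", "informational",
                 Status.PUBLISHED, "seo operations", date(2024, 1, 15)),
    InventoryRow("https://example.com/pricing", "commercial",
                 Status.REFRESH_NEEDED, "seo platform pricing"),
]
print(urls_by_status(rows)[Status.REFRESH_NEEDED])
```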

Start with unifying your data sources

If your biggest bottleneck is disconnected tools and data silos, start by connecting your CMS and key data sources into a single source of truth.

Explore the Connectivity Suite

Step 2: Automate the workflow from idea → illustrated → published

Closing the gap requires reducing cycle time without sacrificing standards. Start by mapping your workflow end-to-end, then remove unnecessary handoffs and automate repeatable steps.

Common workflow stages to standardize:

- Idea selection and prioritization
- Brief creation
- Drafting
- Visual creation (images/illustrations)
- On-page QA
- Publishing
- Post-publish checks (indexing, internal links, early performance)

Teams often lose the most time in brief approvals, visual production, and CMS uploading/publishing. A velocity-focused approach (such as a Velocity Engine™ concept) aims to compress those bottlenecks so that shipping becomes routine.

Implementation artifacts:

- SOP outline
  - Input: target query, intent, page type, internal link targets
  - Process: brief → draft → visuals → QA → publish
  - Output: published URL + change log entry
- Definition of Done
  - Title + meta description drafted and reviewed
  - H1/H2 structure matches intent
  - Internal links added (at least X to relevant pages, and X from relevant pages if applicable)
  - Images added with descriptive file names + alt text where appropriate
  - Schema added where applicable (use what’s appropriate for the page type)
  - Published + logged (date, owner, what changed)

Step 3: Measure what matters (connect ops actions to outcomes)

Measurement closes the loop. If you can’t tie actions to outcomes, you can’t defend prioritization—or know what to repeat.

Minimal KPI set (practical and defensible):

- Leading indicators: indexation coverage, impressions, average position/rank distribution, clicks/CTR
- Lagging indicators: organic sessions, conversions, revenue (where applicable)

Most teams also need one operational metric: cycle time (idea → published). It’s your clearest lever for increasing learning loops.
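Cycle time is simple to compute once each piece of content has an idea date and a published date recorded. The sketch below assumes you can export those two dates per page. The function names are hypothetical, and using the median rather than the mean is a choice made for this example so that one unusually slow page does not dominate the number.

```python
from datetime import date
from statistics import median


def cycle_time_days(idea_date: date, published_date: date) -> int:
    """Days from idea to published for a single piece of content."""
    if published_date < idea_date:
        raise ValueError("published_date cannot be earlier than idea_date")
    return (published_date - idea_date).days


def median_cycle_time(items: list[tuple[date, date]]) -> float:
    """Median idea-to-published time across (idea_date, published_date) pairs."""
    return median(cycle_time_days(idea, published) for idea, published in items)


shipped = [
    (date(2024, 1, 1), date(2024, 1, 15)),
    (date(2024, 1, 3), date(2024, 2, 2)),
    (date(2024, 1, 10), date(2024, 1, 31)),
]
print(median_cycle_time(shipped))
```

Tracking this figure week over week shows whether workflow changes are actually shortening the path from idea to published.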

Implementation artifacts:

- Weekly SEO ops review agenda
  - What shipped (new pages, refreshes, fixes)
  - What changed in performance (wins/losses, anomalies)
  - Top 3 actions for next week (with owners)
  - Blockers (systems, approvals, assets)
- Experiment / change log template
  - URL
  - Hypothesis
  - Change made
  - Date shipped
  - Expected metric movement
  - Observed result (after X days/weeks)
  - Next action (repeat, iterate, revert)
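The experiment / change log template above maps naturally onto a small record type. The following is one possible shape, assuming the log is kept somewhere a script can read it. `ChangeLogEntry`, `review_after_days`, and `entries_due_for_review` are hypothetical names, and the observation window is left as a required input because the template does not fix a value for it.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class ChangeLogEntry:
    """One row of the experiment / change log (field names are illustrative)."""

    url: str
    hypothesis: str
    change_made: str
    date_shipped: date
    expected_metric_movement: str
    review_after_days: int  # observation window; chosen by the team, no default
    observed_result: Optional[str] = None
    next_action: Optional[str] = None  # "repeat", "iterate", or "revert"

    def review_due(self, today: date) -> bool:
        # Due when the window has elapsed and no result has been recorded yet.
        due_on = self.date_shipped + timedelta(days=self.review_after_days)
        return self.observed_result is None and today >= due_on


def entries_due_for_review(log: list[ChangeLogEntry], today: date) -> list[ChangeLogEntry]:
    """Entries to bring to the weekly ops review."""
    return [entry for entry in log if entry.review_due(today)]


log = [
    ChangeLogEntry("https://example.com/a", "Clearer title lifts CTR",
                   "Rewrote title tag", date(2024, 3, 1), "CTR up", 28),
    ChangeLogEntry("https://example.com/b", "More internal links lift rank",
                   "Added internal links", date(2024, 3, 1), "Position up", 28,
                   observed_result="No change", next_action="iterate"),
    ChangeLogEntry("https://example.com/c", "Refreshed intro lifts clicks",
                   "Rewrote intro", date(2024, 3, 20), "Clicks up", 28),
]
due = entries_due_for_review(log, today=date(2024, 4, 1))
print([entry.url for entry in due])
```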

Next step: if you want to reduce cycle time and make results easier to prove, map your current workflow against Unify → Automate → Measure and identify the single biggest bottleneck in each step.

Operating model: roles, responsibilities, and cadence

Operations fail when ownership is fuzzy. Use a lightweight RACI-style model so each step has one responsible owner and a clear handoff.

Role clarity (simple RACI)

- Head of SEO / Growth: accountable for targets, prioritization, and reporting narrative
- SEO lead (or strategist): responsible for briefs, on-page standards, internal linking rules, and QA acceptance
- Content lead/editor: responsible for editorial quality, review, and consistency across writers
- Writer: responsible for a draft that meets the brief and Definition of Done requirements
- Visual ops/designer (if applicable): responsible for image creation workflow and asset readiness
- Publisher (may be SEO/content): responsible for CMS formatting, publishing, and final QA
- Analyst (optional): responsible for measurement cadence, dashboards, and change log hygiene
- Developer (optional): responsible for technical fixes that require engineering support

Cadence that keeps the gap from reopening

- Weekly: SEO ops review (ship list + performance shifts + next actions)
- Monthly: content refresh sprint (update existing winners and fix underperformers)
- Quarterly: strategy recalibration (what to double down on, what to stop)

Common pitfalls when fixing SEO operations (and how to avoid them)

Over-optimizing for output instead of outcomes

Publishing volume feels productive, but it’s not the same as growth. Guardrail: every ship cycle should include measurement and a refresh loop, not just net-new pages.

Buying tools without changing the workflow

Tools won’t fix unclear ownership, missing SOPs, or inconsistent QA. Guardrail: define your workflow and Definition of Done first, then add systems that reduce manual steps.

No feedback loop between publishing and performance

If performance insights don’t flow back into briefs and updates, the gap reopens. Guardrail: maintain a change log and use it in weekly ops reviews.

Templates & checklists

1) SEO Ops Gap Scorecard (repeatable)

- Velocity: 1 (slow, many handoffs) to 5 (fast, standardized, few bottlenecks)
- Visibility: 1 (silos, manual reporting) to 5 (single source of truth)
- Verifiability: 1 (outputs only) to 5 (actions tied to outcomes/ROI)

2) Definition of Done (publish QA)

- Search intent met clearly in the first screen
- Clean heading structure (H1/H2/H3)
- Title + meta description finalized
- Internal links added intentionally (not random)
- Images included and optimized (file name, alt text where appropriate)
- Post-publish check scheduled (indexing + early performance)

3) Weekly SEO ops review (30 minutes)

- What shipped last week (list URLs)
- What moved (impressions, clicks, rankings, conversions where applicable)
- What we learned (keep to 3 bullets)
- Next week’s priorities (3–5 actions, assign owners)
- Blockers and fixes (systems, approvals, missing assets)

4) Content refresh prioritization rubric

Score pages 1–5 on each dimension. Refresh highest total first.

- Opportunity: impressions high but clicks/CTR low
- Proximity: ranking positions 4–20 (close to meaningful gains)
- Business value: aligned to revenue/conversions (where applicable)
- Decay: declining traffic over last 3–6 months
- Effort: quick wins vs. major rewrite
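The rubric above is a sum-and-sort, which can be sketched as below. Two assumptions are baked into this example and are not stated in the rubric: all five dimensions are weighted equally, and Effort is scored so that 5 means a quick win and 1 means a major rewrite (otherwise a high Effort score would push hard rewrites to the top). The names `RefreshCandidate` and `prioritize` and the example URLs are hypothetical.

```python
from dataclasses import dataclass

DIMENSIONS = ("opportunity", "proximity", "business_value", "decay", "effort")


@dataclass(frozen=True)
class RefreshCandidate:
    """A page scored 1-5 on each rubric dimension."""

    url: str
    opportunity: int      # high impressions but low clicks/CTR
    proximity: int        # ranking in positions 4-20
    business_value: int   # aligned to revenue/conversions
    decay: int            # declining traffic over recent months
    effort: int           # assumption: 5 = quick win, 1 = major rewrite

    def __post_init__(self) -> None:
        for name in DIMENSIONS:
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def total(self) -> int:
        # Equal weighting is an assumption of this sketch.
        return sum(getattr(self, name) for name in DIMENSIONS)


def prioritize(candidates: list[RefreshCandidate]) -> list[RefreshCandidate]:
    """Highest total first; ties keep their original order."""
    return sorted(candidates, key=lambda c: c.total, reverse=True)


pages = [
    RefreshCandidate("https://example.com/a", 5, 4, 3, 2, 4),
    RefreshCandidate("https://example.com/b", 2, 2, 5, 1, 1),
    RefreshCandidate("https://example.com/c", 4, 5, 4, 3, 3),
]
for page in prioritize(pages):
    print(page.total, page.url)
```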

Next steps: choose your path to closing the gap

You have two practical paths:

- DIY operational upgrade: implement the scorecard, create a Definition of Done, run weekly ops reviews, and build a change log + refresh cadence.
- Unified operating system approach: if fragmentation and manual steps are the bottleneck, consider a system designed to close the Operations Gap by unifying data, accelerating workflows, and connecting actions to outcomes.

Close the SEO Operations Gap with Go/Organic

Go/Organic is an SEO Operating System built to close the Operations Gap between content creation and measurable results by focusing on three outcomes: unify your stack, automate your workflow from idea → illustrated → published, and measure what matters with a unified dashboard narrative.

See the SEO Operating System

FAQs

What does “SEO Operations Gap” mean?

It’s the disconnect between SEO work getting done (content, updates, publishing tasks) and being able to reliably produce and prove measurable organic results—usually caused by fragmented tools, manual workflows, and siloed data.

How do I know if my team has an SEO Operations Gap?

Common signs include long time-to-publish, repeated handoffs, inconsistent on-page standards, reporting that takes days, unclear ownership, and difficulty tying specific SEO actions to performance changes.

Is the SEO Operations Gap a content problem or a systems problem?

Most often it’s a systems problem. Content quality matters, but without unified data, a repeatable workflow, and measurement that connects actions to outcomes, teams struggle to scale results.

What’s the fastest way to reduce SEO cycle time?

Map your current workflow end-to-end, remove unnecessary handoffs, standardize a Definition of Done, and automate repeatable steps (drafting, visuals, publishing, and QA) where appropriate.

What should an SEO team measure to prove ROI?

Track leading indicators (indexation, impressions, rankings) alongside lagging indicators (organic sessions, conversions, revenue where applicable), and maintain an experiment/change log so performance shifts can be tied to specific actions.

How often should we run SEO ops reviews?

A weekly ops review is typically the minimum to keep shipping, measurement, and prioritization aligned; pair it with a monthly refresh sprint to systematically update existing content.

Do I need to replace my current tools to close the gap?

Not always. Many teams can improve with clearer SOPs and measurement. The key is reducing fragmentation—either by unifying systems or ensuring your stack behaves like a single source of truth.

What’s the difference between SEO strategy and SEO operations?

Strategy defines what to do and why (targets, positioning, priorities). Operations is how work moves from idea to published to measured—reliably, repeatedly, and with clear accountability.
