SEO Operations Best Practices: The SEO Operations Playbook

Most SEO programs don’t fail because the strategy is wrong—they fail because execution is inconsistent, slow, and hard to measure. This playbook shows you how to close the Operations Gap between content creation and measurable organic results.

What you’ll learn: how to diagnose your SEO ops bottlenecks, standardize people/process/tooling, ship work with less rework, and report outcomes in a way stakeholders trust.

- How to spot the Operations Gap (and fix it)
- A maturity model to prioritize your next operational improvements
- Step-by-step workflows for planning, production, technical SEO, and reporting
- Copy/paste templates and checklists your team can implement this week

SEO operations best practices (what it is + why it matters)

Definition: SEO ops vs. SEO strategy vs. content marketing ops

SEO strategy defines what you’re going after and why (targets, audience intent, topic map, positioning, priorities). SEO operations defines how work gets done repeatedly (intake, briefs, QA, publishing, change logs, reporting cadence). Content marketing ops is broader—covering editorial operations across channels—while SEO ops is specifically focused on organic search execution and measurement.

If strategy is the map, SEO ops is the operating system that gets you to the destination—reliably.

The common failure mode: the Operations Gap

The villain is the Operations Gap: disconnected tools, manual processes, and data silos that slow teams down and obscure ROI. The result is a familiar loop:

- Priorities shift weekly because the backlog isn’t credible
- Publishing takes too long due to handoffs and rework
- Reporting becomes a monthly scramble, not a decision tool
- Wins are hard to attribute, so budgets and buy-in weaken

What “good” looks like: reliable velocity + quality + measurable impact

High-performing SEO operations are not “fast at publishing.” They’re fast at producing measured outcomes with consistent quality:

- Velocity: predictable throughput from brief → publish → iterate
- Quality: fewer defects (metadata, internal links, schema, indexing issues), fewer rewrites
- Impact: clear connection between actions (publishes/updates/fixes) and results (traffic, conversions, revenue/pipeline where available)

Quick self-assessment: where your SEO ops are breaking

Symptoms checklist

Use this to identify your most expensive bottleneck. If you check 5+ items, you likely have an Operations Gap problem more than a strategy problem.

- Slow publishing: a “simple” post takes weeks due to handoffs and approvals
- Unclear priorities: teams don’t know what matters most this sprint/month
- Reporting chaos: metrics live in spreadsheets; numbers don’t match across stakeholders
- Rework loops: pages go back and forth for missing SEO basics (titles, H1s, internal links, images, schema)
- Technical black box: SEO tickets disappear into dev queues with no SLAs
- No refresh motion: content decays silently until traffic drops become obvious
- Attribution fog: you can’t confidently say what changed and what it affected

Maturity model (Level 1–4): ad hoc → standardized → automated → optimized

- Level 1: Ad hoc. Heroics, tribal knowledge, inconsistent QA, reactive reporting.
- Level 2: Standardized. Documented SOPs, defined owners, repeatable briefs, consistent QA checks.
- Level 3: Automated. Routine steps are automated (handoffs, publishing steps, reporting pulls) with clear guardrails.
- Level 4: Optimized. Feedback loops are tight: measurement drives backlog, refreshes are systematic, and experiments compound learning.

Most teams should aim to get to Level 2 first. Standardization unlocks everything else.

Pick your bottleneck: stack, workflow, or measurement

To move quickly, pick one primary constraint:

- Stack bottleneck: too many tools, disconnected data, no single source of truth
- Workflow bottleneck: handoffs and unclear “definition of done” create rework
- Measurement bottleneck: you can’t connect SEO work to outcomes, so prioritization is guesswork

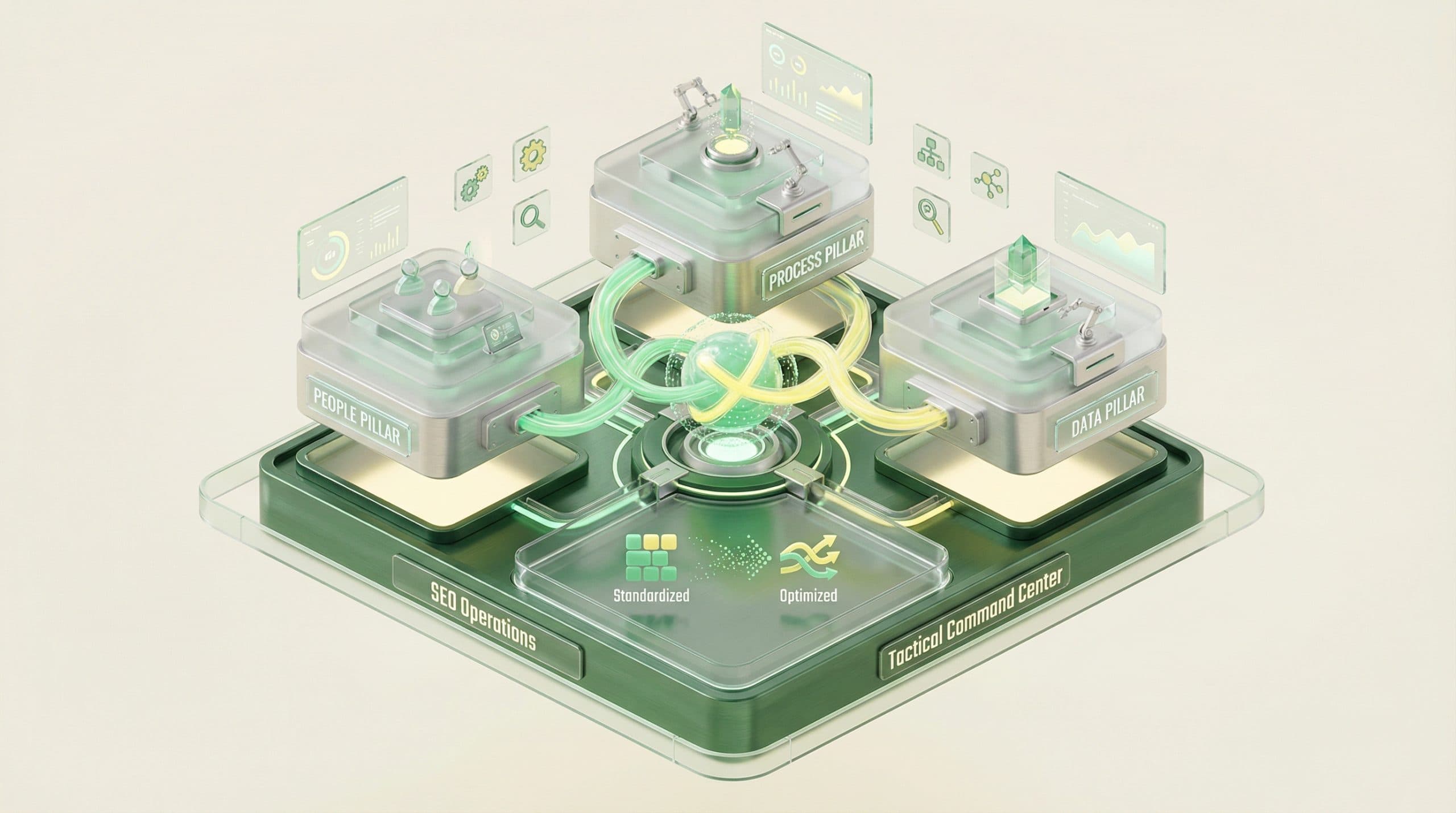

The core pillars of SEO operations (the operating model)

People: roles, responsibilities, and decision rights

SEO ops breaks when ownership is fuzzy. Define decision rights (who decides, who approves, who executes) across these common areas:

- SEO lead/head of growth: sets priorities, approves roadmap, owns KPI narrative
- SEO program manager (or ops owner): runs cadence, backlog hygiene, templates, QA gates
- Content lead/writers: own content drafts, updates, internal linking adherence
- Design/visuals: image standards, accessibility checks, reusable asset library
- Engineering: technical fixes, templates, release management, performance
- Analytics/revops (as applicable): conversion tracking alignment and reporting integrity

Best practice: For every recurring work type (new post, refresh, template change, redirects), assign one Directly Responsible Individual and one Approver.

Process: standardized workflows from idea → publish → update

Processes should be simple enough that the team actually follows them. A workable SEO workflow typically has:

- Intake and triage (what enters the system)
- Prioritization (what gets done next)
- Execution (content and technical)
- QA and publishing
- Measurement and learning (annotations, reporting, backlog updates)

Tooling & data: single source of truth and integration principles

Tooling is not about “more.” It’s about clean data flows that reduce manual work and contradictions. Best-practice principles:

- Single source of truth: one place for backlog + status + outcomes
- Consistent identifiers: stable URLs/page IDs, taxonomy, naming conventions
- Connected inputs: CMS + webmaster tools + analytics + ecommerce (if applicable)

Governance: QA, approvals, documentation, and change management

Governance is what prevents “fast” from becoming “messy.” Minimum governance for most teams:

- Definition of Ready (what a ticket/brief must include before work starts)
- Definition of Done (what must be true before publishing/shipping)
- QA gates (pre-publish checks, post-publish validation)
- Change log discipline (what changed, when, why)

Best practices for SEO planning & prioritization

Build a single prioritized backlog

Create one backlog that includes all SEO work types:

- Content: net-new pages, refreshes, consolidations, pruning
- On-page: internal linking, schema, CTR improvements, media
- Technical: indexation, templates, redirects, performance, crawl issues
- Ops: tracking fixes, dashboard improvements, SOP updates

This prevents “invisible work” from stealing capacity and makes tradeoffs explicit.

Use a simple prioritization framework (Impact × Effort × Confidence)

Keep scoring lightweight so it’s used consistently:

- Impact: expected upside on the primary KPI (e.g., conversions, revenue/pipeline where available, or qualified traffic)
- Effort: total time across roles (writing, editing, dev, approvals)
- Confidence: how sure you are (data quality, precedent, SERP stability)

Score = (Impact × Confidence) / Effort is often enough to prevent opinion wars.
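As a rough illustration, the scoring formula above can be applied to a small backlog in a few lines of Python. The 1–5 scale, the `BacklogItem` class, and the sample tickets are assumptions made for this sketch; the playbook does not prescribe a scale or a tool.

```python
from dataclasses import dataclass


@dataclass
class BacklogItem:
    """One backlog ticket, scored on an assumed 1-5 scale per dimension."""
    name: str
    impact: int       # expected upside on the primary KPI
    effort: int       # total time across roles
    confidence: int   # how sure you are

    def __post_init__(self):
        if self.effort <= 0:
            raise ValueError("effort must be positive")

    @property
    def score(self) -> float:
        # Score = (Impact x Confidence) / Effort
        return (self.impact * self.confidence) / self.effort


def rank(items):
    """Return items sorted from highest to lowest score."""
    return sorted(items, key=lambda item: item.score, reverse=True)


# Hypothetical tickets, for illustration only.
backlog = [
    BacklogItem("Refresh pricing guide", impact=4, effort=2, confidence=4),
    BacklogItem("New glossary hub", impact=5, effort=5, confidence=3),
    BacklogItem("Fix canonical tags on category template", impact=3, effort=1, confidence=5),
]

for item in rank(backlog):
    print(f"{item.score:5.1f}  {item.name}")
```

In this sample, the low-effort canonical fix ranks first even though its impact is the lowest of the three, which is the kind of tradeoff the score is meant to surface.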

Related: SEO backlog prioritization framework (Impact × Effort × Confidence)

Define “Definition of Ready” for content and technical tickets

When work starts without inputs, rework becomes inevitable. Require the basics upfront.

Definition of Ready (content):

- Target query theme and search intent
- SERP pattern notes (what’s ranking and why)
- Primary page goal (conversion path or next step)
- Internal links: required in/out links
- Brief outline and required sections
- Owner + due date + reviewers

Definition of Ready (technical):

- Problem statement and affected templates/URLs
- Reproduction steps and evidence (crawl sample, screenshots)
- SEO impact hypothesis and success metric
- Dependencies and rollout risk

Align SEO roadmap to business goals without overpromising

SEO ops credibility increases when you tie work to business objectives—but avoid fake precision. Use ranges and assumptions, and separate:

- What you control: pages published, updates shipped, technical fixes released
- What you influence: rankings, traffic, conversions
- What you can sometimes attribute: revenue/pipeline (depends on tracking and buying cycle)

Best practices for keyword research & content ops (repeatable production)

Standardize inputs for every piece of content

Most “keyword research” problems are actually brief quality problems. Standardize these inputs:

- Intent: what the searcher is trying to accomplish
- SERP pattern: the dominant content type/format that wins
- Angle: your differentiated approach (examples, templates, data, POV)
- Internal linking plan: what this page supports and what supports it
- Conversion path: what happens after the reader gets value

Content brief SOP (required sections, evidence, examples, on-page requirements)

A strong brief reduces editing cycles and ensures SEO essentials aren’t “optional.” Include:

- Working title + intent statement
- H1/H2 outline
- Key points that must be covered (including counterpoints)
- Required examples, checklists, and “common pitfalls”
- On-page requirements: internal links, schema needs (if applicable), media notes, FAQs

Related: Content brief SOP template for SEO teams

Editorial calendar that accounts for refreshes (not just net-new)

Operationally, refreshes are often the highest ROI work—but they don’t happen unless scheduled. Calendar best practice:

- Plan a split between net-new and refresh capacity
- Create a recurring review queue (monthly/quarterly depending on site size)
- Define refresh triggers: traffic decay, ranking drops, outdated info, conversion underperformance
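To make refresh triggers concrete, here is a minimal sketch that flags a page against the four triggers listed above. Every threshold (20% click decay, a 3-position drop, 365 days since the last update, half the site conversion rate) and the `PageStats` fields are assumptions for illustration; tune them to your own data. Page age is used only as a rough proxy for outdated information.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class PageStats:
    """Hypothetical per-page metrics comparing two equal periods."""
    url: str
    clicks_prev: int
    clicks_now: int
    position_prev: float   # average position, lower is better
    position_now: float
    last_updated: date
    conversion_rate: float


def refresh_triggers(page, today, site_conversion_rate,
                     decay_threshold=0.20, position_drop=3.0,
                     max_age_days=365, conversion_ratio=0.5):
    """Return the list of refresh triggers a page currently hits."""
    triggers = []
    if page.clicks_prev > 0:
        decay = (page.clicks_prev - page.clicks_now) / page.clicks_prev
        if decay >= decay_threshold:
            triggers.append("traffic decay")
    # A higher position number means the page ranks lower than before.
    if page.position_now - page.position_prev >= position_drop:
        triggers.append("ranking drop")
    if (today - page.last_updated).days > max_age_days:
        triggers.append("possibly outdated")
    if page.conversion_rate < site_conversion_rate * conversion_ratio:
        triggers.append("conversion underperformance")
    return triggers


page = PageStats(
    url="https://example.com/guide",
    clicks_prev=1000, clicks_now=700,
    position_prev=4.0, position_now=8.5,
    last_updated=date(2023, 1, 10),
    conversion_rate=0.02,
)
print(refresh_triggers(page, today=date(2024, 6, 1), site_conversion_rate=0.03))
```

Pages that hit one or more triggers can then be added to the recurring review queue rather than waiting for someone to notice the drop.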

Related: Content refresh workflow (how to update and re-publish strategically)

Quality control: E-E-A-T signals, accuracy checks, and brand voice consistency

Quality is an operational system, not a writer trait. Bake QC into the workflow:

- Accuracy: cite sources, verify claims, update dates when content changes meaningfully
- Experience: include practitioner steps, screenshots, or real-world caveats where relevant
- Authority: align to in-house expertise; avoid speculative claims
- Trust: clear authorship, transparent updates, consistent standards

Best practices for on-page SEO execution (reduce rework)

On-page checklist: titles, headings, internal links, schema, media, accessibility

Use a consistent checklist so basic issues don’t create publishing delays.

- Title tag: clear promise, intent match, no keyword stuffing
- H1/H2 structure: scannable, covers user questions, avoids duplication
- Internal links: add contextual links to and from relevant hub/cluster pages
- Schema: apply where appropriate (e.g., FAQ markup when FAQs are present)
- Media: original/relevant visuals; consistent file naming and compression
- Accessibility: descriptive alt text, logical heading order

Related: Pre-publish SEO QA checklist (on-page + technical)

Template-driven pages vs. bespoke pages: when to standardize

Standardize pages when you have repeatable patterns (e.g., location pages, product categories, glossary entries). Use bespoke pages for high-stakes topics that require deeper narrative, custom examples, or novel structure.

Best practice: define template requirements once (headings, modules, internal links, schema) and enforce via QA gates.

Image/visual workflow: consistent naming, alt text, and reuse rules

Visuals become an ops tax when there’s no standard. Define:

- Naming convention (topic + date/version)
- Alt-text rules (describe function, not just keywords)
- Reuse policy (what can be reused, when to create new)
- Storage location and ownership

Pre-publish QA and post-publish validation

Two gates prevent the most common failures:

- Pre-publish QA: metadata, headings, links, images, schema needs, tracking tags
- Post-publish validation: page renders correctly, indexability checks, internal links function, canonical is correct
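Some of these checks are mechanical enough to script. The sketch below uses only Python's standard-library `html.parser` to check a page's HTML for a title, a single H1, the expected canonical, a `noindex` directive, and images without alt text. The `PageAudit` class, the `qa_issues` function, and the sample markup are hypothetical. It covers only a subset of the gates above and does not replace human review.

```python
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Collects a few on-page signals while parsing an HTML document."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.h1_count = 0
        self.canonical = None
        self.noindex = False
        self.images_missing_alt = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "link" and (a.get("rel") or "").lower() == "canonical":
            self.canonical = a.get("href")
        elif tag == "meta" and (a.get("name") or "").lower() == "robots":
            self.noindex = "noindex" in (a.get("content") or "").lower()
        elif tag == "img" and not (a.get("alt") or "").strip():
            # Note: purely decorative images may use an empty alt on purpose.
            self.images_missing_alt += 1

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def qa_issues(html, expected_canonical):
    """Return a list of human-readable QA issues found in the HTML."""
    audit = PageAudit()
    audit.feed(html)
    issues = []
    if not audit.title.strip():
        issues.append("missing title tag")
    if audit.h1_count != 1:
        issues.append(f"expected one H1, found {audit.h1_count}")
    if audit.canonical != expected_canonical:
        issues.append("canonical missing or incorrect")
    if audit.noindex:
        issues.append("page is set to noindex")
    if audit.images_missing_alt:
        issues.append(f"{audit.images_missing_alt} image(s) missing alt text")
    return issues


sample = """<html><head><title>SEO ops guide</title>
<link rel="canonical" href="https://example.com/seo-ops">
<meta name="robots" content="index,follow"></head>
<body><h1>SEO ops</h1><h1>Duplicate</h1><img src="a.png"></body></html>"""

print(qa_issues(sample, "https://example.com/seo-ops"))
```

An empty list means the page passed these particular checks; anything else goes back to the owner before the ticket is marked done.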

Best practices for technical SEO operations (make it operational, not heroic)

Create a technical SEO intake + triage process with SLAs

Technical SEO often stalls because it competes with product work. Fix this with an explicit intake and triage path:

- Single intake channel (form, backlog lane, or ticket type)
- Triage categories (blocking, high impact, maintenance)
- Service levels (e.g., blocking issues acknowledged within X days; maintenance bundled into releases)
- Clear acceptance criteria

Related: Technical SEO ops: intake, triage, and SLAs with dev teams

Recurring audits: crawl health, indexation, templates, performance

Don’t wait for traffic drops. Put recurring checks on a calendar:

- Crawl/indexation: coverage changes, noindex mistakes, robots.txt issues
- Template health: canonicals, pagination, structured data validity
- Performance: page speed regressions, heavy media, script bloat

Release management: ship SEO changes safely

SEO fixes can have site-wide impact. Operational best practice:

- Test on staging where possible (rendering, canonicals, indexability)
- Roll out gradually for risky template changes
- Keep a rollback plan
- Annotate releases so performance changes are interpretable

Documentation: what to log so you can attribute impact later

At minimum, log:

- What changed (URL pattern/template/module)
- When it changed (date/time + rollout window)
- Why it changed (hypothesis)
- How you’ll measure success (metric + timeframe)

Related: SEO change log template (for attribution and learning)

Best practices for measurement & reporting (connect ops actions to ROI)

Define your KPI stack: leading indicators vs. lagging indicators

Best practice is to measure both execution and outcomes.

- Leading indicators (ops + early SEO signals): briefs created, pages published, refreshes completed, time-to-publish, indexation rate, crawl errors, CTR improvements
- Lagging indicators (business outcomes): qualified organic traffic, conversions, and revenue/pipeline where available

Related: SEO reporting dashboard KPIs (leading vs lagging indicators)

Dashboards: what to include

A practical dashboard answers: (1) what did we do, (2) what changed, (3) what do we do next.

- Visibility: impressions, average position or rank groups, index coverage signals (where available)
- Demand capture: clicks/sessions from organic
- Outcomes: conversions; revenue/pipeline where available and trusted
- Ops throughput: publish/update counts, cycle times, defect rate from QA
- Notes: annotations for launches, refreshes, template changes
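The ops throughput row is usually the easiest to compute yourself. As a sketch, assuming each shipped item records a brief date, a publish date, and a count of defects caught in QA (hypothetical field names), cycle time and defect rate reduce to a few lines. Defect rate is defined here as the share of items with one or more QA defects, which is one reasonable definition among several.

```python
from datetime import date
from statistics import median

# Hypothetical shipped items for one reporting period.
shipped = [
    {"briefed": date(2024, 5, 1), "published": date(2024, 5, 15), "qa_defects": 0},
    {"briefed": date(2024, 5, 3), "published": date(2024, 5, 31), "qa_defects": 2},
    {"briefed": date(2024, 5, 10), "published": date(2024, 5, 20), "qa_defects": 1},
]


def throughput_summary(items):
    """Summarize publish count, median brief-to-publish days, and QA defect rate."""
    if not items:
        raise ValueError("no items shipped in this period")
    cycle_days = [(item["published"] - item["briefed"]).days for item in items]
    with_defects = sum(1 for item in items if item["qa_defects"] > 0)
    return {
        "published": len(items),
        "median_cycle_days": median(cycle_days),
        "defect_rate": with_defects / len(items),
    }


print(throughput_summary(shipped))
```

The median is used instead of the mean so that one stalled item does not dominate the cycle-time figure.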

Annotation discipline: track what changed and when

Annotations are the bridge between ops and ROI. Without them, you can’t learn. Make it a required step in your Definition of Done for:

- New page publishes
- Meaningful refreshes (not typo fixes)
- Internal linking pushes
- Template or technical releases

Attribution expectations: what SEO can and can’t prove

SEO is measurable, but rarely perfectly attributable. Best practice is to be explicit:

- What tracking exists today (and gaps)
- What outcomes can be tied to organic with confidence
- What will be directional (especially for longer sales cycles)

Best practices for workflow automation (increase velocity without sacrificing quality)

Where automation helps most

Automation should remove repetitive steps and handoff friction, not remove accountability. High-leverage areas:

- Turning standardized briefs into first drafts (with human review)
- Creating and managing consistent visuals and formatting
- Reducing publishing steps and manual CMS entry
- Streamlining reporting pulls and recurring views

Related: Workflow automation for SEO teams (where to automate + guardrails)

Guardrails: QA gates, approvals, and human review points

To automate safely, define explicit gates:

- Human review for accuracy, brand voice, and compliance
- Pre-publish QA checklist completion
- Post-publish validation and annotation

Avoiding “automation debt”

Automation debt builds up when workflows change but the SOPs and documentation behind the automation don’t. Prevent it by:

- Versioning SOPs and templates
- Reviewing workflows quarterly
- Keeping one owner responsible for ops documentation

If manual workflows and disconnected reporting are slowing your team down, a structured pilot can help you unify execution and measurement.

Close the SEO Operations Gap in 30 days

- Unify your stack so work and outcomes live in one place
- Streamline workflow to increase velocity without losing QA
- Establish reporting that ties actions to outcomes

Book a 30-Day Pilot

Best practices for SEO tooling & stack unification (single source of truth)

Principles: fewer tools, clearer ownership, cleaner data flows

The goal isn’t “one tool for everything.” It’s one operational truth for what’s being worked on and what it produced.

- Minimize duplicate sources of metrics
- Clarify who owns each dataset and dashboard
- Standardize naming conventions (campaigns, page types, categories)

What to connect first: CMS + webmaster tools + ecommerce (if applicable)

To close the Operations Gap, connect the systems that govern execution and signals:

- CMS: where pages are created and published
- Webmaster tools: where search visibility and indexation signals live
- Ecommerce/transaction layer: where revenue signals may exist (if relevant)

Data hygiene: naming conventions, page IDs, taxonomy, consistent tracking

Data hygiene is the unsexy multiplier. Best practices:

- Stable URL rules and redirect standards
- Clear taxonomy (categories, tags) that matches how you plan topics
- Consistent conversion tracking definitions (what counts as a lead, signup, purchase)

Related: SEO tooling stack unification (single source of truth principles)

Operating cadence: meetings, rituals, and SLAs that keep SEO moving

Weekly: backlog grooming + production standup

- Confirm priorities and remove blockers
- Review throughput and cycle time (where did work stall?)
- Ensure every item has owner, next step, and due date

Biweekly/monthly: performance review + refresh planning

- Review winners/losers and decide actions (refresh, consolidate, expand, prune)
- Check indexation and technical health trends
- Update the next month’s content + refresh plan

Quarterly: roadmap reset + technical debt review

- Re-score backlog using Impact × Effort × Confidence
- Review technical debt and template risks
- Align SEO initiatives to business goals and constraints

Cross-functional SLAs: content, dev, design, and growth

SLAs don’t need to be legal documents. They need to be explicit expectations that prevent silent queue death:

- Response time for new tickets
- Standard turnaround windows (by priority)
- Approval timelines and escalation paths

Templates & checklists (copy/paste)

1) SEO ops scorecard (maturity + bottlenecks)

Score each 1–4 (Ad hoc → Optimized):

- Backlog and prioritization
- Content brief quality and consistency
- QA gates (pre + post publish)
- Technical intake + SLAs
- Change logging and annotations
- Dashboard credibility (one set of numbers)
- Refresh program
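Once each area is scored, a short script can point to the weakest ones. This is a sketch only: the sample scores are invented, and treating the lowest-scoring areas as the bottleneck is an assumption for illustration, not a rule from the maturity model.

```python
LEVELS = {1: "Ad hoc", 2: "Standardized", 3: "Automated", 4: "Optimized"}


def summarize_scorecard(scores):
    """Return the average score, the lowest level reached, and the areas at that level."""
    for area, score in scores.items():
        if score not in LEVELS:
            raise ValueError(f"{area}: score must be 1-4, got {score}")
    lowest = min(scores.values())
    return {
        "average": round(sum(scores.values()) / len(scores), 2),
        "lowest_level": LEVELS[lowest],
        "bottlenecks": [area for area, score in scores.items() if score == lowest],
    }


# Hypothetical self-assessment using the seven areas above.
scores = {
    "Backlog and prioritization": 2,
    "Content brief quality and consistency": 3,
    "QA gates (pre + post publish)": 2,
    "Technical intake + SLAs": 1,
    "Change logging and annotations": 1,
    "Dashboard credibility (one set of numbers)": 2,
    "Refresh program": 2,
}

print(summarize_scorecard(scores))
```

In this sample, technical intake and change logging are still at Level 1, so they would be the first candidates for standardization.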

Related: SEO ops maturity model (levels + scorecard)

2) Content brief template (minimum viable)

- Primary keyword/theme:
- Search intent:
- SERP pattern notes:
- Angle/differentiation:
- Audience + stage:
- Outline (H2s/H3s):
- Required examples/checklists:
- Internal links (in/out):
- CTA path:
- QA requirements: title/meta, headings, schema need, media, accessibility

3) Pre-publish QA checklist (quick)

- Title tag and meta description present and accurate
- One H1; headings are logical and scannable
- Internal links added (hub + relevant clusters)
- Images named appropriately; alt text added
- Schema applied where appropriate
- Page is indexable; canonical is correct
- Conversion path exists (next step is clear)

4) SEO change log template

- Date:
- Change type: publish / refresh / internal links / template / redirect / other
- Scope: URLs affected
- Hypothesis:
- Success metric:
- Owner:
- Notes/risks:
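If the change log lives in a spreadsheet export or a small script, the template maps directly onto a record type. The sketch below mirrors the fields above and adds a hypothetical helper, `changes_before`, that lists what shipped in the weeks before a metric moved. The 28-day lookback and the sample entries are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ChangeLogEntry:
    """One row of the SEO change log, mirroring the template fields."""
    changed_on: date
    change_type: str       # publish / refresh / internal links / template / redirect / other
    scope: str             # URLs affected
    hypothesis: str
    success_metric: str
    owner: str
    notes: str = ""


def changes_before(entries, metric_shift_date, lookback_days=28):
    """Return entries logged within the lookback window before a metric shift."""
    start = metric_shift_date - timedelta(days=lookback_days)
    return [e for e in entries if start <= e.changed_on <= metric_shift_date]


# Hypothetical log entries.
log = [
    ChangeLogEntry(date(2024, 1, 15), "redirect", "/old-guide -> /guide",
                   "Consolidate link equity", "clicks to /guide", "dev"),
    ChangeLogEntry(date(2024, 3, 4), "refresh", "/guide",
                   "Updated examples improve CTR", "CTR on /guide", "content"),
    ChangeLogEntry(date(2024, 3, 20), "template", "/category/*",
                   "Fixing canonicals improves indexation", "indexed category pages", "dev"),
]

for entry in changes_before(log, metric_shift_date=date(2024, 3, 25)):
    print(entry.changed_on, entry.change_type, entry.scope)
```

A lookup like this only narrows down candidate explanations. It does not prove that a logged change caused the shift.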

5) Reporting template (KPIs + insights + next actions)

- What we shipped: publishes, refreshes, fixes
- What moved: visibility, traffic, conversions, revenue/pipeline where available
- What we learned: winners/losers + why
- What we’ll do next: top priorities and expected impact

Common pitfalls (and how to fix them)

Publishing faster but not measuring impact

Fix: Make change logging and dashboard review part of “done.” If it isn’t measured, it isn’t finished.

Measuring everything but not changing behavior

Fix: Tie metrics to decisions. Every report should end with a prioritized next-action list.

Too many tools, no owner

Fix: Assign tool and dashboard owners. Consolidate where possible and standardize definitions.

No refresh process (content decays silently)

Fix: Schedule refresh capacity and define triggers. Treat updates as first-class backlog items.

SEO depends on heroics instead of systems

Fix: Standardize Level 2 basics: definitions of ready/done, QA checklists, and a weekly cadence. Automation comes after consistency.

How Go/Organic approaches SEO operations (closing the Operations Gap)

Go/Organic positions itself as the SEO Operating System, built to close the Operations Gap between content execution and measurable results by unifying workflows and measurement in one operating model.

Unify your stack (single source of truth)

Operationally, unification starts with connecting where work ships and where signals live. Go/Organic supports two-way integrations including WordPress and WooCommerce, and connects with Bing Webmaster Tools. Optional connections may include Google Search Console and Shopify (availability depends on setup).

Automate your workflow (Velocity Engine™ with guardrails)

To increase velocity, Go/Organic describes its Velocity Engine™ as taking content from idea → illustrated → published in minutes, supported by content and visual operations workflows. Best practice still applies: keep human review and QA gates to protect accuracy and brand consistency.

Measure what matters (connect ops actions to ROI)

The goal of SEO ops measurement is simple: connect what you shipped to what changed. Go/Organic’s approach emphasizes a unified dashboard that ties operational actions to outcomes and ROI where data is available.

Want a reference model for how an SEO team can run on an operating system (not a collection of disconnected tasks)?

See what an SEO Operating System looks like

- Unify data, content, visuals, and publishing into one workflow
- Use it as a blueprint to reduce rework and improve reporting credibility

Explore the SEO Operating System

Frequently asked questions

What is SEO operations?

SEO operations is the set of people, processes, tools, and governance that turns SEO strategy into repeatable execution—so publishing, optimization, and technical work happen consistently and can be measured.

What are the most important SEO operations best practices?

Standardize your backlog and prioritization, document SOPs for content and technical work, implement QA gates before publishing, and build reporting that connects actions (publishes/updates/fixes) to outcomes (traffic, conversions, revenue/pipeline where available).

How do you measure SEO operations success?

Track both velocity and outcomes: production throughput (briefs → published), time-to-publish, refresh cadence, indexation/crawl health, and business KPIs like conversions and revenue/pipeline where your analytics allow.

What’s the difference between SEO strategy and SEO operations?

Strategy decides what to do and why (targets, positioning, topics). Operations ensures it actually gets done reliably (workflow, ownership, QA, tooling, reporting).

How do you prioritize SEO work in a backlog?

Use a simple scoring model built on Impact, Effort, and Confidence, such as Score = (Impact × Confidence) / Effort, and require a definition of ready so tickets include intent, target page, success metric, and dependencies before work starts.

How often should you refresh existing SEO content?

Set a recurring review cadence (often monthly/quarterly depending on site size) and prioritize refreshes based on decaying traffic, ranking drops, outdated information, or conversion underperformance.

What should be in an SEO reporting dashboard?

A practical dashboard includes visibility (rankings/coverage), demand capture (clicks/traffic), outcomes (conversions and revenue/pipeline where available), and operational metrics (publishing velocity, refreshes, technical fixes shipped), plus annotations for changes.

How can teams automate SEO workflows without losing quality?

Automate repetitive steps (formatting, publishing steps, reporting pulls) but keep human review and QA gates for accuracy, brand voice, and compliance—then document the workflow so it stays consistent.
